(PHP 4 >= 4.0.3, PHP 5, PHP 7, PHP

curl_error - Return a string containing the last error for the current session

Description

curl_error(CurlHandle $handle): string

Parameters

handle

A cURL handle returned by curl_init().

Return Values

Returns the error message or '' (the empty string) if no error occurred.

Changelog

| Version | Description |
|---|---|
| 8.0.0 | handle expects a CurlHandle instance now; previously, a resource was expected. |

Examples

Example #1 curl_error() example

<?php
// Create a curl handle to a non-existent location
$ch = curl_init('http://404.php.net/');
curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

if (curl_exec($ch) === false) {
    echo 'Curl error: ' . curl_error($ch);
} else {
    echo 'Operation completed without any errors';
}

// Close the handle
curl_close($ch);
?>

patrick at ibuildings dot nl

9 years ago

If you want to fetch the error message, make sure you fetch it before you close the current cURL session or the error message will be reset to an empty string.

paul at paulmcgarry dot com

14 years ago

For a 404 response to actually trigger an error, as the example seems to be trying to demonstrate, the following option should be set:

curl_setopt($ch,CURLOPT_FAILONERROR,true);

As per http://curl.haxx.se/libcurl/c/libcurl-errors.html

CURLE_HTTP_RETURNED_ERROR (22)

This is returned if CURLOPT_FAILONERROR is set TRUE and the HTTP server returns an error code that is >= 400. (This error code was formerly known as CURLE_HTTP_NOT_FOUND.)

anrdaemon at freemail dot ru

3 years ago

curl_error is not a textual representation of curl_errno.

It's an actual error *message*.

If you want textual representation of error *code*, look for curl_strerror.

Anonymous

2 years ago

If you're using curl_multi and there's an error, curl_error() will remain empty until you've called curl_multi_info_read(). That function "pumps" the information inside the curl libraries to the point where curl_error() will return a useful string.

This should really be added to the documentation, because it's not at all obvious.

You can retrieve the cURL error after the request has executed:

$url = 'http://example.com';
$ch = curl_init($url);
curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
$result = curl_exec($ch);
if (curl_errno($ch)) {
    echo 'Request Error:' . curl_error($ch);
}

Here are the cURL error codes, in case someone needs more information about cURL errors:

<?php
// Map of cURL error numbers (as returned by curl_errno()) to their constant names
$error_codes = array(
    1 => 'CURLE_UNSUPPORTED_PROTOCOL',
    2 => 'CURLE_FAILED_INIT',
    3 => 'CURLE_URL_MALFORMAT',
    4 => 'CURLE_URL_MALFORMAT_USER',
    5 => 'CURLE_COULDNT_RESOLVE_PROXY',
    6 => 'CURLE_COULDNT_RESOLVE_HOST',
    7 => 'CURLE_COULDNT_CONNECT',
    8 => 'CURLE_FTP_WEIRD_SERVER_REPLY',
    9 => 'CURLE_REMOTE_ACCESS_DENIED',
    11 => 'CURLE_FTP_WEIRD_PASS_REPLY',
    13 => 'CURLE_FTP_WEIRD_PASV_REPLY',
    14 => 'CURLE_FTP_WEIRD_227_FORMAT',
    15 => 'CURLE_FTP_CANT_GET_HOST',
    17 => 'CURLE_FTP_COULDNT_SET_TYPE',
    18 => 'CURLE_PARTIAL_FILE',
    19 => 'CURLE_FTP_COULDNT_RETR_FILE',
    21 => 'CURLE_QUOTE_ERROR',
    22 => 'CURLE_HTTP_RETURNED_ERROR',
    23 => 'CURLE_WRITE_ERROR',
    25 => 'CURLE_UPLOAD_FAILED',
    26 => 'CURLE_READ_ERROR',
    27 => 'CURLE_OUT_OF_MEMORY',
    28 => 'CURLE_OPERATION_TIMEDOUT',
    30 => 'CURLE_FTP_PORT_FAILED',
    31 => 'CURLE_FTP_COULDNT_USE_REST',
    33 => 'CURLE_RANGE_ERROR',
    34 => 'CURLE_HTTP_POST_ERROR',
    35 => 'CURLE_SSL_CONNECT_ERROR',
    36 => 'CURLE_BAD_DOWNLOAD_RESUME',
    37 => 'CURLE_FILE_COULDNT_READ_FILE',
    38 => 'CURLE_LDAP_CANNOT_BIND',
    39 => 'CURLE_LDAP_SEARCH_FAILED',
    41 => 'CURLE_FUNCTION_NOT_FOUND',
    42 => 'CURLE_ABORTED_BY_CALLBACK',
    43 => 'CURLE_BAD_FUNCTION_ARGUMENT',
    45 => 'CURLE_INTERFACE_FAILED',
    47 => 'CURLE_TOO_MANY_REDIRECTS',
    48 => 'CURLE_UNKNOWN_TELNET_OPTION',
    49 => 'CURLE_TELNET_OPTION_SYNTAX',
    51 => 'CURLE_PEER_FAILED_VERIFICATION',
    52 => 'CURLE_GOT_NOTHING',
    53 => 'CURLE_SSL_ENGINE_NOTFOUND',
    54 => 'CURLE_SSL_ENGINE_SETFAILED',
    55 => 'CURLE_SEND_ERROR',
    56 => 'CURLE_RECV_ERROR',
    58 => 'CURLE_SSL_CERTPROBLEM',
    59 => 'CURLE_SSL_CIPHER',
    60 => 'CURLE_SSL_CACERT',
    61 => 'CURLE_BAD_CONTENT_ENCODING',
    62 => 'CURLE_LDAP_INVALID_URL',
    63 => 'CURLE_FILESIZE_EXCEEDED',
    64 => 'CURLE_USE_SSL_FAILED',
    65 => 'CURLE_SEND_FAIL_REWIND',
    66 => 'CURLE_SSL_ENGINE_INITFAILED',
    67 => 'CURLE_LOGIN_DENIED',
    68 => 'CURLE_TFTP_NOTFOUND',
    69 => 'CURLE_TFTP_PERM',
    70 => 'CURLE_REMOTE_DISK_FULL',
    71 => 'CURLE_TFTP_ILLEGAL',
    72 => 'CURLE_TFTP_UNKNOWNID',
    73 => 'CURLE_REMOTE_FILE_EXISTS',
    74 => 'CURLE_TFTP_NOSUCHUSER',
    75 => 'CURLE_CONV_FAILED',
    76 => 'CURLE_CONV_REQD',
    77 => 'CURLE_SSL_CACERT_BADFILE',
    78 => 'CURLE_REMOTE_FILE_NOT_FOUND',
    79 => 'CURLE_SSH',
    80 => 'CURLE_SSL_SHUTDOWN_FAILED',
    81 => 'CURLE_AGAIN',
    82 => 'CURLE_SSL_CRL_BADFILE',
    83 => 'CURLE_SSL_ISSUER_ERROR',
    84 => 'CURLE_FTP_PRET_FAILED',
    85 => 'CURLE_RTSP_CSEQ_ERROR',
    86 => 'CURLE_RTSP_SESSION_ERROR',
    87 => 'CURLE_FTP_BAD_FILE_LIST',
    88 => 'CURLE_CHUNK_FAILED',
);
?>

I’m using curl at the command line on Linux to issue HTTP requests. The response bodies are printed to standard out, which is fine, but I can’t see from the man page how to get curl to print the HTTP status code from the response (404, 403 etc). Is this possible?

asked Apr 18, 2011 at 10:28


This should work for you if the web server is able to respond to HEAD requests (this will not perform a GET request):

curl -I http://www.example.org

As an addition, to let cURL follow redirects (3xx statuses) add -L.

answered Apr 18, 2011 at 10:56

pberlijn

A more specific way to print out just the HTTP status code is something along the lines of:

curl -s -o /dev/null -w "%{http_code}" http://www.example.org/

A lot easier to work with in scripts, as it doesn’t require any parsing

The -I parameter can be added to speed up the response. It changes the request to a HEAD request, which fetches only the response headers, without the body.

Note: %{http_code} reports the status code from the first line of the HTTP response (the available variables for the -w option are listed on the curl documentation page)

i.e.:

curl -s -o /dev/null -I -w "%{http_code}" http://www.example.org/
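For use in scripts, the one-liner above can be wrapped in a small helper. This is only a sketch: the function names http_status and url_is_ok are made up for illustration, and the only curl features it relies on are the -s, -o and -w options already shown.

```shell
# Print only the HTTP status code for a URL; the body is discarded.
http_status() {
    curl -s -o /dev/null -w '%{http_code}' "$1"
}

# Succeed (exit 0) only when the URL answers with a 2xx status.
url_is_ok() {
    case "$(http_status "$1")" in
        2??) return 0 ;;
        *)   return 1 ;;
    esac
}
```

It could then be used as `if url_is_ok http://www.example.org/; then echo up; fi`.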

answered Jun 28, 2012 at 0:25

pvandenberk

You can print the status code, in addition to all the headers by doing the following:

curl -i http://example.org

The good thing about -i is that it works with -X POST as well.

answered Dec 4, 2012 at 20:45

Cyril David

If you want to see the header as well as the result you can use the verbose option:

curl -v http://www.example.org

curl --verbose http://www.example.org

The status will appear in the header. E.g.

< Date: Tue, 04 Nov 2014 19:12:59 GMT

< Content-Type: application/json; charset=utf-8

< Status: 422 Unprocessable Entity

answered May 3, 2012 at 4:28

Enrico Susatyo

If you want to capture the HTTP status code in a variable, but still redirect the content to STDOUT, you must create two STDOUTs. You can do so with process substitution >() and command substitution $().

First, create a file descriptor 3 for your current process’ STDOUT with exec 3>&1.

Then, use curl’s -o option to redirect the response content to a temporary FIFO using process substitution, and within that process substitution, redirect the output back to your current process’ STDOUT via file descriptor 3 with -o >(cat >&3).

Putting it all together in bash 3.2.57(1)-release (standard for macOS):

# creates a new file descriptor 3 that redirects to 1 (STDOUT)

exec 3>&1

# Run curl in a separate command, capturing output of -w "%{http_code}" into HTTP_STATUS

# and sending the content to this command's STDOUT with -o >(cat >&3)

HTTP_STATUS=$(curl -w "%{http_code}" -o >(cat >&3) 'http://example.com')

Note that this doesn’t work in /bin/sh as SamK noted in the comments below.
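For shells without process substitution, one possible alternative (a sketch, not part of the answer above) is to have curl append the status code as the last line of its output with -w and split the two afterwards. The helper names response_status and response_body are hypothetical.

```shell
# Both helpers expect the text captured from:
#   out=$(curl -s -w '\n%{http_code}' "$url")

# Print the status code, which is the last line of the captured output.
response_status() {
    printf '%s\n' "$1" | tail -n 1
}

# Print everything except the last line, i.e. the response body.
response_body() {
    printf '%s\n' "$1" | sed '$d'
}
```

Because the status code is placed on its own line, a body that already ends in a newline comes back with one extra empty line at the end.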

answered Jan 8, 2015 at 20:59


Redefine curl output:

curl -sw '%{http_code}' http://example.org

Can be used with any request type.

answered Aug 5, 2014 at 18:18


Status code ONLY

[0]$ curl -LI http://www.example.org -o /dev/null -w '%{http_code}\n' -s

200

All credit to this GIST

answered Feb 8, 2017 at 10:44

mahatmanich

This is a painful curl --fail limitation. From man curl :

-f, --fail

(HTTP) Fail silently (no output at all) on server errors

But there is no way to get both the non-zero return code AND the response body in stdout.

Based on pvandenberk’s answer and this other very useful trick learned on SO, here is a workaround :

# Both pipelines below report curl's failure through $? only when
# 'set -o pipefail' is active; otherwise $? is the status of the last command.
curl_with_error_code () {
    _curl_with_error_code "$@" | sed '$d'
}

_curl_with_error_code () {
    local curl_error_code http_code
    exec 17>&1
    http_code=$(curl --write-out '\n%{http_code}\n' "$@" | tee /dev/fd/17 | tail -n 1)
    curl_error_code=$?
    exec 17>&-
    if [ $curl_error_code -ne 0 ]; then
        return $curl_error_code
    fi
    if [ "$http_code" -ge 400 ] && [ "$http_code" -lt 600 ]; then
        echo "HTTP $http_code" >&2
        return 127
    fi
}

This function behaves exactly like curl, but returns 127 (a return code not used by curl) when the HTTP code is in the range 400 to 599.
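On newer curl releases (7.76.0 and later) there is also a --fail-with-body option, which exits with status 22 on HTTP errors while still printing the response body. A minimal wrapper might look like the sketch below; the name fetch_with_body is invented for this example, and it assumes the installed curl is recent enough.

```shell
# Fetch a URL, print the body on stdout, and return curl's exit status.
# With --fail-with-body, curl exits with 22 for HTTP status >= 400
# but still writes the response body.
fetch_with_body() {
    curl --fail-with-body -sS "$@"
}
```

Unlike the workaround above, this reports HTTP errors with curl's own exit code 22 rather than 127.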

answered Apr 6, 2016 at 13:08

Lucas Cimon

This sends a request to the URL, takes only the first line of the response, splits it on spaces, and selects the second field, which contains the response code:

curl -I http://example.org 2>/dev/null | head -n 1 | cut -d$' ' -f2

answered Jul 15, 2015 at 20:08


For a POST request, the following worked:

curl -w 'RESP_CODE:%{response_code}' -s -X POST --data '{"asda":"asd"}' http://example.com --header "Content-Type:application/json"|grep -o 'RESP_CODE:[1-5][0-9][0-9]'

answered Jan 7, 2016 at 8:36

zafar142003

Use the following cURL command and pipe it to grep like so:

$ curl -I -s -L http://example.com/v3/get_list | grep "HTTP/1.1"

Here’s what each flag does:

- -I: Show only response headers
- -s: Silent; don’t show the progress bar
- -L: Follow Location: headers

Here is a link to HTTP status codes.

Run from the command line, this curl command runs in silent mode, follows any redirects, and gets the HTTP headers. grep then prints the HTTP status line to standard output.
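Note that grepping for "HTTP/1.1" prints one status line per redirect hop and misses responses served over other HTTP versions. A possible variation, sketched here with a made-up helper name final_status, is to let awk keep only the status code of the last response in the header stream:

```shell
# Read raw response headers on stdin (e.g. from `curl -sIL <url>`) and
# print the status code of the last response, whatever the HTTP version.
final_status() {
    awk '/^HTTP\// { code = $2 } END { sub(/\r$/, "", code); print code }'
}
```

Usage would be along the lines of `curl -sIL http://example.com/v3/get_list | final_status`.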

answered Nov 21, 2016 at 11:28

Here is a curl command that uses GET and returns the HTTP code.

curl -so /dev/null -w '%{response_code}' http://www.example.org

Please remember that the approach below uses HEAD, which is faster but may not work well with some less compliant HTTP servers.

curl -I http://www.example.org

answered Jun 23, 2016 at 10:37

sorin

curl -s -i -w "%{http_code}" http://www.any_example.com

This will return the following information:

- response data, if any data is returned by API like error

- status code

answered Mar 8, 2017 at 5:12

srana

An example of how to use the response codes. I use this to re-download Geolite databases only if they have changed (-z) & also following redirects (-L):

url=http://example.com/file.gz
file=$(basename "$url")
response=$(curl -L -s -o "$file" -z "$file" "$url" -w "%{http_code}")

case "$response" in
    200) do_something ;;
    301) do_something ;;
    304) printf "Received: HTTP $response (file unchanged) ==> $url\n" ;;
    404) printf "Received: HTTP $response (file not found) ==> $url\n" ;;
    *) printf "Received: HTTP $response ==> $url\n" ;;
esac

answered Apr 1, 2018 at 17:21

Split output content to stdout and HTTP status code to stderr:

curl http://www.example.org -o >(cat >&1) -w "%{http_code}\n" 1>&2

If only the HTTP status code is desired on stderr, --silent can be used:

curl --silent http://www.example.org -o >(cat >&1) -w "%{http_code}\n" 1>&2

The desired stream can then be picked by redirecting unwanted one to /dev/null:

$ (curl --silent http://www.example.org -o >(cat >&1) -w "%{http_code}" 1>&2) 1>/dev/null

200

$ (curl --silent http://www.example.org -o >(cat >&1) -w "%{http_code}" 1>&2) 2>/dev/null

<!doctype html>

...

Note that for the second redirection to behave as desired, we need to run the curl command in subshell.

answered Jun 4, 2019 at 8:08

Jaakko

The OP wants to know the status code. Often when downloading a file you also want to get a feel for its size, so I use curl to show the status code and the size of the file, with the progress output silenced, and direct the file to the place and name I want:

curl -R -s -S -w "\nhttp: %{http_code} %{size_download}\n" -o /Users/myfiles/the_local_name.html http://archive.onweb.com/the_online_name.html

Then I wait for curl to finish

wait ${!}

before I run the next command. When used in a script of many such commands, this gives a nice response like:

http: 200 42824

http: 200 34728

http: 200 35452

Please note that -o in curl needs to be followed by the full path of the file plus the file name. This lets you save files under a sensible naming structure when you download them with curl. Also note that -s and -S used together silence the progress output but still show errors, and that -R tries to set the file timestamp to that of the remote file.

My answer is based on what @pvandenberk originally suggested, but in addition it actually saves the file somewhere, instead of merely directing to /dev/null.

answered Oct 7, 2017 at 7:32

$ curl -kv https://www.example.org 2>&1 | grep -i 'HTTP/1.1 ' | awk '{print $3}'| sed -e 's/^[ \t]*//'

- 2>&1: error is stored in output for parsing

- grep: filter the response code line from output

- awk: filters out the response code from response code line

- sed: removes any leading white spaces

answered Apr 14, 2021 at 4:44

In Windows PowerShell, curl is an alias for the Invoke-WebRequest cmdlet rather than curl.exe, so there is another way.

Just type the following:

(Invoke-WebRequest -Uri https://your.website).StatusCode

answered Jul 14, 2022 at 10:30

In Windows PowerShell:

curl https://www.example.org -Method HEAD

It’s really just an alias for Invoke-WebRequest though.

answered Sep 14, 2022 at 18:24

Kebman

cURL is a command line tool and a library which can be used to receive and send data between a client and a server or any two machines connected over the internet. It supports a wide range of protocols like HTTP, FTP, IMAP, LDAP, POP3, SMTP and many more.

Due to its versatile nature, cURL is used in many applications and for many use cases. For example, the command line tool can be used for downloading files, testing APIs, and debugging network problems. In this article, we shall look at how you can use the cURL command line tool to perform various tasks.

Contents

- 1 Install cURL

- 1.1 Linux

- 1.2 MacOS

- 1.3 Windows

- 2 cURL basic usage

- 3 Downloading Files with cURL

- 4 Anatomy of a HTTP request/response

- 5 Following redirects with cURL

- 6 Viewing response headers with cURL

- 7 Viewing request headers and connection details

- 8 Silencing errors

- 9 Setting HTTP request headers with cURL

- 10 Making POST requests with cURL

- 11 Submitting JSON data with cURL

- 12 Changing the request method

- 13 Replicating browser requests with cURL

- 14 Making cURL fail on HTTP errors

- 15 Making authenticated requests with cURL

- 16 Testing protocol support with cURL

- 17 Setting the Host header and cURL’s --resolve option

- 18 Resolve domains to IPv4 and IPv6 addresses

- 19 Disabling cURL’s certificate checks

- 20 Troubleshooting website issues with “cURL timing breakdown”

- 21 cURL configuration files

- 22 Conclusion

Install cURL

Linux

Most Linux distributions have cURL installed by default. To check whether it is installed on your system or not, type curl in your terminal window and press enter. If it isn’t installed, it will show a “command not found” error. Use the commands below to install it on your system.

For Ubuntu/Debian based systems use:

sudo apt update
sudo apt install curl

For CentOS/RHEL systems, use:

sudo yum install curl

On the other hand, for Fedora systems, you can use the command:

sudo dnf install curl

MacOS

MacOS comes with cURL preinstalled, and it receives updates whenever Apple releases updates for the OS. However, in case you want to install the most recent version of cURL, you can install the curl Homebrew package. Once you install Homebrew, you can install it with:

brew install curl

Windows

For Windows 10 version 1803 and above, cURL now ships by default in the Command Prompt, so you can use it directly from there. For older versions of Windows, the cURL project has Windows binaries. Once you download the ZIP file and extract it, you will find a folder named curl-<version number>-mingw. Move this folder into a directory of your choice. In this article, we will assume our folder is named curl-7.62.0-win64-mingw, and we have moved it under C:\.

Next, you should add cURL’s bin directory to the Windows PATH environment variable, so that Windows can find it when you type curl in the command prompt. For this to work, you need to follow these steps:

- Open the “Advanced System Properties” dialog by running systempropertiesadvanced from the Windows Run dialog (Windows key + R).
- Click on the “Environment Variables” button.
- Double-click on “Path” from the “System variables” section, and add the path C:\curl-7.62.0-win64-mingw\bin. For Windows 10, you can do this with the “New” button on the right. On older versions of Windows, you can type in ;C:\curl-7.62.0-win64-mingw\bin (notice the semicolon at the beginning) at the end of the “Value” text box.

Once you complete the above steps, you can type curl to check if this is working. If everything went well, you should see the following output:

C:\Users\Administrator>curl
curl: try 'curl --help' or 'curl --manual' for more information

cURL basic usage

The basic syntax of using cURL is simply:

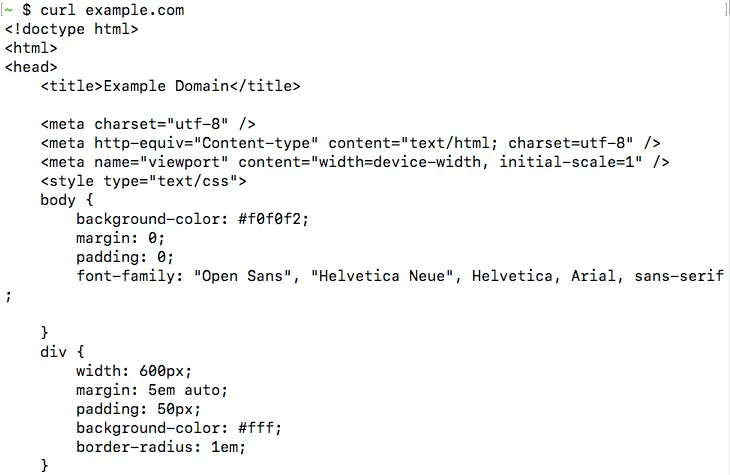

curl <url>

This fetches the content available at the given URL, and prints it onto the terminal. For example, if you run curl example.com, you should be able to see the HTML page printed, as shown below:

This is the most basic operation cURL can perform. In the next few sections, we will look into the various command line options accepted by cURL.

Downloading Files with cURL

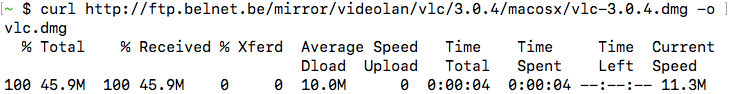

As we saw, cURL directly downloads the URL content and prints it to the terminal. However, if you want to save the output as a file, you can specify a filename with the -o option, like so:

curl -o vlc.dmg http://ftp.belnet.be/mirror/videolan/vlc/3.0.4/macosx/vlc-3.0.4.dmg

In addition to saving the contents, cURL switches to displaying a nice progress bar with download statistics, such as the speed and the time taken:

Instead of providing a file name manually, you can let cURL figure out the filename with the -O option. So, if you want to save the above URL to the file vlc-3.0.4.dmg, you can simply use:

curl -O http://ftp.belnet.be/mirror/videolan/vlc/3.0.4/macosx/vlc-3.0.4.dmg

Bear in mind that when you use the -o or the -O options and a file of the same name exists, cURL will overwrite it.

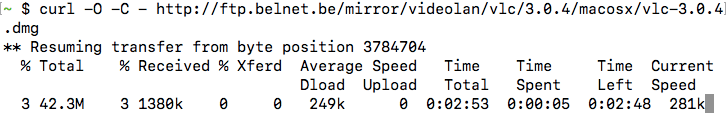

If you have a partially downloaded file, you can resume the file download with the -C - option, as shown below:

curl -O -C - http://ftp.belnet.be/mirror/videolan/vlc/3.0.4/macosx/vlc-3.0.4.dmg

Like most other command line tools, you can combine different options together. For example, in the above command, you could combine -O -C - and write it as -OC - .

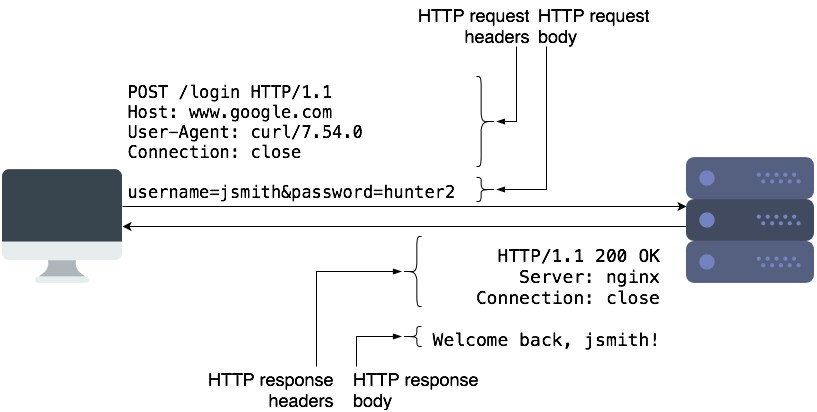

Anatomy of a HTTP request/response

Before we dig deeper into the features supported by cURL, we will discuss a little bit about HTTP requests and responses. If you are familiar with these concepts, you can skip directly to the other sections.

To request a resource such as a webpage, or to submit some data to a server, a HTTP client (such as a browser or cURL) makes a HTTP request to the server. The server responds with a HTTP response, which contains the “contents” of that page.

HTTP requests contain the request method, URL, some headers, and some optional data as part of the “request body”. The request method controls how a certain request should be processed. The most common types of request methods are “GET” and “POST”. Typically, we use “GET” requests to retrieve a resource from the server, and “POST” to submit data to the server for processing. “POST” requests typically contain some data in the request body, which the server can use.

HTTP responses are similar and contain the status code, some headers, and a body. The body contains the actual data that clients can display or save to a file. The status code is a 3-digit code which tells the client if the request succeeded or failed, and how it should proceed further. Common status codes are 2xx (success), 3xx (redirect to another page), and 4xx/5xx (for errors).
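As a rough illustration of these status classes, a shell script could map a code to its class as sketched below. The function name status_class and the class labels are made up for this example.

```shell
# Map a three-digit HTTP status code to a coarse class name.
status_class() {
    case "$1" in
        1??) echo informational ;;
        2??) echo success ;;
        3??) echo redirect ;;
        4??) echo client_error ;;
        5??) echo server_error ;;
        *)   echo unknown ;;
    esac
}
```

For instance, `status_class 404` prints `client_error`, and anything that is not a three-digit code starting with 1 to 5 is reported as `unknown`.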

HTTP is an “application layer protocol”, and it runs over another protocol called TCP. TCP takes care of retransmitting any lost data, and ensures that the client and server transmit data at an optimal rate. When you use HTTPS, another protocol called SSL/TLS runs between TCP and HTTP to secure the data.

Most often, we use domain names such as google.com to access websites. Mapping the domain name to an IP address occurs through another protocol called DNS.

You should now have enough background to understand the rest of this article.

Following redirects with cURL

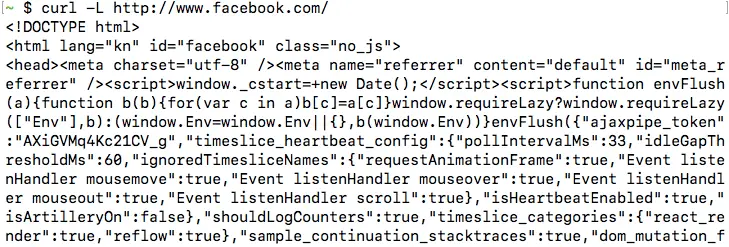

By default, when cURL receives a redirect after making a request, it doesn’t automatically make a request to the new URL. As an example of this, consider the URL http://www.facebook.com. When you make a request to this URL, the server sends a HTTP 3XX redirect to https://www.facebook.com/. However, the response body is otherwise empty. So, if you try this out, you will get an empty output:

If you want cURL to follow these redirects, you should use the -L option. Repeat the request for http://www.facebook.com/ with the -L flag, like so:

curl -L http://www.facebook.com/

Now, you will be able to see the HTML content of the page, similar to the screenshot below. In the next section, we will see how we can verify that there is a HTTP 3XX redirect.

Please bear in mind that cURL can only follow redirects if the server replied with a “HTTP redirect”, which means that the server used a 3XX status code, and it used the “Location” header to indicate the new URL. cURL cannot process JavaScript or HTML-based redirection methods, or the “Refresh” header.

If there is a chain of redirects, the -L option will only follow the redirects up to 50 times. You can control the maximum number of redirects that it will follow with the --max-redirs flag.

curl -L --max-redirs 700 example.com

If you set this flag to -1, it will follow the redirects endlessly.

curl -L --max-redirs -1 example.com

Viewing response headers with cURL

When debugging issues with a website, you may want to view the HTTP response headers sent by the server. To enable this feature, you can use the -i option.

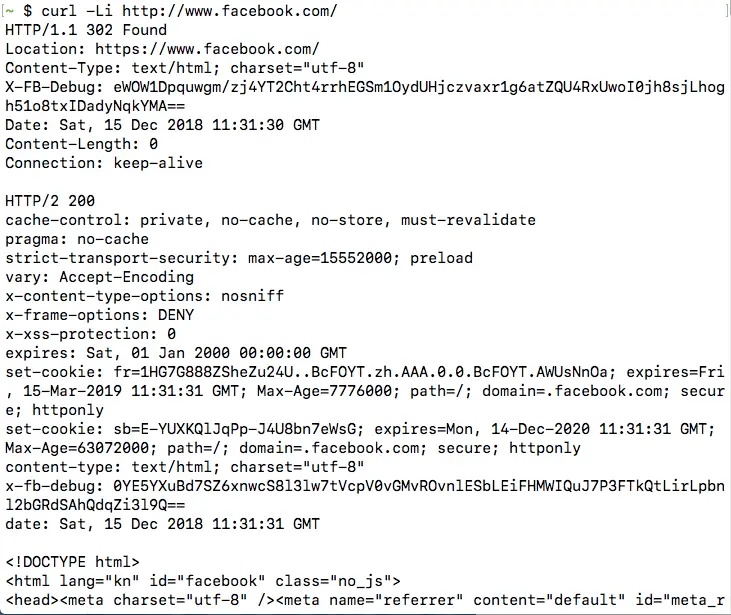

Let us continue with our previous example, and confirm that there is indeed a HTTP 3XX redirect when you make a HTTP request to http://www.facebook.com/, by running:

curl -L -i http://www.facebook.com/

Notice that we have also used -L so that cURL can follow redirects. It is also possible to combine these two options and write them as -iL or -Li instead of -L -i.

Once you run the command, you will be able to see the HTTP 3XX redirect, as well as the HTTP 200 OK response for the page after following the redirect:

If you use the -o/-O option in combination with -i, the response headers and body will be saved into a single file.
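When only one header is of interest, the header output can be filtered in a script. The following is a sketch under the assumption that the headers arrive on standard input (for example from `curl -sI <url>`); header_value is a hypothetical helper name.

```shell
# Print the value of a response header, matched case-insensitively.
# $1: header name; the raw headers are read from stdin.
header_value() {
    awk -v name="$1" '
        BEGIN { name = tolower(name) ":" }
        tolower(substr($0, 1, length(name))) == name {
            value = substr($0, length(name) + 1)
            sub(/^[ \t]+/, "", value)   # drop spaces after the colon
            sub(/\r$/, "", value)       # drop the trailing carriage return
            print value
        }'
}
```

For example, `curl -sI http://www.facebook.com/ | header_value location` would print the redirect target, if the server sends one.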

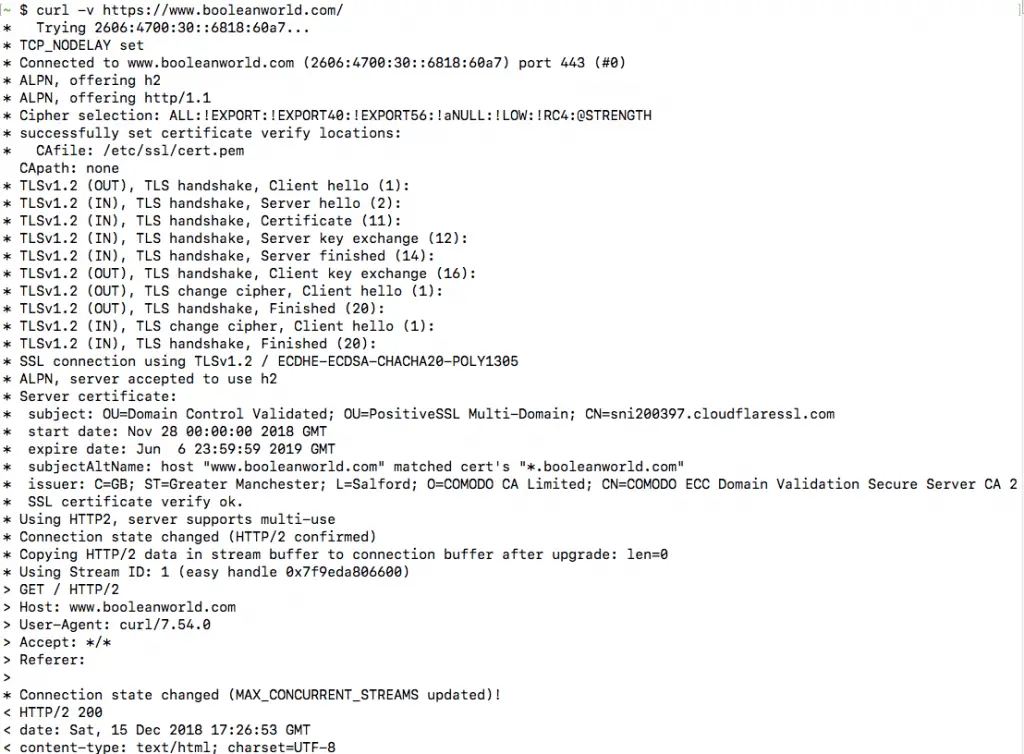

Viewing request headers and connection details

In the previous section, we have seen how you can view HTTP response headers using cURL. However, sometimes you may want to view more details about a request, such as the request headers sent and the connection process. cURL offers the -v flag (called “verbose mode”) for this purpose, and it can be used as follows:

curl -v https://www.booleanworld.com/

The output contains request data (marked with >), response headers (marked with <) and other details about the request, such as the IP used and the SSL handshake process (marked with *). The response body is also available below this information. (However, this is not visible in the screenshot below).

Most often, we aren’t interested in the response body. You can simply hide it by “saving” the output to the null device, which is /dev/null on Linux and MacOS and NUL on Windows:

curl -vo /dev/null https://www.booleanworld.com/ # Linux/MacOS
curl -vo NUL https://www.booleanworld.com/ # Windows

Silencing errors

Previously, we have seen that cURL displays a progress bar when you save the output to a file. Unfortunately, the progress bar might not be useful in all circumstances. As an example, if you hide the output with -vo /dev/null, a progress bar appears which is not at all useful.

You can hide all these extra outputs by using the -s option. If we continue with our previous example but hide the progress bar, then the commands would be:

curl -svo /dev/null https://www.booleanworld.com/ # Linux/MacOS
curl -svo NUL https://www.booleanworld.com/ # Windows

The -s option is a bit aggressive, though, since it even hides error messages. If you want to hide the progress bar but still view any errors, you can combine it with the -S option.

So, if you are trying to save cURL output to a file but simply want to hide the progress bar, you can use:

curl -sSvo file.html https://www.booleanworld.com/

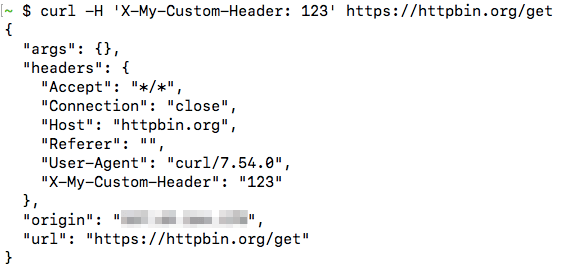

Setting HTTP request headers with cURL

When testing APIs, you may need to set custom headers on the HTTP request. cURL has the -H option which you can use for this purpose. If you want to send the custom header X-My-Custom-Header with the value of 123 to https://httpbin.org/get, you should run:

curl -H 'X-My-Custom-Header: 123' https://httpbin.org/get

(httpbin.org is a very useful website that allows you to view details of the HTTP request that you sent to it.)

The data returned by the URL shows that this header was indeed set:

You can also override any default headers sent by cURL such as the “User-Agent” or “Host” headers. The HTTP client (in our case, cURL) sends the “User-Agent” header to tell the server about the type and version of the client used. Also, the client uses the “Host” header to tell the server about the site it should serve. This header is needed because a web server can host multiple websites at a single IP address.

Also, if you want to set multiple headers, you can simply repeat the -H option as required.

curl -H 'User-Agent: Mozilla/5.0' -H 'Host: www.google.com' ...

However, cURL does have certain shortcuts for frequently used flags. You can set the “User-Agent” header with the -A option:

curl -A Mozilla/5.0 http://httpbin.org/get

The “Referer” header tells the server which page referred the client to the current request. Browsers typically send it when requesting JavaScript or images linked to a page, or when following redirects. If you want to set a “Referer” header, you can use the -e flag:

curl -e http://www.google.com/ http://httpbin.org/get

Otherwise, if you are following a set of redirects, you can simply use -e ';auto' and cURL will take care of setting the “Referer” header by itself.

Making POST requests with cURL

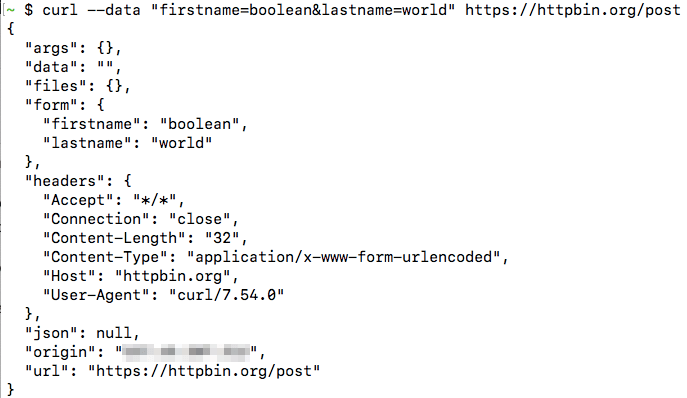

By default, cURL sends GET requests, but you can also use it to send POST requests with the -d or --data option. All the fields must be given as key=value pairs separated by the ampersand (&) character. As an example, you can make a POST request to httpbin.org with some parameters:

curl --data "firstname=boolean&lastname=world" https://httpbin.org/post

From the output, you can easily tell that we posted two parameters (this appears under the “form” key):

Any special characters such as @, %, = or spaces in the value should be URL-encoded manually. So, if you wanted to submit a parameter “email” with the value “test@example.com”, you would use:

curl --data "email=test%40example.com" https://httpbin.org/post

Alternatively, you can just use --data-urlencode to handle this for you. If you wanted to submit two parameters, email and name, this is how you should use the option:

curl --data-urlencode "email=test@example.com" --data-urlencode "name=Boolean World" https://httpbin.org/post
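To make the encoding step concrete, here is a rough shell sketch of the percent-encoding applied to each value. It is not cURL's implementation; the function name urlencode is invented for the example, and it assumes ASCII input only.

```shell
# Percent-encode a string, leaving only unreserved characters as-is.
# The body runs in a subshell so its variables do not leak to the caller.
urlencode() (
    s=$1
    out=
    while [ -n "$s" ]; do
        c=${s%"${s#?}"}    # first character of s
        s=${s#?}           # rest of s
        case "$c" in
            [A-Za-z0-9._~-]) out="$out$c" ;;
            *) out="$out$(printf '%%%02X' "'$c")" ;;
        esac
    done
    printf '%s\n' "$out"
)
```

With this sketch, `urlencode 'test@example.com'` prints `test%40example.com`, matching the manually encoded value used earlier.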

If the --data parameter is too big to type on the terminal, you can save it to a file and then submit it using @, like so:

curl --data @params.txt example.com

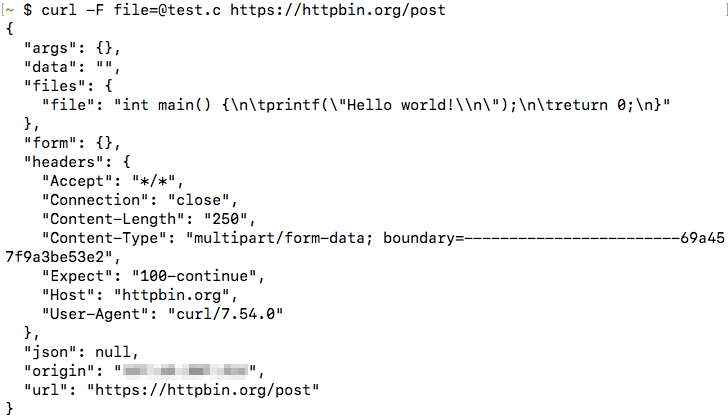

So far, we have seen how you can make POST requests using cURL. If you want to upload files using a POST request, you can use the -F (“form”) option. Here, we will submit the file test.c, under the parameter name file:

curl -F file=@test.c https://httpbin.org/post

This shows the content of the file, showing that it was submitted successfully:

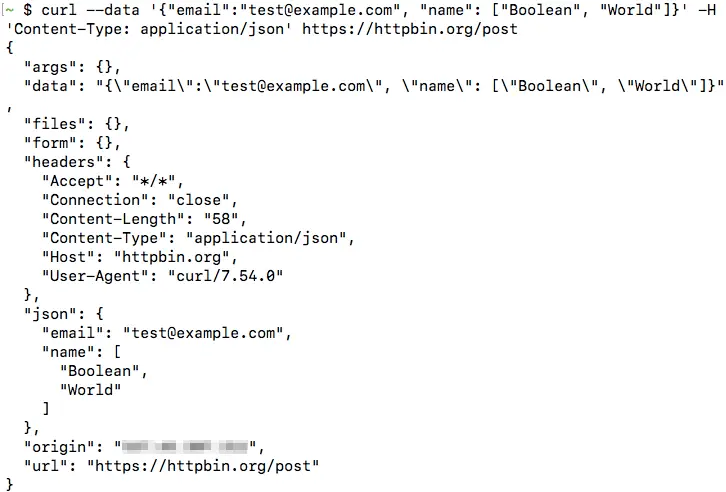

Submitting JSON data with cURL

In the previous section, we have seen how you can submit POST requests using cURL. You can also submit JSON data using the --data option. However, most servers expect to receive a POST request with key-value pairs, similar to the ones we have discussed previously. So, you need to add an additional header called ‘Content-Type: application/json’ so that the server understands it’s dealing with JSON data and handles it appropriately. Also, you don’t need to URL-encode data when submitting JSON.

So if you had the following JSON data and want to make a POST request to https://httpbin.org/post:

{
  "email": "test@example.com",
  "name": ["Boolean", "World"]
}

Then, you can submit the data with:

curl --data '{"email":"test@example.com", "name": ["Boolean", "World"]}' -H 'Content-Type: application/json' https://httpbin.org/post

In this case, you can see the data appear under the json value in the httpbin.org output:

You can also save the JSON file, and submit it in the same way as we did previously:

curl --data @data.json -H 'Content-Type: application/json' https://httpbin.org/post

Changing the request method

Previously, we have seen how you can send POST requests with cURL. Sometimes, you may need to send a POST request with no data at all. In that case, you can simply change the request method to POST with the -X option, like so:

curl -X POST https://httpbin.org/post

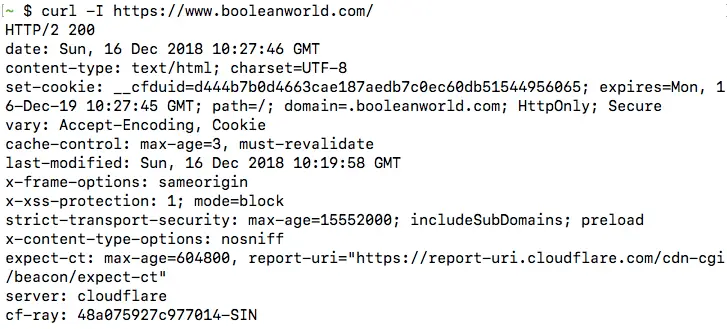

You can also change the request method to anything else, such as PUT, DELETE or PATCH. One notable exception is the HEAD method, which cannot be set with the -X option. The HEAD method is used to check if a document is present on the server, but without downloading the document. To use the HEAD method, use the -I option:

curl -I https://www.booleanworld.com/

When you make a HEAD request, cURL displays all the response headers by default. Servers do not send any content when they receive a HEAD request, so there is nothing after the headers:

Replicating browser requests with cURL

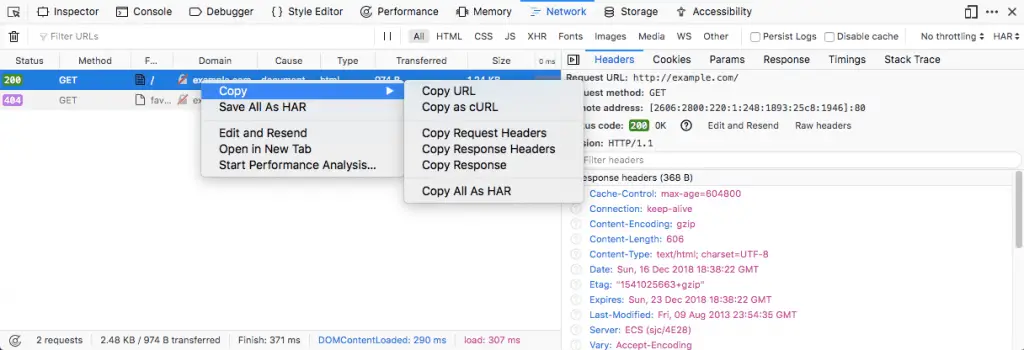

If you want to replicate a request made through your browser through cURL, you can use the Chrome, Firefox and Safari developer tools to get a cURL command to do so.

The steps involved are the same for all platforms and browsers:

- Open developer tools in Firefox/Chrome (typically F12 on Windows/Linux and Cmd+Shift+I on a Mac)

- Go to the network tab

- Select the request from the list, right click it and select “Copy as cURL”

The copied command contains all the headers, request methods, cookies etc. needed to replicate the exact same request. You can paste the command in your terminal to run it.

Making cURL fail on HTTP errors

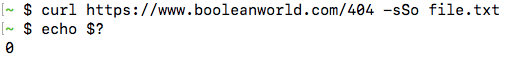

Interestingly, by default cURL doesn’t differentiate between a successful HTTP request (2xx) and a failed HTTP request (4xx/5xx). It returns an exit status of 0 as long as there was no problem connecting to the site and transferring the response. This makes shell scripting difficult, because the exit status alone doesn’t tell you whether the file was downloaded successfully.

You can check this by making a request manually:

curl https://www.booleanworld.com/404 -sSo file.txt

You can see that curl doesn’t print any errors, and the exit status is also zero:

If you want to consider these HTTP errors as well, you can use the -f option, like so:

curl https://www.booleanworld.com/404 -fsSo file.txt

Now, you can see that cURL prints an error and also sets the exit status to 22 to indicate that an error occurred:
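In a shell script, the exit status set by -f can be checked directly. A minimal sketch, assuming a hypothetical download helper; the URL and file name in the usage line are the ones from the example above:

```shell
# Hypothetical helper: download $1 to $2, treating HTTP errors as failures.
download() {
    if curl -fsS -o "$2" "$1"; then
        echo "saved $1 to $2"
    else
        status=$?   # curl's exit status, e.g. 22 for an HTTP error with -f
        echo "download of $1 failed (curl exit status $status)" >&2
        return "$status"
    fi
}

# Usage (needs network access):
#   download https://www.booleanworld.com/404 file.txt || echo "giving up"
```

Returning curl's own exit status lets the calling script distinguish an HTTP error (22) from, say, a DNS or connection failure.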

Making authenticated requests with cURL

Some webpages and APIs require authentication with a username and password. There are two ways to do this. You can specify the username and password with the -u option:

curl -u boolean:world https://example.com/

Alternatively, you can simply add it to the URL itself, with the <username>:<password>@<host> syntax, as shown:

curl https://boolean:[email protected]/

With both of these methods, curl uses “Basic” authentication with the server.
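With Basic authentication, the credentials travel in an Authorization header as the Base64 encoding of username:password. The sketch below builds that header by hand to show what -u does for you. The basic_auth_header name is an assumption, and the sketch relies on the base64 utility being installed:

```shell
# Hypothetical helper: print the header that Basic authentication sends.
# $1 = username, $2 = password.
basic_auth_header() {
    printf 'Authorization: Basic %s\n' "$(printf '%s:%s' "$1" "$2" | base64)"
}

# Usage (needs network access); equivalent to: curl -u boolean:world https://example.com/
#   curl -H "$(basic_auth_header boolean world)" https://example.com/
```

Base64 is an encoding, not encryption, so Basic authentication should only be used over HTTPS.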

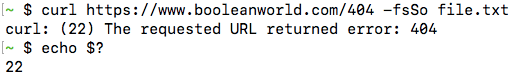

Testing protocol support with cURL

Due to the wide range of protocols supported by cURL, you can even use it to test protocol support. If you want to check if a site supports a certain version of SSL, you can use the --sslv<version> or --tlsv<version> flags. For example, if you want to check if a site supports TLS v1.2, you can use:

curl -v --tlsv1.2 https://www.booleanworld.com/

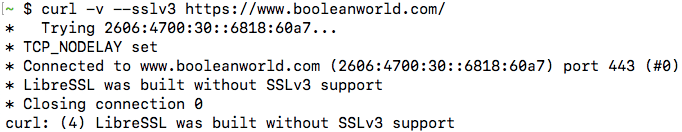

The request takes place normally, which means that the site supports TLSv1.2. Now, let us check if the site supports SSL v3:

curl -v --sslv3 https://www.booleanworld.com/

This command throws a handshake_failed error, because the server doesn’t support this version of SSL.

Please note that, depending on your system and the library version/configuration, some of these version options may not work. The above output was taken from Ubuntu 16.04’s cURL. However, if you try this with cURL on macOS 10.14, it gives an error:

You can also test for HTTP protocol versions in the same way, by using the flags --http1.0, --http1.1 or --http2.
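In current curl versions, --tlsv1.2 sets the minimum version curl will accept, so a check for one specific version also needs --tls-max. A minimal sketch, assuming a hypothetical check_tls_versions helper and a curl build recent enough to know --tls-max; as noted above, some versions may be unavailable depending on how your curl was built:

```shell
# Hypothetical helper: try each TLS version against the URL in $1.
check_tls_versions() {
    for v in 1.0 1.1 1.2 1.3; do
        # Pin both the minimum (--tlsvX) and the maximum (--tls-max) version.
        if curl -s -o /dev/null "--tlsv$v" --tls-max "$v" "$1"; then
            echo "TLS $v: ok"
        else
            echo "TLS $v: failed (unsupported by the server or by this curl build)"
        fi
    done
}

# Usage (needs network access):
#   check_tls_versions https://www.booleanworld.com/
```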

Setting the Host header and cURL’s --resolve option

Previously, we discussed how a web server chooses to serve different websites to visitors depending upon the “Host” header. Changing the “Host” header is therefore a useful way to check that your website has virtual hosting configured correctly. As an example, say you have a local server at 192.168.0.1 with two websites configured, namely example1.com and example2.com. Now, you can test if everything is configured correctly by setting the Host header and checking if the correct contents are served:

curl -H 'Host: example1.com' http://192.168.0.1/

curl -H 'Host: example2.com' http://192.168.0.1/

Unfortunately, this doesn’t work so well for websites using HTTPS. A single server may be configured to serve multiple websites, with each website using its own SSL/TLS certificate. Since SSL/TLS takes place at a lower level than HTTP, clients such as cURL have to tell the server which website they are trying to access at the SSL/TLS level (a mechanism known as Server Name Indication, or SNI), so that the server can pick the right certificate. By default, cURL always sends this information, taking the name from the URL.

However, if you want to send a request to a specific IP like the above example, the server may pick a wrong certificate and that will cause the SSL/TLS verification to fail. The Host header only works at the HTTP level and not the SSL/TLS level.

To avoid the problem described above, you can use the --resolve flag. It takes an argument of the form <host>:<port>:<address> and sends requests for that host and port to the IP address of your choice, while still sending the website name correctly at both the SSL/TLS and HTTP levels.

Let us consider the previous example. If you were using HTTPS and wanted to send the request to the local server 192.168.0.1, you can use:

curl https://example1.com/ --resolve example1.com:443:192.168.0.1

It also works well for HTTP. Suppose your HTTP server is listening on port 8080. You can use either the --resolve flag or set the Host header and the port manually, like so:

curl http://192.168.0.1:8080/ -H 'Host: example1.com:8080'

curl http://example1.com:8080/ --resolve example1.com:8080:192.168.0.1

The two commands mentioned above are equivalent.
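Because the --resolve argument has the form host:port:address, it is easy to get the order wrong. A small sketch, assuming a hypothetical curl_via helper that assembles the argument for you:

```shell
# Hypothetical helper: send a request for host $1 on port $2 to IP address $3.
# Any remaining arguments (the URL and other options) are passed on to curl.
curl_via() {
    host=$1 port=$2 ip=$3
    shift 3
    curl --resolve "$host:$port:$ip" "$@"
}

# Usage (needs a server at that address):
#   curl_via example1.com 443 192.168.0.1 https://example1.com/
```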

Resolve domains to IPv4 and IPv6 addresses

Sometimes, you may want to check if a site is reachable over both IPv4 and IPv6. You can force cURL to connect to either the IPv4 or the IPv6 version of your site by using the -4 or -6 flags.

Please bear in mind that a website can be reached over IPv4 and IPv6 only if:

- There are appropriate DNS records for the website that link it to IPv4 and IPv6 addresses.

- You have IPv4 and IPv6 connectivity on your system.

For example, if you want to check if you can reach the website icanhazip.com over IPv6, you can use:

curl -6 https://icanhazip.com/

If the site is reachable over IPv6, you should get your own IPv6 address in the output. This website returns the public IP address of any client that connects to it. So, depending on the protocol used, it displays an IPv4 or IPv6 address.

You can also use the -v option along with -4 and -6 to get more details.

Disabling cURL’s certificate checks

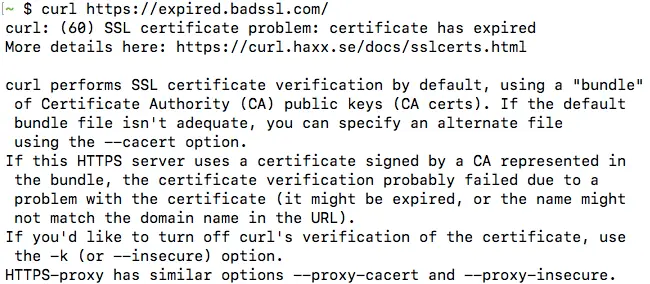

By default, cURL checks certificates when it connects over HTTPS. However, it is often useful to disable the certificate checking, when you are trying to make requests to sites using self-signed certificates, or if you need to test a site that has a misconfigured certificate.

To disable certificate checks, use the -k option. We will test this by making a request to expired.badssl.com, which is a website using an expired SSL certificate.

curl -k https://expired.badssl.com/

With the -k option, the certificate checks are skipped. So, cURL downloads the page and displays the response body successfully. On the other hand, if you don’t use the -k option, you will get an error, similar to the one below:

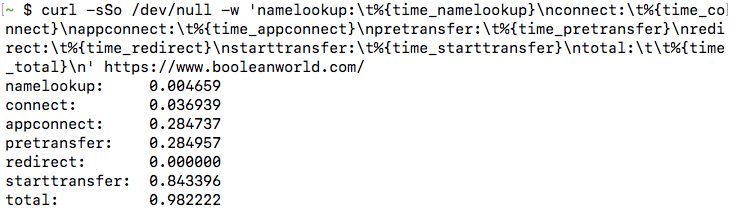

Troubleshooting website issues with “cURL timing breakdown”

You may run into situations where a website is very slow for you, and you would like to dig deeper into the issue. You can make cURL display details of the request, such as the time taken for DNS resolution, establishing a connection etc. with the -w option. This is often called a cURL “timing breakdown”.

As an example, if you want to see these details for connecting to https://www.booleanworld.com/, run:

curl https://www.booleanworld.com/ -sSo /dev/null -w 'namelookup:\t%{time_namelookup}\nconnect:\t%{time_connect}\nappconnect:\t%{time_appconnect}\npretransfer:\t%{time_pretransfer}\nredirect:\t%{time_redirect}\nstarttransfer:\t%{time_starttransfer}\ntotal:\t\t%{time_total}\n'

(If you are running this from a Windows system, change the /dev/null to NUL).

You will get some output similar to this:

Each of these values is in seconds, and here is what each value represents:

- namelookup — The time required for DNS resolution.

- connect — The time required to establish the TCP connection.

- appconnect — This is the time taken to establish connections for any layers between TCP and the application layer, such as SSL/TLS. In our case, the application layer is HTTP. Also, if there is no such intermediate layer (such as when there is a direct HTTP request), this time will always be 0.

- pretransfer — This is the time taken from the start to when the transfer of the file is just about to begin.

- redirect — This is the total time taken to process any redirects.

- starttransfer — Time it took from the start to when the first byte is about to be transferred.

- total — The total time taken for cURL to complete the entire process.

As an example, say you are facing delays connecting to a website and you notice that the “namelookup” value is too high. As this points to slow DNS resolution (often a problem with your ISP’s DNS server), you may start looking into why the DNS lookup is so slow, and switch to another DNS server if needed.
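Apart from redirect, these timers are cumulative: each one is measured from the start of the request. To see how long each phase took on its own, you can subtract neighbouring values. A minimal sketch for a request without redirects, assuming a hypothetical phase_durations helper and awk being available:

```shell
# Hypothetical helper: split curl's cumulative timers into per-phase durations.
# Reads one line with six space-separated values, in seconds:
#   namelookup connect appconnect pretransfer starttransfer total
phase_durations() {
    LC_ALL=C awk '{
        tls = ($3 > 0) ? $3 - $2 : 0   # appconnect is 0 for plain HTTP
        printf "dns:      %.3f\n", $1
        printf "tcp:      %.3f\n", $2 - $1
        printf "tls:      %.3f\n", tls
        printf "wait:     %.3f\n", $5 - $4
        printf "download: %.3f\n", $6 - $5
    }'
}

# Usage (needs network access):
#   curl -sSo /dev/null -w '%{time_namelookup} %{time_connect} %{time_appconnect} %{time_pretransfer} %{time_starttransfer} %{time_total}\n' https://www.booleanworld.com/ | phase_durations
```

Here "wait" is the time between sending the request and receiving the first byte, which is usually the part the server itself is responsible for.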

cURL configuration files

Sometimes, you may want to make all cURL requests use the same options. Passing these options by hand isn’t a feasible solution, so cURL allows you to specify options in a configuration file.

The default configuration file is located at ~/.curlrc on Linux/macOS and %APPDATA%\_curlrc on Windows. Inside this file, you can specify any options that you need, such as:

# Always use IPv4
-4
# Always show verbose output
-v
# When following a redirect, automatically set the previous URL as referer.
referer = ";auto"
# Wait 60 seconds before timing out.
connect-timeout = 60

After creating the above file, try making a request with curl example.com. You will find that these options have taken effect.

If you want to use a custom configuration file instead of the default one, you can use the -K option to point curl to your configuration file. As an example, if you have a configuration file called config.txt, then you can use it with:

curl -K config.txt example.com
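Creating and using a custom configuration file can be sketched as follows. The file path and the curl_with_config helper are assumptions; the options are a subset of the ones shown earlier:

```shell
# Write a custom configuration file (the path is an assumption for this sketch).
config_file="${TMPDIR:-/tmp}/curl-config.txt"

cat > "$config_file" <<'EOF'
# Always use IPv4
-4
# Wait at most 60 seconds for the connection to be established
connect-timeout = 60
EOF

# Hypothetical helper: run curl with the custom configuration file.
curl_with_config() {
    curl -K "$config_file" "$@"
}

# Usage (needs network access):
#   curl_with_config example.com
```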

Conclusion

In this article, we have covered the most common uses of the cURL command. Of course, this article only scratches the surface and cURL can do a whole lot of other things. You can type man curl in your terminal or just visit this page to see the man page which lists all the options.

--abstract-unix-socket <path> Example: curl --abstract-unix-socket socketpath https://example.com

--alt-svc <file name> Example: curl --alt-svc svc.txt https://example.com

--anyauth Example: curl --anyauth --user me:pass https://example.com

-a, --append Example: curl --upload-file local --append ftp://example.com/

--aws-sigv4 <provider1[:provider2[:region[:service]]]> Example: curl --aws-sigv4 "aws:amz:east-2:es" --user "key:secret" https://example.com

--basic Example: curl -u name:password --basic https://example.com

--cacert <file> Example: curl --cacert CA-file.txt https://example.com

--capath <dir> Example: curl --capath /local/directory https://example.com

--cert-status Example: curl --cert-status https://example.com

--cert-type <type> Supported types: PEM (default), DER, ENG and P12.

Example: curl --cert-type ENG --cert file https://example.com

-E, --cert <certificate[:password]> Example: curl --cert certfile --key keyfile https://example.com

--ciphers <list of ciphers> Example: curl --ciphers ECDHE-ECDSA-AES256-CCM8 https://example.com

--compressed-ssh Example: curl --compressed-ssh sftp://example.com/

--compressed Example: curl --compressed https://example.com

-K, --config <file> Example: curl --config file.txt https://example.com

--connect-timeout <fractional seconds> Example: curl --connect-timeout 30 https://example.com

--connect-to <HOST1:PORT1:HOST2:PORT2> Example: curl --connect-to example.com:443:example.net:8443 https://example.com

-C, --continue-at <offset> Example: curl -C 400 https://example.com

-c, --cookie-jar <filename> Example: curl -c store.txt https://example.com

-b, --cookie <data|filename> Example: curl -b cookiefile https://example.com

--create-dirs Used together with the --output option.

Example: curl --create-dirs --output local/dir/file https://example.com

--create-file-mode <mode> Example: curl --create-file-mode 0777 -T localfile sftp://example.com/new

--crlf Example: curl --crlf -T file ftp://example.com/

--crlfile <file> Example: curl --crlfile revoke.txt https://example.com

--curves <algorithm list> Example: curl --curves X25519 https://example.com

--data-ascii <data> Alias for -d, --data.

Example: curl --data-ascii @file https://example.com

--data-binary <data> Example: curl --data-binary @filename https://example.com

--data-raw <data> Like -d, --data, but the @ character is not treated differently from the rest.

Example: curl --data-raw "@[email protected]@" https://example.com

--data-urlencode <data> Like -d, --data, but performs URL encoding.

Example: curl --data-urlencode name=val https://example.com

-d, --data <data> Example: curl -d "name=curl" https://example.com

--delegation <LEVEL> Example: curl --delegation "always" https://example.com

--digest Example: curl -u name:password --digest https://example.com

--disable-eprt Example: curl --disable-eprt ftp://example.com/

--disable-epsv Example: curl --disable-epsv ftp://example.com/

-q, --disable Example: curl -q https://example.com

--disallow-username-in-url Example: curl --disallow-username-in-url https://example.com

--dns-interface <interface> Example: curl --dns-interface eth0 https://example.com

--dns-ipv4-addr <address> Example: curl --dns-ipv4-addr 10.1.2.3 https://example.com

--dns-ipv6-addr <address> Example: curl --dns-ipv6-addr 2a04:4e42::561 https://example.com

--dns-servers <addresses> Example: curl --dns-servers 192.168.0.1,192.168.0.2 https://example.com

--doh-cert-status Same as --cert-status, but for DNS-over-HTTPS.

Example: curl --doh-cert-status --doh-url https://doh.server https://example.com

--doh-insecure Same as -k, --insecure, but for DoH.

Example: curl --doh-insecure --doh-url https://doh.server https://example.com

--doh-url <URL> Example: curl --doh-url https://doh.server https://example.com

-D, --dump-header <filename> Example: curl --dump-header store.txt https://example.com

--egd-file <file> Example: curl --egd-file /path/here https://example.com

--engine <name> Example: curl --engine flavor https://example.com

--etag-compare <file> Example: curl --etag-compare etag.txt https://example.com

--etag-save <file> Example: curl --etag-save etag.txt https://example.com

--expect100-timeout <seconds> Example: curl --expect100-timeout 2.5 -T file https://example.com

--fail-early Example: curl --fail-early https://example.com https://two.example

--fail-with-body Example: curl --fail-with-body https://example.com

-f, --fail Example: curl --fail https://example.com

--false-start Example: curl --false-start https://example.com

--form-string <name=string> Like -F, --form, but the value strings are processed literally.

Example: curl --form-string "data" https://example.com

-F, --form <name=content> The @ sign forces the content to be a file. The < sign extracts only the content part of the file.

Example: curl --form "name=curl" --form "[email protected]" https://example.com

--ftp-account <data> Example: curl --ftp-account "account_data" ftp://example.com/

--ftp-alternative-to-user <command> Example: curl --ftp-alternative-to-user "U53r" ftp://example.com

--ftp-create-dirs Example: curl --ftp-create-dirs -T file ftp://example.com/dirs/one/file

--ftp-method <method> Available methods: multicwd, nocwd, and singlecwd.

Example: curl --ftp-method multicwd ftp://example.com/dir1/dir2/file

--ftp-pasv Example: curl --ftp-pasv ftp://example.com/

-P, --ftp-port <address> Example: curl -P eth0 ftp://example.com/

--ftp-pret Example: curl --ftp-pret ftp://example.com/

--ftp-skip-pasv-ip Example: curl --ftp-skip-pasv-ip ftp://example.com/

--ftp-ssl-ccc-mode <active/passive> Example: curl --ftp-ssl-ccc-mode active --ftp-ssl-ccc ftps://example.com/

--ftp-ssl-ccc Example: curl --ftp-ssl-ccc ftps://example.com/

--ftp-ssl-control Example: curl --ftp-ssl-control ftp://example.com

-G, --get Example: curl --get -d "tool=curl" -d "age=old" https://example.com

-g, --globoff Example: curl -g "https://example.com/{[]}}}}"

--happy-eyeballs-timeout-ms <milliseconds> Example: curl --happy-eyeballs-timeout-ms 500 https://example.com

--haproxy-protocol Example: curl --haproxy-protocol https://example.com

-I, --head Example: curl -I https://example.com

-H, --header <header/@file> Example: curl -H "X-First-Name: Joe" https://example.com

-h, --help <category> The category all lists all the available options.

Example: curl --help all

--hostpubmd5 <md5> Example: curl --hostpubmd5 e5c1c49020640a5ab0f2034854c321a8 sftp://example.com/

--hsts <file name> Example: curl --hsts cache.txt https://example.com

--http0.9 Example: curl --http0.9 https://example.com

-0, --http1.0 Example: curl --http1.0 https://example.com

--http1.1 Example: curl --http1.1 https://example.com

--http2-prior-knowledge Example: curl --http2-prior-knowledge https://example.com

--http2 Example: curl --http2 https://example.com

--http3 Example: curl --http3 https://example.com

--ignore-content-length Example: curl --ignore-content-length https://example.com

-i, --include Example: curl -i https://example.com

-k, --insecure Example: curl --insecure https://example.com

--interface <name> Example: curl --interface eth0 https://example.com

-4, --ipv4 Example: curl --ipv4 https://example.com

-6, --ipv6 Example: curl --ipv6 https://example.com

-j, --junk-session-cookies Example: curl --junk-session-cookies -b cookies.txt https://example.com

--keepalive-time <seconds> Example: curl --keepalive-time 30 https://example.com

--key-type <type> Supported types: PEM (default), DER, and ENG.

Example: curl --key-type ENG --key here https://example.com

--key <key> Example: curl --cert certificate --key here https://example.com

--krb <level> Available levels: clear, safe, confidential, and private (default).

Example: curl --krb clear ftp://example.com/

--libcurl <file> Example: curl --libcurl client.c https://example.com

--limit-rate <speed> Example: curl --limit-rate 100K https://example.com

-l, --list-only Example: curl --list-only ftp://example.com/dir/

--local-port <num/range> Example: curl --local-port 1000-3000 https://example.com

--location-trusted Like -L, --location, but enables you to send name and password to all redirections.

Example: curl --location-trusted -u user:pass https://example.com

-L, --location Example: curl -L https://example.com

--login-options <options> Example: curl --login-options 'AUTH=*' imap://example.com

--mail-auth <address> Example: curl --mail-auth [email protected] -T mail smtp://example.com/

--mail-from <address> Example: curl --mail-from [email protected] -T mail smtp://example.com/

--mail-rcpt-allowfails Example: curl --mail-rcpt-allowfails --mail-rcpt [email protected] smtp://example.com

--mail-rcpt <address> Example: curl --mail-rcpt [email protected] smtp://example.com

-M, --manual Example: curl --manual

--max-filesize <bytes> Example: curl --max-filesize 500K https://example.com

--max-redirs <num> Applies when --location is active.

Example: curl --max-redirs 3 --location https://example.com

-m, --max-time <fractional seconds> Example: curl --max-time 5.52 https://example.com

--metalink Example: curl --metalink file https://example.com

--negotiate Example: curl --negotiate -u : https://example.com

--netrc-file <filename> Like -n, --netrc, but allows you to specify the file to be used.

Example: curl --netrc-file netrc https://example.com

--netrc-optional Like -n, --netrc, but using netrc is optional.

Example: curl --netrc-optional https://example.com

-n, --netrc Example: curl --netrc https://example.com

-:, --next Example: curl -I https://example.com --next https://example.net/

--no-alpn Example: curl --no-alpn https://example.com

-N, --no-buffer Example: curl --no-buffer https://example.com

--no-keepalive Example: curl --no-keepalive https://example.com

--no-npn Example: curl --no-npn https://example.com

--no-progress-meter Example: curl --no-progress-meter -o store https://example.com

--no-sessionid Example: curl --no-sessionid https://example.com

--noproxy <no-proxy-list> Example: curl --noproxy "www.example" https://example.com

--ntlm-wb Like --ntlm, but also hands authentication to ntlmauth.

Example: curl --ntlm-wb -u user:password https://example.com

--ntlm Example: curl --ntlm -u user:password https://example.com

--oauth2-bearer <token> Example: curl --oauth2-bearer "mF_9.B5f-4.1JqM" https://example.com

--output-dir <dir> Example: curl --output-dir "tmp" -O https://example.com

-o, --output <file> Example: curl -o file https://example.com -o file2 https://example.net

--parallel-immediate Example: curl --parallel-immediate -Z https://example.com -o file1 https://example.com -o file2

--parallel-max <num> Example: curl --parallel-max 100 -Z https://example.com ftp://example.com/

-Z, --parallel Example: curl --parallel https://example.com -o file1 https://example.com -o file2

--pass <phrase> Example: curl --pass secret --key file https://example.com

--path-as-is Leaves /./ and /../ sequences in the URL path untouched.

Example: curl --path-as-is https://example.com/../../etc/passwd

--pinnedpubkey <hashes> Example: curl --pinnedpubkey keyfile https://example.com

--post301 Example: curl --post301 --location -d "data" https://example.com

--post302 Example: curl --post302 --location -d "data" https://example.com

--post303 Example: curl --post303 --location -d "data" https://example.com

--preproxy [protocol://]host[:port] Example: curl --preproxy socks5://proxy.example -x http://http.example https://example.com

-#, --progress-bar Example: curl -# -O https://example.com

--proto-default <protocol> Example: curl --proto-default https ftp.example.com

--proto-redir <protocols> Example: curl --proto-redir =http,https https://example.com

--proto <protocols> Example: curl --proto =http,https,sftp https://example.com

--proxy-anyauth Example: curl --proxy-anyauth --proxy-user user:passwd -x proxy https://example.com

--proxy-basic Example: curl --proxy-basic --proxy-user user:passwd -x proxy https://example.com

--proxy-cacert <file> Same as --cacert, but for HTTPS proxies.

Example: curl --proxy-cacert CA-file.txt -x https://proxy https://example.com

--proxy-capath <dir> Same as --capath, but for HTTPS proxies.

Example: curl --proxy-capath /local/directory -x https://proxy https://example.com

--proxy-cert-type <type> Same as --cert-type, but for HTTPS proxies.

Example: curl --proxy-cert-type PEM --proxy-cert file -x https://proxy https://example.com

--proxy-cert <cert[:passwd]> Same as -E, --cert, but for HTTPS proxies.

Example: curl --proxy-cert file -x https://proxy https://example.com

--proxy-ciphers <list> Same as --ciphers, but for HTTPS proxies.

Example: curl --proxy-ciphers ECDHE-ECDSA-AES256-CCM8 -x https://proxy https://example.com

--proxy-crlfile <file> Same as --crlfile, but for HTTPS proxies.

Example: curl --proxy-crlfile rejects.txt -x https://proxy https://example.com

--proxy-digest Example: curl --proxy-digest --proxy-user user:passwd -x proxy https://example.com

--proxy-header <header/@file> Same as -H, --header, but for proxy communication.

Example: curl --proxy-header "Host:" -x http://proxy https://example.com

--proxy-insecure Same as -k, --insecure, but for HTTPS proxies.

Example: curl --proxy-insecure -x https://proxy https://example.com

--proxy-key-type <type> Same as --key-type, but for HTTPS proxies.

Example: curl --proxy-key-type DER --proxy-key here -x https://proxy https://example.com

--proxy-key <key> Same as --key, but for HTTPS proxies.

Example: curl --proxy-key here -x https://proxy https://example.com

--proxy-negotiate Same as --negotiate, but for proxy communication.

Example: curl --proxy-negotiate --proxy-user user:passwd -x proxy https://example.com

--proxy-ntlm Example: curl --proxy-ntlm --proxy-user user:passwd -x http://proxy https://example.com

--proxy-pass <phrase> Same as --pass, but for HTTPS proxies.

Example: curl --proxy-pass secret --proxy-key here -x https://proxy https://example.com

--proxy-pinnedpubkey <hashes> Example: curl --proxy-pinnedpubkey keyfile https://example.com

--proxy-service-name <name> Example: curl --proxy-service-name "shrubbery" -x proxy https://example.com

--proxy-ssl-allow-beast Same as --ssl-allow-beast, but for HTTPS proxies.

Example: curl --proxy-ssl-allow-beast -x https://proxy https://example.com

--proxy-ssl-auto-client-cert Same as --ssl-auto-client-cert, but for HTTPS proxies.

Example: curl --proxy-ssl-auto-client-cert -x https://proxy https://example.com

--proxy-tls13-ciphers <ciphersuite list> Example: curl --proxy-tls13-ciphers TLS_AES_128_GCM_SHA256 -x proxy https://example.com

--proxy-tlsauthtype <type> Same as --tlsauthtype, but for HTTPS proxies.

Example: curl --proxy-tlsauthtype SRP -x https://proxy https://example.com

--proxy-tlspassword <string> Same as --tlspassword, but for HTTPS proxies.

Example: curl --proxy-tlspassword passwd -x https://proxy https://example.com

--proxy-tlsuser <name> Same as --tlsuser, but for HTTPS proxies.

Example: curl --proxy-tlsuser smith -x https://proxy https://example.com

--proxy-tlsv1 Same as -1, --tlsv1, but for HTTPS proxies.

Example: curl --proxy-tlsv1 -x https://proxy https://example.com

-U, --proxy-user <user:password> Example: curl --proxy-user name:pwd -x proxy https://example.com

-x, --proxy [protocol://]host[:port] Example: curl --proxy http://proxy.example https://example.com

--proxy1.0 <host[:port]> Example: curl --proxy1.0 -x http://proxy https://example.com

-p, --proxytunnel Example: curl --proxytunnel -x http://proxy https://example.com

--pubkey <key> Example: curl --pubkey file.pub sftp://example.com/

-Q, --quote <command> Example: curl --quote "rm file" ftp://example.com/foo

--random-file <file> Example: curl --random-file rubbish https://example.com

-r, --range <range> Example: curl --range 40-80 https://example.com

--raw Example: curl --raw https://example.com

-e, --referer <URL> Example: curl --referer "https://test.example" https://example.com

-J, --remote-header-name Example: curl -OJ https://example.com/file

--remote-name-all Applies the -O, --remote-name option to all the URLs.

Example: curl --remote-name-all ftp://example.com/file1 ftp://example.com/file2

-O, --remote-name Example: curl -O https://example.com/filename

-R, --remote-time Example: curl --remote-time -o foo https://example.com

--request-target <path> Example: curl --request-target "*" -X OPTIONS https://example.com

-X, --request <command> Example: curl -X "DELETE" https://example.com

--resolve <[+]host:port:addr[,addr]...> Example: curl --resolve example.com:443:127.0.0.1 https://example.com

--retry-all-errors Example: curl --retry-all-errors https://example.com

--retry-connrefused Used together with --retry.

Example: curl --retry-connrefused --retry 7 https://example.com

--retry-delay <seconds> Example: curl --retry-delay 5 --retry 7 https://example.com

--retry-max-time <seconds> Limits the total time allowed for --retry attempts.

Example: curl --retry-max-time 30 --retry 10 https://example.com

--retry <num> Example: curl --retry 7 https://example.com

--sasl-authzid <identity> Example: curl --sasl-authzid zid imap://example.com/

--sasl-ir Example: curl --sasl-ir imap://example.com/

--service-name <name> Example: curl --service-name sockd/server https://example.com

-S, --show-error Shows errors even with the -s, --silent option enabled.

Example: curl --show-error --silent https://example.com

-s, --silent Example: curl -s https://example.com

--socks4 <host[:port]> Example: curl --socks4 hostname:4096 https://example.com

--socks4a <host[:port]> Example: curl --socks4a hostname:4096 https://example.com

--socks5-basic Example: curl --socks5-basic --socks5 hostname:4096 https://example.com

--socks5-gssapi-nec Example: curl --socks5-gssapi-nec --socks5 hostname:4096 https://example.com

--socks5-gssapi-service <name> Example: curl --socks5-gssapi-service sockd --socks5 hostname:4096 https://example.com

--socks5-gssapi Example: curl --socks5-gssapi --socks5 hostname:4096 https://example.com

--socks5-hostname <host[:port]> Example: curl --socks5-hostname proxy.example:7000 https://example.com

--socks5 <host[:port]> Example: curl --socks5 proxy.example:7000 https://example.com

-Y, --speed-limit <speed> Example: curl --speed-limit 300 --speed-time 10 https://example.com

-y, --speed-time <seconds> Example: curl --speed-limit 300 --speed-time 10 https://example.com

--ssl-allow-beast Example: curl --ssl-allow-beast https://example.com

--ssl-auto-client-cert Example: curl --ssl-auto-client-cert https://example.com

--ssl-no-revoke Example: curl --ssl-no-revoke https://example.com

--ssl-reqd Example: curl --ssl-reqd ftp://example.com

--ssl-revoke-best-effort Example: curl --ssl-revoke-best-effort https://example.com

--ssl Example: curl --ssl pop3://example.com/

-2, --sslv2 Example: curl --sslv2 https://example.com

-3, --sslv3 Example: curl --sslv3 https://example.com

--stderr <file> The - symbol tells curl to output stderr to stdout.

Example: curl --stderr output.txt https://example.com

--styled-output Example: curl --styled-output -I https://example.com

--suppress-connect-headers Example: curl --suppress-connect-headers --include -x proxy https://example.com

--tcp-fastopen Example: curl --tcp-fastopen https://example.com

--tcp-nodelay Example: curl --tcp-nodelay https://example.com

-t, --telnet-option <opt=val> Passes the TTYPE, XDISPLOC, and NEW_ENV options to the telnet protocol.

Example: curl -t TTYPE=vt100 telnet://example.com/

--tftp-blksize <value> Example: curl --tftp-blksize 1024 tftp://example.com/file

--tftp-no-options Example: curl --tftp-no-options tftp://192.168.0.1/

-z, --time-cond <time> Example: curl -z "Wed 01 Sep 2021 12:18:00" https://example.com

--tls-max <VERSION> Example: curl --tls-max 1.2 https://example.com

--tls13-ciphers <ciphersuite list> Example: curl --tls13-ciphers TLS_AES_128_GCM_SHA256 https://example.com

--tlsauthtype <type> Example: curl --tlsauthtype SRP https://example.com

--tlspassword <string> Example: curl --tlspassword pwd --tlsuser user https://example.com

--tlsuser <name> Example: curl --tlspassword pwd --tlsuser user https://example.com

--tlsv1.0 Example: curl --tlsv1.0 https://example.com

--tlsv1.1 Example: curl --tlsv1.1 https://example.com

--tlsv1.2 Example: curl --tlsv1.2 https://example.com

--tlsv1.3 Example: curl --tlsv1.3 https://example.com

-1, --tlsv1 Example: curl --tlsv1 https://example.com

--tr-encoding Example: curl --tr-encoding https://example.com

--trace-ascii <file> Example: curl --trace-ascii log.txt https://example.com

--trace-time Example: curl --trace-time --trace-ascii output https://example.com

--trace <file> Example: curl --trace log.txt https://example.com

--unix-socket <path> Example: curl --unix-socket socket-path https://example.com

-T, --upload-file <file> Example: curl -T "img[1-1000].png" ftp://ftp.example.com/

--url <url> Example: curl --url https://example.com

-B, --use-ascii Example: curl -B ftp://example.com/README

-A, --user-agent <name> Example: curl -A "Agent 007" https://example.com

-u, --user <user:password> Example: curl -u user:secret https://example.com

-v, --verbose Example: curl --verbose https://example.com

-V, --version Example: curl --version

-w, --write-out <format> Example: curl -w '%{http_code}\n' https://example.com

--xattr Example: curl --xattr -o storage https://example.com

I am using PHP's curl functions to send data to a web server from my local machine. My code looks like this:

$c = curl_init();
curl_setopt($c, CURLOPT_URL, $url);
curl_setopt($c, CURLOPT_RETURNTRANSFER, true);
curl_setopt($c, CURLOPT_POST, true);
curl_setopt($c, CURLOPT_POSTFIELDS, $data);
$result = curl_exec($c);

if (curl_exec($c) === false) {
    echo "ok";
} else {
    echo "error";
}

curl_close($c);

Unfortunately, I cannot catch any errors such as 404, 500, or network-level errors. How can I find out that the data was not posted to, or retrieved from, the remote server?

Answer 1

You can use the curl_error() function to determine whether an error occurred. For example:

$ch = curl_init();
curl_setopt($ch, CURLOPT_URL, $your_url);
curl_setopt($ch, CURLOPT_FAILONERROR, true); // Required for HTTP error codes to be reported via our call to curl_error($ch)
//...
curl_exec($ch);
if (curl_errno($ch)) {
    $error_msg = curl_error($ch);
}
curl_close($ch);

if (isset($error_msg)) {
    // TODO: handle the cURL error appropriately
}

Answer 2

If CURLOPT_FAILONERROR is false, HTTP errors will not trigger curl errors.

<?php
if (@$_GET['curl'] == "yes") {
    header('HTTP/1.1 503 Service Temporarily Unavailable');
} else {
    $ch = curl_init($url = "http://" . $_SERVER['SERVER_NAME'] . $_SERVER['PHP_SELF'] . "?curl=yes");
    curl_setopt($ch, CURLOPT_FAILONERROR, true);
    $response = curl_exec($ch);
    $http_status = curl_getinfo($ch, CURLINFO_HTTP_CODE);
    $curl_errno = curl_errno($ch);
    if ($http_status == 503)
        echo "HTTP Status == 503 <br/>";
    echo "Curl Errno returned $curl_errno <br/>";
}

Answer 3

You can read the cURL error once the request has been executed:

$url = 'http://example.com';
$ch = curl_init($url);
curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
$result = curl_exec($ch);
if (curl_errno($ch)) {
    echo 'Request Error:' . curl_error($ch);
}

And here are the cURL error codes, in case anyone needs more information about cURL errors:

<?php
// Maps cURL error numbers to their libcurl constant names
$error_codes = array(
    1 => 'CURLE_UNSUPPORTED_PROTOCOL',
    2 => 'CURLE_FAILED_INIT',
    3 => 'CURLE_URL_MALFORMAT',
    4 => 'CURLE_URL_MALFORMAT_USER',
    5 => 'CURLE_COULDNT_RESOLVE_PROXY',
    6 => 'CURLE_COULDNT_RESOLVE_HOST',
    7 => 'CURLE_COULDNT_CONNECT',
    8 => 'CURLE_FTP_WEIRD_SERVER_REPLY',
    9 => 'CURLE_REMOTE_ACCESS_DENIED',
    11 => 'CURLE_FTP_WEIRD_PASS_REPLY',
    13 => 'CURLE_FTP_WEIRD_PASV_REPLY',
    14 => 'CURLE_FTP_WEIRD_227_FORMAT',
    15 => 'CURLE_FTP_CANT_GET_HOST',
    17 => 'CURLE_FTP_COULDNT_SET_TYPE',
    18 => 'CURLE_PARTIAL_FILE',
    19 => 'CURLE_FTP_COULDNT_RETR_FILE',
    21 => 'CURLE_QUOTE_ERROR',
    22 => 'CURLE_HTTP_RETURNED_ERROR',
    23 => 'CURLE_WRITE_ERROR',
    25 => 'CURLE_UPLOAD_FAILED',
    26 => 'CURLE_READ_ERROR',
    27 => 'CURLE_OUT_OF_MEMORY',
    28 => 'CURLE_OPERATION_TIMEDOUT',
    30 => 'CURLE_FTP_PORT_FAILED',
    31 => 'CURLE_FTP_COULDNT_USE_REST',
    33 => 'CURLE_RANGE_ERROR',
    34 => 'CURLE_HTTP_POST_ERROR',
    35 => 'CURLE_SSL_CONNECT_ERROR',
    36 => 'CURLE_BAD_DOWNLOAD_RESUME',
    37 => 'CURLE_FILE_COULDNT_READ_FILE',
    38 => 'CURLE_LDAP_CANNOT_BIND',
    39 => 'CURLE_LDAP_SEARCH_FAILED',
    41 => 'CURLE_FUNCTION_NOT_FOUND',
    42 => 'CURLE_ABORTED_BY_CALLBACK',
    43 => 'CURLE_BAD_FUNCTION_ARGUMENT',
    45 => 'CURLE_INTERFACE_FAILED',
    47 => 'CURLE_TOO_MANY_REDIRECTS',
    48 => 'CURLE_UNKNOWN_TELNET_OPTION',
    49 => 'CURLE_TELNET_OPTION_SYNTAX',
    51 => 'CURLE_PEER_FAILED_VERIFICATION',
    52 => 'CURLE_GOT_NOTHING',
    53 => 'CURLE_SSL_ENGINE_NOTFOUND',
    54 => 'CURLE_SSL_ENGINE_SETFAILED',
    55 => 'CURLE_SEND_ERROR',
    56 => 'CURLE_RECV_ERROR',
    58 => 'CURLE_SSL_CERTPROBLEM',
    59 => 'CURLE_SSL_CIPHER',
    60 => 'CURLE_SSL_CACERT',
    61 => 'CURLE_BAD_CONTENT_ENCODING',
    62 => 'CURLE_LDAP_INVALID_URL',
    63 => 'CURLE_FILESIZE_EXCEEDED',
    64 => 'CURLE_USE_SSL_FAILED',
    65 => 'CURLE_SEND_FAIL_REWIND',
    66 => 'CURLE_SSL_ENGINE_INITFAILED',
    67 => 'CURLE_LOGIN_DENIED',
    68 => 'CURLE_TFTP_NOTFOUND',
    69 => 'CURLE_TFTP_PERM',
    70 => 'CURLE_REMOTE_DISK_FULL',
    71 => 'CURLE_TFTP_ILLEGAL',
    72 => 'CURLE_TFTP_UNKNOWNID',
    73 => 'CURLE_REMOTE_FILE_EXISTS',
    74 => 'CURLE_TFTP_NOSUCHUSER',
    75 => 'CURLE_CONV_FAILED',
    76 => 'CURLE_CONV_REQD',
    77 => 'CURLE_SSL_CACERT_BADFILE',
    78 => 'CURLE_REMOTE_FILE_NOT_FOUND',
    79 => 'CURLE_SSH',
    80 => 'CURLE_SSL_SHUTDOWN_FAILED',
    81 => 'CURLE_AGAIN',
    82 => 'CURLE_SSL_CRL_BADFILE',
    83 => 'CURLE_SSL_ISSUER_ERROR',
    84 => 'CURLE_FTP_PRET_FAILED',
    85 => 'CURLE_RTSP_CSEQ_ERROR',
    86 => 'CURLE_RTSP_SESSION_ERROR',
    87 => 'CURLE_FTP_BAD_FILE_LIST',
    88 => 'CURLE_CHUNK_FAILED',
);
?>
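The list above maps numbers to constant names. When a readable description of a code is wanted instead, PHP 5.5 and later offer curl_strerror(). The following is a minimal sketch, not part of the original answer: the helper name describeCurlErrno is hypothetical, and the fallback branch exists only so the snippet still runs when the cURL extension is not loaded.

```php
<?php
// Hypothetical helper: turn a cURL error number into readable text.
function describeCurlErrno(int $errno): string
{
    // curl_strerror() is provided by the cURL extension (PHP >= 5.5).
    if (function_exists('curl_strerror')) {
        $text = curl_strerror($errno);
        if (is_string($text) && $text !== '') {
            return $text;
        }
    }
    // Fallback when the extension is missing or returns nothing.
    return 'cURL error ' . $errno;
}

echo describeCurlErrno(6), PHP_EOL; // 6 is CURLE_COULDNT_RESOLVE_HOST in the list above
```

The exact wording of the description comes from the installed libcurl and can differ between versions, so it is better suited to logs than to string comparisons.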

Answer 4

Since you want to catch both network-level errors and HTTP errors, the following approach works better:

function curl_error_test($url) {
    $ch = curl_init();
    curl_setopt($ch, CURLOPT_URL, $url);
    curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
    $responseBody = curl_exec($ch);
    /*
     * If curl_exec() failed, then:
     * $responseBody is false
     * curl_errno() returns a non-zero number
     * curl_error() returns a non-empty string
     * Which one to check is up to you
     */
    if ($responseBody === false) {
        return "CURL Error: " . curl_error($ch);
    }
    $responseCode = curl_getinfo($ch, CURLINFO_HTTP_CODE);
    /*
     * 4xx status codes are client errors
     * 5xx status codes are server errors
     */
    if ($responseCode >= 400) {
        return "HTTP Error: " . $responseCode;
    }
    return "No CURL or HTTP error";
}

Tests:

curl_error_test("http://expamle.com"); // CURL Error: Could not resolve host: expamle.com

curl_error_test("http://example.com/whatever"); // HTTP Error: 404

curl_error_test("http://example.com"); // Everything is fine: no CURL or HTTP error

Answer 5

Another variant of the code:

// Fragment: assumes $ch has already been executed with CURLOPT_HEADER enabled
// and that $response holds the string returned by curl_exec($ch).
$responseInfo = curl_getinfo($ch);
$httpCode = curl_getinfo($ch, CURLINFO_HTTP_CODE);
$header_size = curl_getinfo($ch, CURLINFO_HEADER_SIZE);
// Everything after the headers is the body
$body = substr($response, $header_size);
$result = array();
$result['httpCode'] = $httpCode;
$result['body'] = json_decode($body);
$result['responseInfo'] = $responseInfo;
print_r($httpCode);
print_r($result['body']);
curl_close($ch);
if ($httpCode == 403) {
    print_r("Access denied");
    exit;
} else {
    // other errors
}
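The fragment above relies on CURLINFO_HEADER_SIZE, which is only useful when the response string contains the headers, that is, when CURLOPT_HEADER was enabled before curl_exec(). The split itself can be sketched in isolation. The helper name splitRawResponse and the sample response are assumptions made for illustration, chosen so the snippet runs without network access.

```php
<?php
// Hypothetical helper: split a raw response (headers + body) at the header size.
function splitRawResponse(string $raw, int $headerSize): array
{
    return [
        'headers' => substr($raw, 0, $headerSize),
        'body'    => substr($raw, $headerSize),
    ];
}

// Sample data standing in for curl_exec() output with CURLOPT_HEADER enabled.
$headers = "HTTP/1.1 403 Forbidden\r\nContent-Type: application/json\r\n\r\n";
$raw     = $headers . '{"error":"denied"}';

// With a real handle, the size would come from curl_getinfo($ch, CURLINFO_HEADER_SIZE).
$parts = splitRawResponse($raw, strlen($headers));
$body  = json_decode($parts['body'], true);

echo $body['error'], PHP_EOL; // prints "denied"
```

Decoding with the second argument set to true yields an array rather than an object, which is a matter of preference; the original fragment uses the object form.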

PHP’s cURL functions are extremely useful for sending HTTP requests.

Some examples of where they come in handy:

- Retrieving data from an external API.

- Sending data to an external web service.

- Checking to see if an HTTP resource exists.

- Crawling / scraping web pages (logging into other websites with PHP).

Although you can easily request external content via the function file_get_contents, cURL is much more useful in the sense that it allows us to detect and handle certain HTTP errors.

Let’s take the following example.

$url = 'http://example.com';
$ch = curl_init($url);
curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
$result = curl_exec($ch);
echo $result;

As you can see, we have set up a very simple GET request that returns the contents of a website called example.com.

Unfortunately, this code doesn’t take into account the fact that the cURL request could fail. As a result, the request might fail without providing us with any details about what happened.

Using curl_errno to detect cURL errors.

This is where the curl_errno function comes in handy.

The curl_errno function will return the number 0 (zero) if the request was successful. In other words, it will return a “falsey” value if no error occurs. This is because PHP sees 0 as a false value in a boolean context.

This allows us to figure out whether or not our cURL request resulted in an error.

Take a look at the following example.

$url = 'http://example.com';

$ch = curl_init($url);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

$result = curl_exec($ch);

if(curl_errno($ch)){

echo 'Request Error:' . curl_error($ch);

}

If an error occurs, then our script will print out the result of the curl_error function.

Throwing Exceptions.

In certain situations, you might want to throw an exception if a cURL request fails.

For this example, let us pretend that we have an object function called get.

/**

* Send a GET request to a URL.

*

* @param string $url

* @return string

* @throws Exception If cURL request fails.

*/

public function get($url){

$ch = curl_init($url);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

$result = curl_exec($ch);

if(curl_errno($ch)){

throw new Exception(curl_error($ch));

}

return $result;

}

As you can see, the function above will attempt to send a GET request to a given URL.

If all goes well, the function will return the contents of the URL.

However, if the request fails and a cURL error exists, PHP will throw an Exception.

This allows us to handle the request like so.

try{

$object->get('http://test.com');

} catch(Exception $e){

//do something with the exception you caught

}

In the above piece of code, we are using a TRY-CATCH block.

If we do not want our application to continue after a failed HTTP request, we can omit the TRY-CATCH block and let our Exception Handler deal with it.
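One way to provide such an Exception Handler is PHP's set_exception_handler(). The snippet below is a minimal sketch rather than the author's setup: the variable name $handleUncaught and the messages are hypothetical, and Throwable requires PHP 7 or later.

```php
<?php
// Hypothetical top-level handler; it runs for any exception that no try/catch handles.
$handleUncaught = function (Throwable $e): void {
    // Keep the technical detail in the log, show visitors something generic.
    error_log('Unhandled exception: ' . $e->getMessage());
    echo 'Request failed, please try again later.', PHP_EOL;
};

set_exception_handler($handleUncaught);

// From here on, an uncaught exception thrown by $object->get($url) reaches
// $handleUncaught, and the script stops once the handler returns.
```

Registering the handler once, early in the request, keeps individual calls free of try/catch blocks while still avoiding a raw fatal error page.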

Updated: 08/16/2021 by

The curl command transfers data to or from a network server, using one of the supported protocols (HTTP, HTTPS, FTP, FTPS, SCP, SFTP, TFTP, DICT, TELNET, LDAP or FILE). It is designed to work without user interaction, so it is ideal for use in a shell script.

The software offers proxy support, user authentication, FTP uploading, HTTP posting, SSL connections, cookies, file transfer resume, metalink, and other features.

Syntax

curl [options] [URL...]

Options

| -a, --append | (FTP/SFTP) When used in an FTP upload, this will tell curl to append to the target file instead of overwriting it. If the file doesn’t exist, it is created. Note that this option is ignored by some SSH servers, including OpenSSH. |

| -A, --user-agent <agent string> | (HTTP) Specify the User-Agent string to send to the HTTP server. Some CGI fail if the agent string is not set to "Mozilla/4.0". To encode blanks in the string, surround the string with single quote marks. This value can also be set with the -H/--header option. If this option is set more than once, the last one will be the one that’s used. |

| --anyauth | (HTTP) Tells curl to figure out authentication method by itself, and use the most secure method the remote site claims it supports. This is done by first making a request and checking the response-headers, thus possibly inducing a network round-trip. This is used instead of setting a specific authentication method, which you can do with --basic, --digest, --ntlm, and --negotiate. Note that using --anyauth is not recommended if you do uploads from stdin since it may require data to be sent twice and then the client must be able to rewind. If the need should arise when uploading from stdin, the upload operation fails. |

| -b, --cookie <name=data> | (HTTP) Pass the data to the HTTP server as a cookie. It is expected to be the data previously received from the server in a "Set-Cookie:" line. The data should be in the format "NAME1=VALUE1; NAME2=VALUE2". If no '=' (equals) character is used in the line, it is treated as a file name to use to read previously stored cookie lines from, which should be used in this session if they match. Using this method also activates the "cookie parser" which makes curl record incoming cookies too, which may be handy if you’re using this in combination with the --location option. The file format of the file to read cookies from should be plain HTTP headers or the Netscape/Mozilla cookie file format. NOTE: the file specified with -b/--cookie is only used as input. No cookies will be stored in the file. To store cookies, use the -c/--cookie-jar option, or you can save the HTTP headers to a file using -D/--dump-header. If this option is set more than once, the last occurrence will be the option that’s used. |

| -B, --use-ascii | (FTP/LDAP) Enable ASCII transfer. For FTP, this can also be enforced using a URL that ends with ";Type=A". This option causes data sent to stdout to be in text mode for win32 systems. If this option is used twice, the second one disables ASCII usage. |

| --basic | (HTTP) Tells curl to use HTTP Basic authentication. This is the default and this option is usually pointless, unless you use it to override a previously set option that sets a different authentication method (such as --ntlm, --digest and --negotiate). |