I want to mount the NFS share of a Zyxel NSA310s NAS. showmount, run on the client machine, lists the share:

$ showmount 10.0.0.100 -e
Export list for 10.0.0.100:
/i-data/7fd943bf/nfs/zyxelNFS *

The client’s /etc/fstab contains the line:

10.0.0.100:/i-data/7fd943bf/nfs/zyxelNFS /media/nasNFS nfs rw 0 0

But mounting does not work:

sudo mount /media/nasNFS/ -v
mount.nfs: timeout set for Mon May 25 17:34:46 2015
mount.nfs: trying text-based options 'vers=4,addr=10.0.0.100,clientaddr=10.0.0.2'
mount.nfs: mount(2): Protocol not supported
mount.nfs: trying text-based options 'addr=10.0.0.100'
mount.nfs: prog 100003, trying vers=3, prot=6
mount.nfs: trying 10.0.0.100 prog 100003 vers 3 prot TCP port 2049
mount.nfs: portmap query retrying: RPC: Program/version mismatch
mount.nfs: prog 100003, trying vers=3, prot=17
mount.nfs: trying 10.0.0.100 prog 100003 vers 3 prot UDP port 2049
mount.nfs: portmap query failed: RPC: Program/version mismatch
mount.nfs: Protocol not supported

nfs-common is installed. What else could be missing?

asked May 25, 2015 at 15:44


To summarize the steps taken to get to the answer:

According to the output, the NFS server rejects NFSv4 and also rejects NFSv3 over both TCP and UDP. To see the capabilities of the NFS server, you can use rpcinfo 10.0.0.100 (optionally filtering for NFS by appending |egrep "service|nfs").

Apparently the only version supported by the server is version 2:

rpcinfo 10.0.0.100 |egrep "service|nfs"
   program version netid     address                service    owner
    100003    2    udp       0.0.0.0.8.1            nfs        unknown
    100003    2    tcp       0.0.0.0.8.1            nfs        unknown
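The same check can be scripted when the rpcinfo table is long. The helpers below are hypothetical (they are not part of nfs-utils) and assume the column layout shown above. nfs_versions prints each version registered for program 100003 (NFS). uaddr_port decodes the universal address in the address column. Its last two dot-separated fields are the port's high and low bytes, so 0.0.0.0.8.1 means port 8 × 256 + 1 = 2049.

```bash
# Hypothetical helpers for reading `rpcinfo HOST` output (requires bash).

# nfs_versions: read rpcinfo output on stdin and print each version
# registered for program 100003 (NFS) once, in ascending order.
nfs_versions() {
  awk '$1 == 100003 { print $2 }' | sort -un
}

# uaddr_port UADDR: convert a universal address such as 0.0.0.0.8.1 into
# a port number. The last two dot-separated fields are the port's high
# and low bytes.
uaddr_port() {
  local addr=$1
  local lo=${addr##*.}     # last field
  local rest=${addr%.*}    # everything before the last field
  local hi=${rest##*.}     # second-to-last field
  echo $(( hi * 256 + lo ))
}

# Usage:
#   rpcinfo 10.0.0.100 | nfs_versions
#   uaddr_port 0.0.0.0.8.1
```

With the output from this answer, nfs_versions should print only 2. uaddr_port confirms that the address column points at the usual NFS port, 2049.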

The solution is to mount the export with the vers=2 option, either on the command line:

mount -o rw,vers=2 10.0.0.100:/i-data/7fd943bf/nfs/zyxelNFS /media/nasNFS

or by editing the /etc/fstab:

10.0.0.100:/i-data/7fd943bf/nfs/zyxelNFS /media/nasNFS nfs rw,vers=2 0 0

Another approach may be to change the NFS server to support version 3 (or even 4).

answered May 26, 2015 at 19:47

Lambert

I’m getting this error on Fedora 31. It turns out the drive is already mounted…

answered Jul 24, 2020 at 16:11

Swiss Frank

I ran into the «Protocol not supported» error as well. In my case the root cause turned out to be a subtle issue with a DNS reverse entry.

Background: I was using NFSv4 and had the following entries in /etc/exports:

/srv/nfs *.example.com(ro,fsid=root,insecure,no_subtree_check,async,root_squash)

/srv/nfs/data myhost.example.com(rw,sync,no_subtree_check)

Instead of returning just the FQDN, running host 1.2.3.4 returned pointers to both «myhost.» and «myhost.example.com.». My NFS server seemed to look only at the first PTR record in the DNS response. That record didn’t match the wildcard in /etc/exports, so the server blocked NFSv4 from this host. If you use host-name-based rules in /etc/exports, double-check that reverse DNS lookups work correctly for your clients.
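The sketch below gives a rough way to test this. It is an illustration, not a description of how any particular NFS server matches hosts. It assumes, as this answer suspects, that only the first PTR record counts, and it approximates the exports(5) host wildcard with a shell glob. first_ptr_matches and ptr_names are hypothetical helper names. 1.2.3.4 and *.example.com are the placeholders from the answer.

```bash
# Sketch only (requires bash): approximates exports(5) host wildcards with
# a shell glob and assumes only the FIRST PTR record is considered.

# first_ptr_matches PATTERN NAME...: succeed if the first NAME, with its
# trailing dot removed, matches the wildcard PATTERN.
first_ptr_matches() {
  local pattern=$1
  local first=${2%.}
  [ -n "$first" ] || return 1
  # $pattern is deliberately unquoted so that * and ? act as wildcards.
  case $first in
    $pattern) return 0 ;;
    *) return 1 ;;
  esac
}

# ptr_names ADDRESS: print the PTR targets in the order `host` reports them.
ptr_names() {
  host "$1" | awk '/domain name pointer/ { print $NF }'
}

# Usage, with the placeholders from the answer:
#   first_ptr_matches '*.example.com' $(ptr_names 1.2.3.4) \
#     || echo "first PTR record does not match *.example.com"
```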

answered May 3, 2020 at 0:48

Martin Konrad

I was getting the same error («Protocol not supported»), although in my case it was caused by the NFS server not allowing my IP address to mount (HBAC rules). I had to log in to the NFS server and allow my IP.

When mounting with -vvv, I saw several «denied» messages before the final «Protocol not supported». Without the verbose option, «Protocol not supported» was the only message shown.

answered Apr 1, 2022 at 15:56

IT_User

[root@sousvide mnt]# mount projects/

mount.nfs: Protocol not supported

In my case, the fix was to adjust the FreeNAS (BSD) setting «Authorized Hosts and IP Addresses» to give the machine in question access.

/etc/fstab had an entry like

x.x.x.x:/mnt/media/projects /mnt/projects nfs defaults,timeo=900,retrans=5,_netdev,noauto 0 0

answered Dec 26, 2021 at 3:44

Kevin

Hi, I was using CentOS 7 and was also getting that protocol error. I fixed it by running these commands: [root@server ~]# yum install nfs-utils, then systemctl start nfs, and then I mounted the folder again. Apparently the NFS protocol versions in use were old, and updating fixed it.

answered Sep 28, 2022 at 1:27


Try using these options:

.... nfs rsize=8192,wsize=8192,timeo=14,intr 0 0

Anthon

answered May 26, 2015 at 2:20


NFS/Troubleshooting

This article or section is out of date.

Reason: Not all sections are up-to-date with NFSv4 changes. (Discuss in Talk:NFS/Troubleshooting)

Dedicated article for common problems and solutions.

Server-side issues

exportfs: /etc/exports:2: syntax error: bad option list

Make sure the option list in /etc/exports (the part in parentheses) contains no whitespace.
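The sketch below is a quick way to find the offending line. check_exports_options is a hypothetical helper. It prints the line number and text of every non-comment line whose parenthesised option list contains whitespace. It only catches this one mistake, and exportfs -r will still report any other errors.

```bash
# Hypothetical helper (requires bash): print "LINE:TEXT" for each
# non-comment line of an exports file whose option list, i.e. the text
# inside parentheses, contains whitespace. Exits 0 if such lines exist.
check_exports_options() {
  local file=${1:-/etc/exports}
  grep -nE -e '^[^#]*\([^)]*[[:space:]][^)]*\)' -- "$file"
}

# Usage:
#   check_exports_options && echo "remove the whitespace from the lines above"
```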

exportfs: requires fsid= for NFS export

As not all filesystems are stored on devices and not all filesystems have UUIDs (e.g. FUSE), it is sometimes necessary to explicitly tell NFS how to identify a filesystem. This is done with the fsid option:

/etc/exports

/srv/nfs client(rw,sync,crossmnt,fsid=0)
/srv/nfs/music client(rw,sync,fsid=10)

Group/GID permissions issues

If NFS shares mount fine and are fully accessible to the owner but not to group members, check the number of groups that user belongs to. NFS (with the default AUTH_SYS security flavor) passes at most 16 groups per user. If you have users in more groups than this, you need to enable the manage-gids start-up flag on the NFS server:

/etc/nfs.conf

[mountd]
manage-gids=y
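To find out whether a user is affected, the sketch below counts the groups reported by id -G and warns when there are more than 16. check_group_count is a hypothetical name, and alice is a placeholder user.

```bash
# Hypothetical helper (requires bash): print how many groups USER is in
# and warn (exit 1) above the 16-group limit. Exit 2 for an unknown user.
check_group_count() {
  local user=$1 gids
  gids=$(id -G -- "$user") || return 2
  local -a arr
  read -ra arr <<< "$gids"          # one array element per group ID
  echo "$user: ${#arr[@]} groups"
  if (( ${#arr[@]} > 16 )); then
    echo "warning: $user is in more than 16 groups; consider manage-gids=y on the server" >&2
    return 1
  fi
}

# Usage:
#   check_group_count alice
```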

«Permission denied» when trying to write files as root

- If you need to mount shares as root, and have full r/w access from the client, add the no_root_squash option to the export in /etc/exports:

/var/cache/pacman/pkg 192.168.1.0/24(rw,no_subtree_check,no_root_squash)

- You must also add no_root_squash to the first line in /etc/exports:

/ 192.168.1.0/24(rw,fsid=root,no_root_squash,no_subtree_check)

«RPC: Program not registered» when showmount -e command issued

Make sure that nfs-server.service and rpcbind.service are running on the server side (see systemd). If they are not, start and enable them.

Also make sure NFSv3 is enabled. showmount does not work with NFSv4-only servers.
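Both conditions can be checked from the client with rpcinfo -p, which lists what the server has registered with rpcbind. The hypothetical helper below reads that output. It succeeds if a given program number, and optionally a version, is present. Program 100005 is the MOUNT protocol (mountd) that showmount queries, and 100003 is NFS itself. SERVER is a placeholder.

```bash
# Hypothetical helper: read `rpcinfo -p` output on stdin and succeed if
# PROGRAM (and, if given, VERSION) is registered with rpcbind.
rpc_registered() {
  awk -v prog="$1" -v vers="${2:-}" '
    $1 == prog && (vers == "" || $2 == vers) { found = 1 }
    END { exit !found }'
}

# Usage (SERVER is a placeholder):
#   rpcinfo -p SERVER | rpc_registered 100005   || echo "mountd (MOUNT) is not registered"
#   rpcinfo -p SERVER | rpc_registered 100003 3 || echo "NFSv3 is not registered"
```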

UDP mounts not working

nfs-utils disabled serving NFS over UDP in version 2.2.1. Arch core updated to 2.3.1 on 21 Dec 2017 (skipping over 2.2.1). If UDP stopped working then, add udp=y under [nfsd] in /etc/nfs.conf, then restart nfs-server.service.

Timeout with big directories

Since nfs-utils version 1.0.x, every subdirectory is checked for permissions. This can lead to timeouts on directories with a «large» number of subdirectories, even just a few hundred.

To disable this behaviour, add the no_subtree_check option to the share's entry in /etc/exports.

Client-side issues

mount.nfs4: No such device

Make sure the nfsd kernel module has been loaded.
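mount generally reports «No such device» (ENODEV) when the kernel does not know the requested filesystem type. On a Linux machine, /proc/filesystems lists the types the running kernel currently supports. The hypothetical helper below looks a type up there, and an alternative file can be passed for testing.

```bash
# Hypothetical helper: succeed if FSTYPE is listed in FILE, which defaults
# to /proc/filesystems (one type per line, optionally prefixed by "nodev").
fs_supported() {
  local fstype=$1 file=${2:-/proc/filesystems}
  awk -v t="$fstype" '$NF == t { found = 1 } END { exit !found }' "$file"
}

# Usage:
#   fs_supported nfs4 || echo "the running kernel does not list nfs4"
```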

mount.nfs4: Invalid argument

Enable and start nfs-client.target and make sure the appropriate daemons (nfs-idmapd, rpc-gssd, etc) are running on the server.

mount.nfs4: Network is unreachable

Users of systemd-networkd or NetworkManager might notice that NFS shares are not mounted at boot.

Force the network to be completely configured by enabling systemd-networkd-wait-online.service or NetworkManager-wait-online.service. This may slow down the boot-process because fewer services run in parallel.

Tip: If the NFS server is only expecting IPv4 addresses and you are using NetworkManager-wait-online.service, set ipv4.may-fail=no in your network profile. This makes sure that an IPv4 address is acquired before NetworkManager-wait-online.service is reached.

mount.nfs4: an incorrect mount option was specified

This can happen if using the sec=krb5 option without nfs-client.target and/or rpc-gssd.service running. Starting and enabling those services should resolve the issue.

Unable to connect from OS X clients

When trying to connect from an OS X client, you will see that everything is ok in the server logs, but OS X will refuse to mount your NFS share. You can do one of two things to fix this:

- On the NFS server, add the insecure option to the share in /etc/exports and re-run exportfs -r.

… OR …

- On the OS X client, add the resvport option to the mount command line. You can also set resvport as a default client mount option in /etc/nfs.conf:

/etc/nfs.conf

nfs.client.mount.options = resvport

Using the default client mount option should also affect mounting the share from Finder via «Connect to Server…».

Unreliable connection from OS X clients

OS X’s NFS client is optimized for OS X Servers and might present some issues with Linux servers. If you are experiencing slow performance, frequent disconnects or problems with international characters, edit the default mount options by adding the line nfs.client.mount.options = intr,locallocks,nfc to /etc/nfs.conf on your Mac client. More information about the mount options can be found in the OS X mount_nfs man page.

Intermittent client freezes when copying large files

If you copy large files from your client machine to the NFS server, the transfer speed is very fast, but after some seconds the speed drops and your client machine intermittently locks up completely for some time until the transfer is finished.

Try adding sync as a mount option on the client (e.g. in /etc/fstab) to fix this problem.

mount.nfs: Operation not permitted

NFSv4

If you use Kerberos (sec=krb5*), make sure the client and server clocks are correct. Using ntpd or systemd-timesyncd is recommended. Also, check that the canonical name for the server as resolved on the client (see Domain name resolution) matches the name in the server’s NFS principal.

NFSv3 and earlier

nfs-utils versions 1.2.1-2 or higher use NFSv4 by default, resulting in NFSv3 shares failing on upgrade. The problem can be solved by using either mount option 'vers=3' or 'nfsvers=3' on the command line:

# mount.nfs remote target directory -o ...,vers=3,...
# mount.nfs remote target directory -o ...,nfsvers=3,...

or in /etc/fstab:

remote target directory nfs ...,vers=3,... 0 0
remote target directory nfs ...,nfsvers=3,... 0 0

mount.nfs: Protocol not supported

This error occurs when you include the export root in the path of the NFS source.

For example:

# mount SERVER:/srv/nfs4/media /mnt
mount.nfs4: Protocol not supported

Use the path relative to the export root instead:

# mount SERVER:/media /mnt
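Turning a server-side path into the NFSv4 source path only requires stripping the export-root prefix, as sketched below. nfs4_source_path is a hypothetical helper. /srv/nfs4 stands for the export with fsid=0 (or fsid=root), and the example paths are the ones above.

```bash
# Hypothetical helper (requires bash): print the NFSv4 source path for a
# server-side PATH located under the export ROOT.
nfs4_source_path() {
  local root=${1%/} path=$2
  case $path in
    "$root")   echo / ;;                      # the export root itself
    "$root"/*) echo "${path#"$root"}" ;;      # strip the root prefix
    *)         echo "$path is not under $root" >&2; return 1 ;;
  esac
}

# nfs4_source_path /srv/nfs4 /srv/nfs4/media   # prints /media
# mount SERVER:"$(nfs4_source_path /srv/nfs4 /srv/nfs4/media)" /mnt
```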

Permissions issues

If you find that you cannot set file permissions properly, make sure the user and group exist on both the client and the server.

If all your files are owned by nobody and you are using NFSv4, make sure nfs-idmapd.service has been started on both the client and the server.

On some systems, detecting the domain from the FQDN minus the hostname does not seem to work reliably. If files are still shown as owned by nobody after the above changes, edit /etc/idmapd.conf and make sure Domain is set to the FQDN minus the hostname. For example:

/etc/idmapd.conf

[General]
Domain = domain.ext

[Mapping]
Nobody-User = nobody
Nobody-Group = nobody

[Translation]
Method = nsswitch
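If you are unsure what «FQDN minus hostname» is for a machine, the hypothetical helper below strips the first label from the fully qualified name. By default it uses the output of hostname -f, assuming your hostname command supports -f. Where nfs-utils provides it, nfsidmap -d should print the domain the ID mapper actually uses, and you can compare that against this value.

```bash
# Hypothetical helper (requires bash): print FQDN minus the host name,
# i.e. a candidate value for Domain in /etc/idmapd.conf.
idmap_domain() {
  local fqdn=${1:-$(hostname -f)}
  fqdn=${fqdn%.}                    # drop a trailing root dot, if any
  case $fqdn in
    *.*) echo "${fqdn#*.}" ;;       # remove the first label
    *)   echo "no domain part in '$fqdn'" >&2; return 1 ;;
  esac
}

# idmap_domain host.domain.ext   # prints domain.ext
```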

Problems with Vagrant and synced_folders

If you get an error about an unsupported protocol, you need to enable NFS over UDP on your host (or make Vagrant use NFS over TCP). See #UDP mounts not working.

If Vagrant scripts are unable to mount folders over NFS, installing the net-tools package may solve the issue.

Performance issues

This NFS Howto page has some useful information regarding performance. Here are some further tips:

Diagnose the problem

- Htop should be your first port of call. The most obvious symptom will be a maxed-out CPU.

- Press F2, and under «Display options», enable «Detailed CPU time». Press F1 for an explanation of the colours used in the CPU bars. In particular, is the CPU spending most of its time responding to IRQs, or in Wait-IO (wio)?

Close-to-open/flush-on-close

Symptoms: Your clients are writing many small files. The server CPU is not maxed out, but there is very high wait-IO, and the server disk seems to be churning more than you might expect.

In order to ensure data consistency across clients, the NFS protocol requires that the client’s cache is flushed (all data is pushed to the server) whenever a file is closed after writing. Because the server is not allowed to buffer disk writes (if it crashes, the client will not realise the data was not written properly), the data is written to disk immediately before the client’s request is completed. When you are writing lots of small files from the client, this means that the server spends most of its time waiting for small files to be written to its disk, which can cause a significant reduction in throughput.

See this excellent article or the nfs manpage for more details on the close-to-open policy. There are several approaches to solving this problem:

The nocto mount option

Warning: The Linux kernel does not seem to honor this option properly. Files are still flushed when they are closed.

If all of the following conditions are satisfied:

- The export you have mounted on the client is only going to be used by the one client.

- It does not matter too much if a file written on one client does not immediately appear on other clients.

- It does not matter if a file is lost when a client crashes after writing it, even though the client already considered the file saved.

Use the nocto mount option, which will disable the close-to-open behavior.

The async export option

Does your situation match these conditions?

- It is important that when a file is closed after writing on one client, it is:
  - Immediately visible on all the other clients.
  - Safely stored on the server, even if the client crashes immediately after closing the file.
- It is not important to you that if the server crashes:
  - You may lose the files that were most recently written by clients.
  - When the server is restarted, the clients will believe their recent files exist, even though they were actually lost.

In this situation, you can use async instead of sync in the server’s /etc/exports file for those specific exports. See the exports manual page for details. In this case, it does not make sense to use the nocto mount option on the client.

Buffer cache size and MTU

Symptoms: High kernel or IRQ CPU usage, a very high packet count through the network card.

This is a trickier optimisation. Make sure this is definitely the problem before spending too much time on this. The default values are usually fine for most situations.

See this article for information about I/O buffering in NFS. Essentially, data is accumulated into buffers before being sent. The size of the buffer will affect the way data is transmitted over the network. The Maximum Transmission Unit (MTU) of the network equipment will also affect throughput, as the buffers need to be split into MTU-sized chunks before they are sent over the network. If your buffer size is too big, the kernel or hardware may spend too much time splitting it into MTU-sized chunks. If the buffer size is too small, there will be overhead involved in sending a very large number of small packets. You can use the rsize and wsize mount options on the client to alter the buffer cache size. To achieve the best throughput, you need to experiment and discover the best values for your setup.

It is possible to change the MTU of many network cards. If your clients are on a separate subnet (e.g. for a Beowulf cluster), it may be safe to configure all of the network cards to use a high MTU. This should be done in very-high-bandwidth environments.
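The trade-off described above can be estimated roughly. The sketch below calculates how many frames a single rsize/wsize buffer is split into for a given MTU. It assumes 40 bytes of IPv4 and TCP headers per frame and ignores RPC/NFS headers, TCP options and segmentation offload, so the numbers are only rough lower bounds. packets_per_buffer is a hypothetical name.

```bash
# Hypothetical helper (requires bash): estimate the number of frames one
# buffer of SIZE bytes needs at the given MTU.
# ASSUMPTION: 40 bytes of IPv4 + TCP headers per frame, no options.
packets_per_buffer() {
  local size=$1 mtu=$2
  local payload=$(( mtu - 40 ))
  (( payload > 0 )) || { echo "MTU too small" >&2; return 1; }
  echo $(( (size + payload - 1) / payload ))   # ceiling division
}

# packets_per_buffer 32768 1500   # prints 23
# packets_per_buffer 32768 9000   # prints 4
```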

See NFS#Performance tuning for more information.

Debugging

Using rpcdebug

Using rpcdebug is the easiest way to manipulate the kernel interfaces in place of echoing bitmasks to /proc.

| Option | Description |
|---|---|
| -c | Clear the given debug flags |
| -s | Set the given debug flags |
| -m module | Specify which module’s flags to set or clear. |
| -v | Increase the verbosity of rpcdebug’s output |
| -h | Print a help message and exit. When combined with the -v option, also prints the available debug flags. |

For the -m option, the available modules are:

| Module | Description |
|---|---|
| nfsd | The NFS server |
| nfs | The NFS client |
| nlm | The Network Lock Manager, in either an NFS client or server |
| rpc | The Remote Procedure Call module, in either an NFS client or server |

Examples:

rpcdebug -m rpc -s all    # sets all debug flags for RPC
rpcdebug -m rpc -c all    # clears all debug flags for RPC
rpcdebug -m nfsd -s all   # sets all debug flags for NFS Server
rpcdebug -m nfsd -c all   # clears all debug flags for NFS Server

Once the flags are set you can tail the journal for the debug output, usually by running journalctl -fl as root or similar.

Using mountstats

The nfs-utils package contains the mountstats tool, which can retrieve a lot of statistics about NFS mounts, including average timings and packet size.

$ mountstats
Stats for example:/tank mounted on /tank:
  NFS mount options: rw,sync,vers=4.2,rsize=524288,wsize=524288,namlen=255,acregmin=3,acregmax=60,acdirmin=30,acdirmax=60,soft,proto=tcp,port=0,timeo=15,retrans=2,sec=sys,clientaddr=xx.yy.zz.tt,local_lock=none
  NFS server capabilities: caps=0xfbffdf,wtmult=512,dtsize=32768,bsize=0,namlen=255
  NFSv4 capability flags: bm0=0xfdffbfff,bm1=0x40f9be3e,bm2=0x803,acl=0x3,sessions,pnfs=notconfigured
  NFS security flavor: 1  pseudoflavor: 0

NFS byte counts:
  applications read 248542089 bytes via read(2)
  applications wrote 0 bytes via write(2)
  applications read 0 bytes via O_DIRECT read(2)
  applications wrote 0 bytes via O_DIRECT write(2)
  client read 171375125 bytes via NFS READ
  client wrote 0 bytes via NFS WRITE

RPC statistics:
  699 RPC requests sent, 699 RPC replies received (0 XIDs not found)
  average backlog queue length: 0

READ:
  338 ops (48%)
  avg bytes sent per op: 216  avg bytes received per op: 507131
  backlog wait: 0.005917  RTT: 548.736686  total execute time: 548.775148 (milliseconds)
GETATTR:
  115 ops (16%)
  avg bytes sent per op: 199  avg bytes received per op: 240
  backlog wait: 0.008696  RTT: 15.756522  total execute time: 15.843478 (milliseconds)
ACCESS:
  93 ops (13%)
  avg bytes sent per op: 203  avg bytes received per op: 168
  backlog wait: 0.010753  RTT: 2.967742  total execute time: 3.032258 (milliseconds)
LOOKUP:
  32 ops (4%)
  avg bytes sent per op: 220  avg bytes received per op: 274
  backlog wait: 0.000000  RTT: 3.906250  total execute time: 3.968750 (milliseconds)
OPEN_NOATTR:
  25 ops (3%)
  avg bytes sent per op: 268  avg bytes received per op: 350
  backlog wait: 0.000000  RTT: 2.320000  total execute time: 2.360000 (milliseconds)
CLOSE:
  24 ops (3%)
  avg bytes sent per op: 224  avg bytes received per op: 176
  backlog wait: 0.000000  RTT: 30.250000  total execute time: 30.291667 (milliseconds)
DELEGRETURN:
  23 ops (3%)
  avg bytes sent per op: 220  avg bytes received per op: 160
  backlog wait: 0.000000  RTT: 6.782609  total execute time: 6.826087 (milliseconds)
READDIR:
  4 ops (0%)
  avg bytes sent per op: 224  avg bytes received per op: 14372
  backlog wait: 0.000000  RTT: 198.000000  total execute time: 198.250000 (milliseconds)
SERVER_CAPS:
  2 ops (0%)
  avg bytes sent per op: 172  avg bytes received per op: 164
  backlog wait: 0.000000  RTT: 1.500000  total execute time: 1.500000 (milliseconds)
FSINFO:
  1 ops (0%)
  avg bytes sent per op: 172  avg bytes received per op: 164
  backlog wait: 0.000000  RTT: 2.000000  total execute time: 2.000000 (milliseconds)
PATHCONF:
  1 ops (0%)
  avg bytes sent per op: 164  avg bytes received per op: 116
  backlog wait: 0.000000  RTT: 1.000000  total execute time: 1.000000 (milliseconds)

Kernel Interfaces

A bitmask of the debug flags can be echoed into the interface to enable output to syslog; 0 is the default:

/proc/sys/sunrpc/nfsd_debug
/proc/sys/sunrpc/nfs_debug
/proc/sys/sunrpc/nlm_debug
/proc/sys/sunrpc/rpc_debug

Sysctl controls are registered for these interfaces, so they can be used instead of echo:

sysctl -w sunrpc.rpc_debug=1023
sysctl -w sunrpc.rpc_debug=0
sysctl -w sunrpc.nfsd_debug=1023
sysctl -w sunrpc.nfsd_debug=0

At runtime the server holds information that can be examined:

grep . /proc/net/rpc/*/content
cat /proc/fs/nfs/exports
cat /proc/net/rpc/nfsd
ls -l /proc/fs/nfsd

A rundown of /proc/net/rpc/nfsd (the userspace tool nfsstat pretty-prints this info):

* rc (reply cache): <hits> <misses> <nocache>

- hits: the client is retransmitting

- misses: an operation that requires caching

- nocache: an operation that does not require caching

* fh (filehandle): <stale> <total-lookups> <anonlookups> <dir-not-in-cache> <nodir-not-in-cache>

- stale: file handle errors

- total-lookups, anonlookups, dir-not-in-cache, nodir-not-in-cache

. these always seem to be zero

* io (input/output): <bytes-read> <bytes-written>

- bytes-read: bytes read directly from disk

- bytes-written: bytes written to disk

* th (threads): <threads> <fullcnt> <10%-20%> <20%-30%> ... <90%-100%> <100%>

DEPRECATED: All fields after <threads> are hard-coded to 0

- threads: number of nfsd threads

- fullcnt: number of times that the last 10% of threads are busy

- 10%-20%, 20%-30% ... 90%-100%: 10 numbers representing 10-20%, 20-30% to 100%

. count the number of times a given interval is busy

* ra (read-ahead): <cache-size> <10%> <20%> ... <100%> <not-found>

- cache-size: always double the number of threads

- 10%, 20% ... 100%: how deep in the cache the entry was found

- not-found: not found in the read-ahead cache

* net: <netcnt> <netudpcnt> <nettcpcnt> <nettcpconn>

- netcnt: counts every read

- netudpcnt: counts every UDP packet it receives

- nettcpcnt: counts every time it receives data from a TCP connection

- nettcpconn: counts every TCP connection it receives

* rpc: <rpccnt> <rpcbadfmt+rpcbadauth+rpcbadclnt> <rpcbadfmt> <rpcbadauth> <rpcbadclnt>

- rpccnt: counts all rpc operations

- rpcbadfmt: counts RPCs for which one of the following errors is encountered during processing:

. err_bad_dir, err_bad_rpc, err_bad_prog, err_bad_vers, err_bad_proc, err_bad

- rpcbadauth: bad authentication

. is not incremented when a machine that is not in your exports file tries to mount

- rpcbadclnt: unused

* procN (N = vers): <vs_nproc> <null> <getattr> <setattr> <lookup> <access> <readlink> <read> <write> <create> <mkdir> <symlink> <mknod> <remove> <rmdir> <rename> <link> <readdir> <readdirplus> <fsstat> <fsinfo> <pathconf> <commit>

- vs_nproc: number of procedures for NFS version

. v2: nfsproc.c, 18

. v3: nfs3proc.c, 22

. v4: nfs4proc.c, 2

- statistics: generated from NFS operations at runtime

* proc4ops: <ops> <x..y>

- ops: the definition of LAST_NFS4_OP, OP_RELEASE_LOCKOWNER = 39, plus 1 (so 40); defined in nfs4.h

- x..y: the array of nfs_opcount up to LAST_NFS4_OP (nfsdstats.nfs4_opcount[i])
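To illustrate the layout above, the hypothetical helper below extracts the thread count and the io counters from /proc/net/rpc/nfsd or from a saved copy of it. nfsstat remains the proper tool for a full report.

```bash
# Hypothetical helper (requires bash): print the nfsd thread count and
# the io counters, following the field layout described above.
nfsd_summary() {
  local file=${1:-/proc/net/rpc/nfsd}
  awk '
    $1 == "th" { print "threads:", $2 }
    $1 == "io" { print "bytes-read:", $2; print "bytes-written:", $3 }
  ' "$file"
}

# Usage:
#   nfsd_summary
```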

NFSD debug flags

/usr/include/linux/nfsd/debug.h

/*
 * knfsd debug flags
 */
#define NFSDDBG_SOCK      0x0001
#define NFSDDBG_FH        0x0002
#define NFSDDBG_EXPORT    0x0004
#define NFSDDBG_SVC       0x0008
#define NFSDDBG_PROC      0x0010
#define NFSDDBG_FILEOP    0x0020
#define NFSDDBG_AUTH      0x0040
#define NFSDDBG_REPCACHE  0x0080
#define NFSDDBG_XDR       0x0100
#define NFSDDBG_LOCKD     0x0200
#define NFSDDBG_ALL       0x7FFF
#define NFSDDBG_NOCHANGE  0xFFFF

NFS debug flags

/usr/include/linux/nfs_fs.h

/*
 * NFS debug flags
 */
#define NFSDBG_VFS          0x0001
#define NFSDBG_DIRCACHE     0x0002
#define NFSDBG_LOOKUPCACHE  0x0004
#define NFSDBG_PAGECACHE    0x0008
#define NFSDBG_PROC         0x0010
#define NFSDBG_XDR          0x0020
#define NFSDBG_FILE         0x0040
#define NFSDBG_ROOT         0x0080
#define NFSDBG_CALLBACK     0x0100
#define NFSDBG_CLIENT       0x0200
#define NFSDBG_MOUNT        0x0400
#define NFSDBG_FSCACHE      0x0800
#define NFSDBG_PNFS         0x1000
#define NFSDBG_PNFS_LD      0x2000
#define NFSDBG_STATE        0x4000
#define NFSDBG_ALL          0xFFFF

NLM debug flags

/usr/include/linux/lockd/debug.h

/*
 * Debug flags
 */
#define NLMDBG_SVC        0x0001
#define NLMDBG_CLIENT     0x0002
#define NLMDBG_CLNTLOCK   0x0004
#define NLMDBG_SVCLOCK    0x0008
#define NLMDBG_MONITOR    0x0010
#define NLMDBG_CLNTSUBS   0x0020
#define NLMDBG_SVCSUBS    0x0040
#define NLMDBG_HOSTCACHE  0x0080
#define NLMDBG_XDR        0x0100
#define NLMDBG_ALL        0x7fff

RPC debug flags

/usr/include/linux/sunrpc/debug.h

/*
 * RPC debug facilities
 */
#define RPCDBG_XPRT     0x0001
#define RPCDBG_CALL     0x0002
#define RPCDBG_DEBUG    0x0004
#define RPCDBG_NFS      0x0008
#define RPCDBG_AUTH     0x0010
#define RPCDBG_BIND     0x0020
#define RPCDBG_SCHED    0x0040
#define RPCDBG_TRANS    0x0080
#define RPCDBG_SVCXPRT  0x0100
#define RPCDBG_SVCDSP   0x0200
#define RPCDBG_MISC     0x0400
#define RPCDBG_CACHE    0x0800
#define RPCDBG_ALL      0x7fff
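The sysctl values in the Kernel Interfaces section are these flags OR-ed together. The sketch below combines any number of flags from the lists above and prints the decimal value to write. debug_mask is a hypothetical helper, and it requires bash for the hexadecimal arithmetic. For example, RPCDBG_CALL | RPCDBG_BIND is 0x0002 | 0x0020 = 34.

```bash
# Hypothetical helper (requires bash): OR together debug flag values
# given in hex (0x...) or decimal and print the result in decimal.
debug_mask() {
  local mask=0 flag
  for flag in "$@"; do
    mask=$(( mask | flag ))     # bash evaluates "0x0020" as hexadecimal
  done
  echo "$mask"
}

# debug_mask 0x0002 0x0020      # prints 34 (RPCDBG_CALL | RPCDBG_BIND)
# sysctl -w sunrpc.rpc_debug="$(debug_mask 0x0002 0x0020)"
```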

See also

- rpcdebug(8)

- http://utcc.utoronto.ca/~cks/space/blog/linux/NFSClientDebuggingBits

- http://www.novell.com/support/kb/doc.php?id=7011571

- http://stromberg.dnsalias.org/~strombrg/NFS-troubleshooting-2.html

- http://www.opensubscriber.com/message/nfs@lists.sourceforge.net/7833588.html

Содержание

- NFS/Troubleshooting

- Server-side issues

- exportfs: /etc/exports:2: syntax error: bad option list

- exportfs: requires fsid= for NFS export

- Group/GID permissions issues

- «Permission denied» when trying to write files as root

- «RPC: Program not registered» when showmount -e command issued

- UDP mounts not working

- Timeout with big directories

- Client-side issues

- mount.nfs4: No such device

- mount.nfs4: Invalid argument

- mount.nfs4: Network is unreachable

- mount.nfs4: an incorrect mount option was specified

- Unable to connect from OS X clients

- Unreliable connection from OS X clients

- Intermittent client freezes when copying large files

- mount.nfs: Operation not permitted

- NFSv4

- NFSv3 and earlier

- mount.nfs: Protocol not supported

- Permissions issues

- Problems with Vagrant and synced_folders

- Performance issues

- Diagnose the problem

- Close-to-open/flush-on-close

- The nocto mount option

- The async export option

- Buffer cache size and MTU

- Debugging

- Using rpcdebug

- Using mountstats

- Kernel Interfaces

- Thread: NFS mount gives «mount.nfs: Protocol not supported»

- NFS mount gives «mount.nfs: Protocol not supported»

- Re: NFS mount gives «mount.nfs: Protocol not supported»

- Re: NFS mount gives «mount.nfs: Protocol not supported»

- Re: NFS mount gives «mount.nfs: Protocol not supported»

- [SOLVED] mount.nfs: Protocol not supported

- Andrey G

- czechsys

- Andrey G

- Andrey G

- Gerhard W. Recher

- Andrey G

- Gerhard W. Recher

NFS/Troubleshooting

Dedicated article for common problems and solutions.

Server-side issues

exportfs: /etc/exports:2: syntax error: bad option list

Make sure to delete all space from the option list in /etc/exports .

exportfs: requires fsid= for NFS export

As not all filesystems are stored on devices and not all filesystems have UUIDs (e.g. FUSE), it is sometimes necessary to explicitly tell NFS how to identify a filesystem. This is done with the fsid option:

Group/GID permissions issues

If NFS shares mount fine, and are fully accessible to the owner, but not to group members; check the number of groups that user belongs to. NFS has a limit of 16 on the number of groups a user can belong to. If you have users with more than this, you need to enable the manage-gids start-up flag on the NFS server:

«Permission denied» when trying to write files as root

- If you need to mount shares as root, and have full r/w access from the client, add the no_root_squash option to the export in /etc/exports :

- You must also add no_root_squash to the first line in /etc/exports :

«RPC: Program not registered» when showmount -e command issued

Make sure that nfs-server.service and rpcbind.service are running on the server site, see systemd. If they are not, start and enable them.

Also make sure NFSv3 is enabled. showmount does not work with NFSv4-only servers.

UDP mounts not working

nfs-utils disabled serving NFS over UDP in version 2.2.1. Arch core updated to 2.3.1 on 21 Dec 2017 (skipping over 2.2.1.) If UDP stopped working then, add udp=y under [nfsd] in /etc/nfs.conf . Then restart nfs-server.service .

Timeout with big directories

Since nfs-utils version 1.0.x, every subdirectory is checked for permissions. This can lead to timeout on directories with a «large» number of subdirectories, even a few hundreds.

To disable this behaviour, add the option no_subtree_check to /etc/exports to the share directory.

Client-side issues

mount.nfs4: No such device

Make sure the nfsd kernel module has been loaded.

mount.nfs4: Invalid argument

Enable and start nfs-client.target and make sure the appropriate daemons (nfs-idmapd, rpc-gssd, etc) are running on the server.

mount.nfs4: Network is unreachable

Users making use of systemd-networkd or NetworkManager might notice NFS mounts are not mounted when booting.

Force the network to be completely configured by enabling systemd-networkd-wait-online.service or NetworkManager-wait-online.service . This may slow down the boot-process because fewer services run in parallel.

mount.nfs4: an incorrect mount option was specified

This can happen if using the sec=krb5 option without nfs-client.target and/or rpc-gssd.service running. Starting and enabling those services should resolve the issue.

Unable to connect from OS X clients

When trying to connect from an OS X client, you will see that everything is ok in the server logs, but OS X will refuse to mount your NFS share. You can do one of two things to fix this:

- On the NFS server, add the insecure option to the share in /etc/exports and re-run exportfs -r .

- On the OS X client, add the resvport option to the mount command line. You can also set resvport as a default client mount option in /etc/nfs.conf :

Using the default client mount option should also affect mounting the share from Finder via «Connect to Server. «.

Unreliable connection from OS X clients

OS X’s NFS client is optimized for OS X Servers and might present some issues with Linux servers. If you are experiencing slow performance, frequent disconnects and problems with international characters edit the default mount options by adding the line nfs.client.mount.options = intr,locallocks,nfc to /etc/nfs.conf on your Mac client. More information about the mount options can be found in the OS X mount_nfs man page.

Intermittent client freezes when copying large files

If you copy large files from your client machine to the NFS server, the transfer speed is very fast, but after some seconds the speed drops and your client machine intermittently locks up completely for some time until the transfer is finished.

Try adding sync as a mount option on the client (e.g. in /etc/fstab ) to fix this problem.

mount.nfs: Operation not permitted

NFSv4

If you use Kerberos ( sec=krb5* ), make sure the client and server clocks are correct. Using ntpd or systemd-timesyncd is recommended. Also, check that the canonical name for the server as resolved on the client (see Domain name resolution) matches the name in the server’s NFS principal.

NFSv3 and earlier

nfs-utils versions 1.2.1-2 or higher use NFSv4 by default, resulting in NFSv3 shares failing on upgrade. The problem can be solved by using either mount option ‘vers=3’ or ‘nfsvers=3’ on the command line:

mount.nfs: Protocol not supported

This error occurs when you include the export root in the path of the NFS source. For example:

Use the relative path instead:

Permissions issues

If you find that you cannot set the permissions on files properly, make sure the user/user group are both on the client and server.

If all your files are owned by nobody , and you are using NFSv4, on both the client and server, you should ensure that the nfs-idmapd.service has been started.

On some systems detecting the domain from FQDN minus hostname does not seem to work reliably. If files are still showing as nobody after the above changes, edit /etc/idmapd.conf , ensure that Domain is set to FQDN minus hostname . For example:

Problems with Vagrant and synced_folders

If you get an error about an unsupported protocol, you need to enable NFS over UDP on your host (or make Vagrant use NFS over TCP). See the UDP mounts not working section.

If Vagrant scripts are unable to mount folders over NFS, installing the net-tools package may solve the issue.

Performance issues

This NFS Howto page has some useful information regarding performance. Here are some further tips:

Diagnose the problem

- Htop should be your first port of call. The most obvious symptom will be a maxed-out CPU.

- Press F2, and under "Display options", enable "Detailed CPU time". Press F1 for an explanation of the colours used in the CPU bars. In particular, is the CPU spending most of its time responding to IRQs, or in Wait-IO (wio)?

Close-to-open/flush-on-close

Symptoms: Your clients are writing many small files. The server CPU is not maxed out, but there is very high wait-IO, and the server disk seems to be churning more than you might expect.

In order to ensure data consistency across clients, the NFS protocol requires that the client’s cache is flushed (all data is pushed to the server) whenever a file is closed after writing. Because the server is not allowed to buffer disk writes (if it crashes, the client will not realise the data was not written properly), the data is written to disk immediately before the client’s request is completed. When you are writing lots of small files from the client, this means that the server spends most of its time waiting for small files to be written to its disk, which can cause a significant reduction in throughput.

See this excellent article or the nfs manpage for more details on the close-to-open policy. There are several approaches to solving this problem:

The nocto mount option

If all of the following conditions are satisfied:

- The export you have mounted on the client is only going to be used by the one client.

- It does not matter too much if a file written on one client does not immediately appear on other clients.

- It does not matter if a file is lost when the client crashes shortly after writing it, even though the client believed the file had been saved.

Use the nocto mount option, which will disable the close-to-open behavior.
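After remounting, you can confirm that the option actually took effect. The kernel lists the active options of each mount in /proc/mounts. A minimal sketch, assuming a hypothetical mount point /mnt/share, looks the mount up there. Note that /proc/mounts escapes spaces in paths as \040, and this sketch does not decode them.

```python
from __future__ import annotations


def nfs_mount_options(proc_mounts: str, mountpoint: str) -> list[str]:
    """Options of the NFS mount at mountpoint, as listed in /proc/mounts."""
    found: list[str] | None = None
    for line in proc_mounts.splitlines():
        fields = line.split()
        # Fields: device, mount point, fs type, options, dump, pass.
        if len(fields) >= 4 and fields[1] == mountpoint and fields[2].startswith("nfs"):
            found = fields[3].split(",")  # keep the last match: it is the top-most mount
    if found is None:
        raise LookupError(f"no NFS mount at {mountpoint}")
    return found
```

On a live client, pass the contents of /proc/mounts and check whether "nocto" is in the returned list.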

The async export option

Does your situation match these conditions?

- It is important that when a file is closed after writing on one client, it is:

  - Immediately visible on all the other clients.

  - Safely stored on the server, even if the client crashes immediately after closing the file.

- It is not important to you that if the server crashes:

  - You may lose the files that were most recently written by clients.

  - When the server is restarted, the clients will believe their recent files exist, even though they were actually lost.

In this situation, you can use async instead of sync in the server’s /etc/exports file for those specific exports. See the exports manual page for details. In this case, it does not make sense to use the nocto mount option on the client.
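On the server, the change amounts to swapping sync for async in the export's option list. Below is a minimal sketch that rewrites a single /etc/exports line; the path and client network are hypothetical. After editing the real file, running exportfs -ra re-exports everything with the new options.

```python
import re


def make_async(exports_line: str) -> str:
    """Replace sync with async in every (options) group of an /etc/exports line."""
    def fix(match):
        opts = [o for o in match.group(1).split(",") if o]
        opts = ["async" if o == "sync" else o for o in opts]
        if "async" not in opts:
            opts.append("async")
        return "(" + ",".join(opts) + ")"

    return re.sub(r"\(([^)]*)\)", fix, exports_line)


if __name__ == "__main__":
    # Hypothetical export, for illustration only.
    print(make_async("/srv/share 192.168.1.0/24(rw,sync,no_subtree_check)"))
```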

Buffer cache size and MTU

Symptoms: High kernel or IRQ CPU usage, a very high packet count through the network card.

This is a trickier optimisation. Make sure this is definitely the problem before spending too much time on this. The default values are usually fine for most situations.

See this article for information about I/O buffering in NFS. Essentially, data is accumulated into buffers before being sent. The size of the buffer will affect the way data is transmitted over the network. The Maximum Transmission Unit (MTU) of the network equipment will also affect throughput, as the buffers need to be split into MTU-sized chunks before they are sent over the network. If your buffer size is too big, the kernel or hardware may spend too much time splitting it into MTU-sized chunks. If the buffer size is too small, there will be overhead involved in sending a very large number of small packets. You can use the rsize and wsize mount options on the client to alter the buffer cache size. To achieve the best throughput, you need to experiment and discover the best values for your setup.

It is possible to change the MTU of many network cards. If your clients are on a separate subnet (e.g. for a Beowulf cluster), it may be safe to configure all of the network cards to use a high MTU. This should be done in very-high-bandwidth environments.
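A rough calculation shows how much the MTU matters: divide the rsize/wsize buffer by the TCP payload per packet. This sketch assumes 40 bytes of IPv4 and TCP headers per packet and ignores RPC/NFS headers, so the counts are approximations, not measurements.

```python
import math

IPV4_TCP_HEADERS = 40  # assumption: IPv4 + TCP headers with no options


def packets_per_buffer(buffer_bytes: int, mtu: int) -> int:
    """Approximate number of packets needed to carry one rsize/wsize buffer over TCP."""
    payload = mtu - IPV4_TCP_HEADERS
    if payload <= 0:
        raise ValueError("MTU too small")
    return math.ceil(buffer_bytes / payload)


if __name__ == "__main__":
    for mtu in (1500, 9000):  # standard Ethernet vs. jumbo frames
        for size in (32768, 131072, 1048576):
            print(f"MTU {mtu}: rsize/wsize {size} -> ~{packets_per_buffer(size, mtu)} packets")
```

The arithmetic only explains why a high MTU cuts the packet count. Whether a given rsize/wsize value is actually faster still has to be measured on your own network.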

See NFS#Performance tuning for more information.

Debugging

Using rpcdebug

Using rpcdebug is the easiest way to manipulate the kernel interfaces in place of echoing bitmasks to /proc.

| Option | Description |
|---|---|
| -c | Clear the given debug flags |
| -s | Set the given debug flags |
| -m module | Specify which module’s flags to set or clear. |
| -v | Increase the verbosity of rpcdebug’s output |
| -h | Print a help message and exit. When combined with the -v option, also prints the available debug flags. |

For the -m option, the available modules are:

| Module | Description |
|---|---|
| nfsd | The NFS server |
| nfs | The NFS client |
| nlm | The Network Lock Manager, in either an NFS client or server |
| rpc | The Remote Procedure Call module, in either an NFS client or server |
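The two tables combine into commands such as rpcdebug -m nfs -s all. The Python helper below is a sketch: it builds those command lines without running them, and it rejects module names outside the four listed above. The helper name is illustrative. Running the resulting command requires root.

```python
from __future__ import annotations

import shlex

RPCDEBUG_MODULES = {"nfsd", "nfs", "nlm", "rpc"}


def rpcdebug_argv(module: str, flags: list[str], clear: bool = False) -> list[str]:
    """Build an rpcdebug command that sets (-s) or clears (-c) flags for one module."""
    if module not in RPCDEBUG_MODULES:
        raise ValueError(f"unknown rpcdebug module: {module}")
    if not flags:
        raise ValueError("at least one flag is required")
    return ["rpcdebug", "-m", module, "-c" if clear else "-s", *flags]


if __name__ == "__main__":
    print(shlex.join(rpcdebug_argv("nfs", ["all"])))              # enable all NFS client flags
    print(shlex.join(rpcdebug_argv("nfs", ["all"], clear=True)))  # and switch them off again
```

Clearing the flags once you have the log output you need keeps the journal from filling up with debug messages.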

Once the flags are set you can tail the journal for the debug output, usually by running journalctl -fl as root or similar.

Using mountstats

The nfs-utils package contains the mountstats tool, which can retrieve a lot of statistics about NFS mounts, including average timings and packet size.

Kernel Interfaces

A bitmask of the debug flags can be echoed into the interface to enable output to syslog; 0 is the default:

Sysctl controls are registered for these interfaces, so they can be used instead of echo:
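For orientation, the per-module interfaces are files under /proc/sys/sunrpc (rpc_debug, nfs_debug, nfsd_debug, nlm_debug), and the matching sysctl keys are sunrpc.<module>_debug. The minimal sketch below maps a module name to both and parses a mask as read from the file. It assumes the kernel may print the mask in hex (0x...) or in decimal, so the parser accepts either. Writing a new mask requires root and is left out.

```python
from pathlib import PurePosixPath

DEBUG_MODULES = ("rpc", "nfs", "nfsd", "nlm")


def debug_proc_path(module: str) -> PurePosixPath:
    """Path of the debug bitmask file for one sunrpc module."""
    if module not in DEBUG_MODULES:
        raise ValueError(f"unknown module: {module}")
    return PurePosixPath("/proc/sys/sunrpc") / f"{module}_debug"


def debug_sysctl_key(module: str) -> str:
    """sysctl key equivalent to debug_proc_path(module)."""
    if module not in DEBUG_MODULES:
        raise ValueError(f"unknown module: {module}")
    return f"sunrpc.{module}_debug"


def parse_debug_mask(text: str) -> int:
    """Parse a mask as read from the proc file, e.g. '0x0000' or '0'."""
    return int(text.strip(), 0)  # base 0 accepts both hex (0x...) and decimal
```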

At runtime the server holds information that can be examined:

A rundown of /proc/net/rpc/nfsd (the userspace tool nfsstat pretty-prints this info):
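If you want the raw numbers rather than nfsstat's formatting, the file is a series of lines. Each line starts with a key, for example rc (reply cache), th (threads), io, net, rpc, or procN for per-operation counters, followed by numbers. The minimal parsing sketch below assumes that in the procN lines the first number is the count of counters that follow. The sample text in the test is made up.

```python
from __future__ import annotations


def _number(token: str) -> int | float | str:
    """Convert a token to int or float when possible, else keep the string."""
    for convert in (int, float):
        try:
            return convert(token)
        except ValueError:
            pass
    return token


def parse_nfsd_stats(text: str) -> dict[str, list[int | float | str]]:
    """Map each line's leading key (rc, th, io, proc3, ...) to its values."""
    stats: dict[str, list[int | float | str]] = {}
    for line in text.splitlines():
        parts = line.split()
        if parts:
            stats[parts[0]] = [_number(p) for p in parts[1:]]
    return stats


def proc_counters(stats: dict[str, list[int | float | str]], key: str) -> list[int | float | str]:
    """For a procN line, return the counters after the leading count field."""
    count, *counters = stats[key]
    return counters[: int(count)]
```

On the server, feed it the contents of /proc/net/rpc/nfsd and compare two snapshots taken a few seconds apart to see which operations are busy.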


Thread: NFS mount gives "mount.nfs: Protocol not supported"


The old machine, running Ubuntu 13.04, can mount the NFS share just fine.

The new machine, running 13.10, with an identical /etc/fstab (or command-line mount) gives the result "mount.nfs: Protocol not supported".

mount -v gives the following on both machines:

The old machine, which works, continues to mount anyway. The new one does not.

Re: NFS mount gives "mount.nfs: Protocol not supported"

Did you install the nfs-common package? That’s a requirement to use the computer as an NFS client. It does not ship with Ubuntu by default.

If you ask for help, do not abandon your request. Please have the courtesy to check for responses and thank the people who helped you.


Re: NFS mount gives "mount.nfs: Protocol not supported"

That’s a rather weird error. I presume you are exporting /home in lagret’s /etc/exports, and /mnt/lagret exists?

What are the options in /etc/exports? Do they include "insecure", which lets the client use a source port > 1023? Also, in the "text-based options" line it seems to be trying to use NFSv4, but the rest of the output talks about NFSv3. Are you also trying to force a TCP connection?

Can we see both the entry in /etc/exports and in /etc/fstab? What does syslog report on the server?

Last edited by SeijiSensei; October 20th, 2013 at 09:50 PM.



[SOLVED] mount.nfs: Protocol not supported

Posted by Andrey G, with replies from czechsys and Gerhard W. Recher.


#1

Hello.

I am trying to connect my NAS folder to Proxmox as an NFS share.

NAS: Thecus N2310. It supports sharing folders via NFS. It is configured via the web interface, but a Linux command line is also available. (IP 192.168.10.3)

Proxmox:

proxmox-ve: 4.4-94 (running kernel: 4.4.35-1-pve)

pve-manager: 4.4-18 (running version: 4.4-18/ef2610e8)

pve-kernel-4.4.35-1-pve: 4.4.35-77

pve-kernel-4.4.21-1-pve: 4.4.21-71

pve-kernel-4.4.76-1-pve: 4.4.76-94

lvm2: 2.02.116-pve3

corosync-pve: 2.4.2-2~pve4+1

libqb0: 1.0.1-1

pve-cluster: 4.0-52

qemu-server: 4.0-111

pve-firmware: 1.1-11

libpve-common-perl: 4.0-96

libpve-access-control: 4.0-23

libpve-storage-perl: 4.0-76

pve-libspice-server1: 0.12.8-2

vncterm: 1.3-2

pve-docs: 4.4-4

pve-qemu-kvm: 2.7.1-4

pve-container: 1.0-101

pve-firewall: 2.0-33

pve-ha-manager: 1.0-41

ksm-control-daemon: 1.2-1

glusterfs-client: 3.5.2-2+deb8u3

lxc-pve: 2.0.7-4

lxcfs: 2.0.6-pve1

criu: 1.6.0-1

novnc-pve: 0.5-9

smartmontools: 6.5+svn4324-1~pve80

rpcinfo -p 192.168.10.3

program vers proto port service

100000 4 tcp 111 portmapper

100000 3 tcp 111 portmapper

100000 2 tcp 111 portmapper

100000 4 udp 111 portmapper

100000 3 udp 111 portmapper

100000 2 udp 111 portmapper

100021 1 udp 37725 nlockmgr

100021 3 udp 37725 nlockmgr

100021 4 udp 37725 nlockmgr

100021 1 tcp 51125 nlockmgr

100021 3 tcp 51125 nlockmgr

100021 4 tcp 51125 nlockmgr

100003 2 tcp 2049 nfs

100003 3 tcp 2049 nfs

100003 4 tcp 2049 nfs

100003 2 udp 2049 nfs

100003 3 udp 2049 nfs

100003 4 udp 2049 nfs

100005 1 udp 20048 mountd

100005 1 tcp 20048 mountd

100005 2 udp 20048 mountd

100005 2 tcp 20048 mountd

100005 3 udp 20048 mountd

100005 3 tcp 20048 mountd

100024 1 udp 38468 status

100024 1 tcp 42577 status

pvesm nfsscan 192.168.10.3

/raid0/data/_NAS_NFS_Exports_/test2 192.168.10.90

/raid0/data/_NAS_NFS_Exports_/010-anessi *

/raid0/data/_NAS_NFS_Exports_ *

When I try to add the NAS storage via the web GUI, I receive the mount error: mount.nfs: Protocol not supported (500).

When I try to mount the NFS share manually, I get the same:

mount.nfs 192.168.10.3:/raid0/data/_NAS_NFS_Exports_/010-anessi /mnt/pve/nas -wv -o vers=3

mount.nfs: timeout set for Thu Sep 21 12:29:56 2017

mount.nfs: trying text-based options ‘vers=3,addr=192.168.10.3’

mount.nfs: prog 100003, trying vers=3, prot=6

mount.nfs: trying 192.168.10.3 prog 100003 vers 3 prot TCP port 2049

mount.nfs: prog 100005, trying vers=3, prot=17

mount.nfs: trying 192.168.10.3 prog 100005 vers 3 prot UDP port 20048

mount.nfs: mount(2): Protocol not supported

mount.nfs: Protocol not supported

But from an Ubuntu host I can mount this share successfully.

What am I doing wrong with Proxmox?

Last edited: Sep 21, 2017


#2

You are mounting as nfsv3. Check nfs server versions support on Thecus.

#3

It supports both 3 and 4. See rpcinfo spoiler.
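Reading supported versions out of an rpcinfo listing by eye is error-prone. The minimal sketch below collects, per transport, the versions registered for program 100003 (nfs). It accepts both the rpcinfo -p layout (program, vers, proto, port, service) and the plain rpcinfo layout (program, version, netid, address, service, owner), because in both the third column is the transport. An advertised version only shows what the server registered; it does not prove the client can use it.

```python
from __future__ import annotations

NFS_PROGRAM = "100003"


def nfs_versions(rpcinfo_output: str) -> dict[str, set[int]]:
    """Map transport (tcp, udp, ...) to the NFS versions registered for it."""
    versions: dict[str, set[int]] = {}
    for line in rpcinfo_output.splitlines():
        fields = line.split()
        # The header line and other RPC programs (portmapper, mountd, ...) are skipped.
        if len(fields) >= 3 and fields[0] == NFS_PROGRAM and fields[1].isdigit():
            versions.setdefault(fields[2], set()).add(int(fields[1]))
    return versions
```

Fed the listing above, it would report versions 2, 3 and 4 on both tcp and udp, which matches the reply that the server supports NFSv3 and NFSv4.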


#4

Found in /var/log/syslog

nfsv4: disagrees about version of symbol module_layout

same for nfsv3

any ideas?

#5

Hi

This is typically an outdated kernel; the message appears when loading nfs.

modinfo should read:

Code:

modinfo nfs

filename: /lib/modules/4.10.17-3-pve/kernel/fs/nfs/nfs.ko

license: GPL

author: Olaf Kirch <okir@monad.swb.de>

alias: nfs4

alias: fs-nfs4

alias: fs-nfs

srcversion: A4D41D66D76225578FFA720

depends: fscache,sunrpc,lockd

intree: Y

vermagic: 4.10.17-3-pve SMP mod_unload modversions

parm: callback_tcpport:portnr

parm: callback_nr_threads:Number of threads that will be assigned to the NFSv4 callback channels. (ushort)

parm: nfs_idmap_cache_timeout:int

parm: nfs4_disable_idmapping:Turn off NFSv4 idmapping when using 'sec=sys' (bool)

parm: max_session_slots:Maximum number of outstanding NFSv4.1 requests the client will negotiate (ushort)

parm: max_session_cb_slots:Maximum number of parallel NFSv4.1 callbacks the client will process for a given server (ushort)

parm: send_implementation_id:Send implementation ID with NFSv4.1 exchange_id (ushort)

parm: nfs4_unique_id:nfs_client_id4 uniquifier string (string)

parm: recover_lost_locks:If the server reports that a lock might be lost, try to recover it risking data corruption. (bool)

parm: enable_ino64:bool

parm: nfs_access_max_cachesize:NFS access maximum total cache length (ulong)


#6

Mine is:

Code:

filename: /lib/modules/4.4.35-1-pve/kernel/fs/nfs/nfs.ko

license: GPL

author: Olaf Kirch <okir@monad.swb.de>

alias: nfs4

alias: fs-nfs4

alias: fs-nfs

srcversion: E8CE5FE61CAC2E37CFFD876

depends: fscache,sunrpc,lockd

intree: Y

vermagic: 4.4.35-1-pve SMP mod_unload modversions

parm: callback_tcpport:portnr

parm: nfs_idmap_cache_timeout:int

parm: nfs4_disable_idmapping:Turn off NFSv4 idmapping when using 'sec=sys' (bool)

parm: max_session_slots:Maximum number of outstanding NFSv4.1 requests the client will negotiate (ushort)

parm: send_implementation_id:Send implementation ID with NFSv4.1 exchange_id (ushort)

parm: nfs4_unique_id:nfs_client_id4 uniquifier string (string)

parm: recover_lost_locks:If the server reports that a lock might be lost, try to recover it risking data corruption. (bool)

parm: enable_ino64:bool

parm: nfs_access_max_cachesize:NFS access maximum total cache length (ulong)

How can I upgrade to the newest NFS version?

#7

Strange, I bet your modinfo would issue an error.

Use dmesg to find out which module is complaining "disagrees about version of symbol module_layout".

Perhaps an "apt-get update && apt-get dist-upgrade" will solve your problem.
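"disagrees about version of symbol module_layout" generally means a module on disk was built for a different kernel than the one running. This commonly happens after a kernel package upgrade without a reboot. A quick first check, sketched in Python below, compares the release in modinfo's vermagic field with the running kernel release (what uname -r prints). Matching strings do not prove the symbol versions match, so treat the result as a hint, not a diagnosis.

```python
from __future__ import annotations

import platform


def modinfo_field(modinfo_output: str, name: str) -> str | None:
    """Value of the first 'name: value' line in modinfo output, or None."""
    for line in modinfo_output.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == name:
            return value.strip()
    return None


def built_for_running_kernel(modinfo_output: str, running: str | None = None) -> bool:
    """True if vermagic's kernel release equals the running (or given) release."""
    vermagic = modinfo_field(modinfo_output, "vermagic")
    if not vermagic:
        raise ValueError("no vermagic field in modinfo output")
    release = running if running is not None else platform.release()
    return vermagic.split()[0] == release
```

Since the log in this thread named nfsv4 and nfsv3, it is worth running the check against modinfo nfsv4 and modinfo nfsv3 output as well as modinfo nfs.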

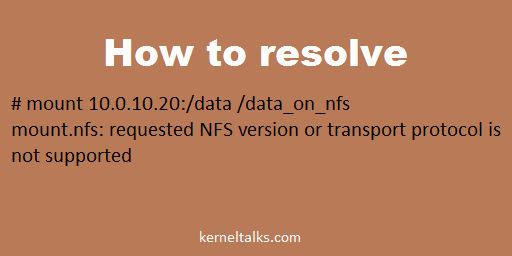

Troubleshooting error ‘mount.nfs: requested NFS version or transport protocol is not supported’ and how to resolve it.

Another troubleshooting article aimed at a specific error and how to solve it. In this article, we will see how to resolve the error ‘mount.nfs: requested NFS version or transport protocol is not supported’ seen on an NFS client while trying to mount an NFS share.

# mount 10.0.10.20:/data /data_on_nfs
mount.nfs: requested NFS version or transport protocol is not supported

Sometimes you see the error mount.nfs: requested NFS version or transport protocol is not supported when you try to mount an NFS share on an NFS client. There are a couple of reasons for this error:

- NFS services are not running on NFS server

- NFS utils not installed on the client

- NFS service hung on NFS server

NFS services at the NFS server can be down or hung due to multiple reasons like server utilization, server reboot, etc.


Solution 1:

To get rid of this error and successfully mount your share follow the below steps.

Login to the NFS server and check the NFS services status.

[root@kerneltalks]# service nfs status
rpc.svcgssd is stopped
rpc.mountd is stopped
nfsd is stopped
rpc.rquotad is stopped

In the above output you can see the NFS services are stopped on the server. Start them.

[root@kerneltalks]# service nfs start
Starting NFS services: [ OK ]
Starting NFS quotas: [ OK ]
Starting NFS mountd: [ OK ]
Starting NFS daemon: [ OK ]
Starting RPC idmapd: [ OK ]

Depending on your Linux distribution, you might want to check the nfs-server or nfsserver service as well.

Now try to mount the NFS share on the client again. You should be able to mount it using the same command we saw earlier.
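Before retrying the mount, it can help to confirm from the client that the server answers at all. The minimal sketch below tries a TCP connection to a port. You might check the NFS port (2049) and the portmapper (111); an NFSv4-only server may not need 111. A successful connection only proves that something accepts connections on that port, not that the NFS version you ask for is enabled.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # connection refused, host unreachable, or timed out
        return False
```

For the example above, you would call tcp_port_open("10.0.10.20", 2049) and tcp_port_open("10.0.10.20", 111) from the client.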

Solution 2 :

If that doesn’t work for you, try installing the nfs-utils package on your server (and on the client, if it is missing there), and you should get past this error.

Solution 3 :

Open the file /etc/sysconfig/nfs and check the parameters below:

# Turn off v4 protocol support
#RPCNFSDARGS="-N 4"
# Turn off v2 and v3 protocol support
#RPCNFSDARGS="-N 2 -N 3"

Removing the leading hash (#) from one of these RPCNFSDARGS lines turns off support for the listed NFS versions, so clients using those versions won’t be able to mount shares from the server. If either line is uncommented, comment it out again, restart the NFS server service, and then retry the mount on the client.
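To see which versions such a file actually turns off, you can parse its uncommented RPCNFSDARGS assignments. The minimal sketch below understands the rpc.nfsd spellings -N VERSION, -NVERSION and --no-nfs-version VERSION. As in a shell, a later assignment replaces an earlier one. On newer distributions these settings may live in /etc/nfs.conf instead, which this sketch does not read.

```python
from __future__ import annotations

import shlex


def disabled_nfs_versions(sysconfig_text: str) -> set[str]:
    """NFS versions turned off by the effective RPCNFSDARGS line, e.g. {'2', '3'}."""
    disabled: set[str] = set()
    for raw in sysconfig_text.splitlines():
        line = raw.strip()
        if not line.startswith("RPCNFSDARGS="):
            continue  # commented-out lines and other variables are ignored
        disabled = set()  # a later assignment replaces an earlier one
        value = line.split("=", 1)[1]
        # Undo the shell quoting, then split the quoted string into arguments.
        args = [a for token in shlex.split(value, comments=True) for a in token.split()]
        i = 0
        while i < len(args):
            arg = args[i]
            if arg in ("-N", "--no-nfs-version") and i + 1 < len(args):
                disabled.add(args[i + 1])
                i += 2
                continue
            if arg.startswith("--no-nfs-version="):
                disabled.add(arg.split("=", 1)[1])
            elif arg.startswith("-N") and len(arg) > 2:
                disabled.add(arg[2:])
            i += 1
    return disabled
```

With both example lines left commented out, as shipped, the function returns an empty set, meaning rpc.nfsd keeps its default versions.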


I am running nfs4 only on both server and client. I am not sure what I changed recently, but I can no longer mount an exported file system which I used to be able to mount. I have just upgraded the client machine from Fedora 31 to 32… but I swear nfs was still working immediately after the upgrade.

At the client end I do:

# mount /foo

mount.nfs4: Protocol not supported

The /etc/fstab has not been changed. There is nothing already mounted at /foo. I get the same result issuing mount.nfs4 by hand.

Using wireshark at the client I can see absolutely nothing being sent to the nfs server (or being received from same). Using tcpdump I can see nothing at the server end, from before the client is rebooted to after the attempts to mount. So I’m guessing this is a client issue?

I can see nothing in the logs. I have failed to find anything to wind up the logging level for client-side mounting.

Can anyone point me at ways to discover what the client is doing (or not doing) ?

As requested…

dmesg mentions of nfs|NFS:

[ 7.987799] systemd[1]: Starting Preprocess NFS configuration convertion...

[ 7.993220] systemd[1]: nfs-convert.service: Succeeded.

[ 7.993342] systemd[1]: Finished Preprocess NFS configuration convertion.

[ 12.484481] RPC: Registered tcp NFSv4.1 backchannel transport module.

And fstab on the client:

foo:/ /foo nfs4 noauto,sec=sys,proto=tcp,clientaddr=xx.xx.xx.xx,port=1001 0 0

The client has more than one IP. The server wishes to obscure the fact that it offers nfs. To make that easier it does nfs4 only. FWIW netstat on the server gives (edited for clarity):

Prot R-Q S-Q Local Address Foreign Address State PID/Program

tcp 0 0 xx.xx.xx.xx:1001 0.0.0.0:* LISTEN -

tcp 0 0 0.0.0.0:111 0.0.0.0:* LISTEN 1/systemd

tcp 0 0 0.0.0.0:1002 0.0.0.0:* LISTEN 815/rpc.statd

I thought nfs4 required just the one port… but systemd seems to wake up port 111 anyway. There is also rpc.statd.

The configuration of the server used to work… Also, the client is not sending anything to the server at all on any port!

And the exports on the server:

/ bar(fsid=0,no_subtree_check,sec=sys,rw,no_root_squash,insecure,crossmnt)

Where bar is in the server’s /etc/hosts file.

I did showmount -e foo on the client:

clnt_create: RPC: Program not registered

Wireshark tells me that the client poked the server on port 111 asking for MOUNT (100005) Version 3 tcp and received a ‘no’ response. The poke for udp received the same answer. Since the server is configured nfs4 only, I guess this is not a surprise? I note that showmount does not ask for Version 4… but I don’t know if you’d expect it to?
