I want to download an image file from a URL using the Python module «urllib.request». This works for some websites (e.g. mangastream.com) but fails for another (mangadoom.co) with the error «HTTP Error 403: Forbidden». What could be causing the failure in the latter case, and how can I fix it?

I am using Python 3.4 on OS X.

import urllib.request

# does not work

img_url = 'http://mangadoom.co/wp-content/manga/5170/886/005.png'

img_filename = 'my_img.png'

urllib.request.urlretrieve(img_url, img_filename)

The error message ends with:

...

HTTPError: HTTP Error 403: Forbidden

However, it works for another website:

# works

img_url = 'http://img.mangastream.com/cdn/manga/51/3140/006.png'

img_filename = 'my_img.png'

urllib.request.urlretrieve(img_url, img_filename)

I have tried the solutions from the posts below, but none of them work for mangadoom.co.

Downloading a picture via urllib and python

How do I copy a remote image in python?

The solution in the following post does not fit either, because my goal is to download an image.

urllib2.HTTPError: HTTP Error 403: Forbidden

Non-Python solutions are also welcome. Any suggestions will be much appreciated.

asked Jan 9, 2016 at 9:52


This website is blocking the user-agent used by urllib, so you need to change it in your request. Unfortunately I don’t think urlretrieve supports this directly.

I recommend the requests library instead. The code becomes:

import requests

import shutil

r = requests.get('http://mangadoom.co/wp-content/manga/5170/886/005.png', stream=True)

if r.status_code == 200:

    with open("img.png", 'wb') as f:

        r.raw.decode_content = True

        shutil.copyfileobj(r.raw, f)

Note that this website does not seem to block the requests user agent. If it needs to be changed, that is easy:

r = requests.get('http://mangadoom.co/wp-content/manga/5170/886/005.png',

                 stream=True, headers={'User-agent': 'Mozilla/5.0'})

Also relevant : changing user-agent in urllib

answered Jan 9, 2016 at 15:07

You can build an opener. Here’s the example:

import urllib.request

opener=urllib.request.build_opener()

opener.addheaders=[('User-Agent','Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/36.0.1941.0 Safari/537.36')]

urllib.request.install_opener(opener)

url=''

local=''

urllib.request.urlretrieve(url,local)

By the way, the following two snippets do the same thing:

(without an opener)

req = urllib.request.Request(url, data, hdr)

html = urllib.request.urlopen(req)

(with the opener built above)

html = opener.open(url, data, timeout)

However, we cannot pass headers when we call:

urllib.request.urlretrieve()

So in this case, we have to build and install an opener.
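As an alternative to installing a global opener, the download can also be done with a Request object and urlopen. The following is a minimal sketch, not part of the original answer: the helper names build_request and download, and the default user-agent string, are assumptions made for illustration.

```python
import shutil
import urllib.request

# Hypothetical default; any browser-like string can be substituted.
DEFAULT_UA = "Mozilla/5.0"


def build_request(url, user_agent=DEFAULT_UA):
    """Return a Request that carries a custom User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def download(url, filename, user_agent=DEFAULT_UA):
    """Stream the response body to `filename` without installing a global opener."""
    req = build_request(url, user_agent)
    with urllib.request.urlopen(req) as response, open(filename, "wb") as out:
        shutil.copyfileobj(response, out)
    return filename
```

Calling download(img_url, 'my_img.png') would then play the role of urlretrieve, with the header applied to that one request only.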

answered Apr 16, 2016 at 12:05

er.Zhu

I tried wget with the URL in a terminal and it works:

wget -O out_005.png http://mangadoom.co/wp-content/manga/5170/886/005.png

So my workaround is to use the script below, which works too.

import os

out_image = 'out_005.png'

url = 'http://mangadoom.co/wp-content/manga/5170/886/005.png'

os.system("wget -O {0} {1}".format(out_image, url))
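The same workaround can be written with subprocess, which passes the arguments as a list and so avoids shell quoting problems with unusual URLs or file names. This is a sketch under the assumption that wget is installed and on the PATH; the function names are hypothetical.

```python
import subprocess


def build_wget_command(url, out_path):
    """Return the wget invocation as an argument list (no shell involved)."""
    return ["wget", "-O", out_path, url]


def download_with_wget(url, out_path):
    """Run wget and raise CalledProcessError if it exits with a non-zero status."""
    subprocess.run(build_wget_command(url, out_path), check=True)
```

With check=True, a failed download surfaces as an exception instead of being silently ignored, which os.system does not do on its own.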

answered Jan 10, 2016 at 6:52

neobot

The urllib module, unlike the requests library, is part of the Python standard library, so using it to make HTTP requests reduces dependencies. In this article, we will discuss why urllib.error.httperror: http error 403: forbidden occurs and how to resolve it.

What is a 403 error?

The 403 error appears when a user tries to access a forbidden page, in other words, a page they are not permitted to access. 403 is the HTTP status code the webserver uses to signal that it understood the request but refuses to fulfil it. For comparison, 200 is the status code for ‘everything has worked as expected, no errors’.

ModSecurity is a web application firewall module that protects websites from attacks. It tries to determine whether requests come from a real user or from an automated bot, and it blocks requests from known spider/bot agents that try to scrape the site. Since urllib identifies itself with a user agent like Python-urllib/3.x, it is easily detected as non-human and blocked by ModSecurity.

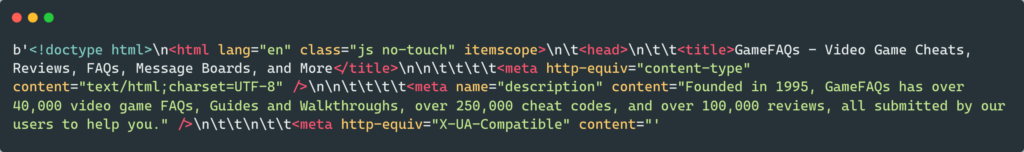

from urllib.request import Request, urlopen

url = "https://www.gamefaqs.com"

request_site = Request(url)

webpage = urlopen(request_site).read()

print(webpage[:200])

ModSecurity blocks the request and returns an HTTP error 403: forbidden error if the request was made without a valid user agent. The User-Agent is a request header whose string lets servers and network peers identify the following:

- The operating system, for instance, Windows, Linux, or macOS.

- The browser or client application making the request.

Moreover, the browser sends the user agent to every website you connect to. The User-Agent field is included in the HTTP request headers, and its value differs from browser to browser.
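To see which user agent urllib announces by default, one can inspect the default headers of a freshly built opener. This is a small sketch; the helper name default_user_agent is an assumption, and the exact version suffix depends on the interpreter in use.

```python
import urllib.request


def default_user_agent():
    """Return the User-Agent value a freshly built urllib opener would send."""
    opener = urllib.request.build_opener()
    headers = dict(opener.addheaders)
    return headers["User-agent"]


# Prints a value of the form Python-urllib/x.y for the running interpreter.
print(default_user_agent())
```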

Why do sites use security that sends 403 responses?

According to a survey, more than 50% of internet traffic comes from automated sources such as scrapers and bots, so it becomes necessary to guard against them. Moreover, scrapers tend to send many requests, and sites enforce rate limits that dictate how many requests a user can make. If the user (here, the scraper) exceeds the limit, the site returns an error, for instance, urllib.error.httperror: http error 403: forbidden.

Resolving urllib.error.httperror: http error 403: forbidden?

This error is caused by ModSecurity detecting the urllib scraping bot and blocking it. To resolve it, we have to include a user agent in our scraper, which makes it much less likely that the site will block the request. Let’s take a look at two ways to avoid urllib.error.httperror: http error 403: forbidden.

Method 1: using user-agent

from urllib.request import Request, urlopen

url = "https://www.gamefaqs.com"

request_site = Request(url, headers={"User-Agent": "Mozilla/5.0"})

webpage = urlopen(request_site).read()

print(webpage[:500])

- In the code above, we have added a new parameter called headers, which sets the User-Agent to Mozilla/5.0. The user-agent string gives the webserver details about the user’s device, OS, and browser. A browser-like value makes it less likely that the site will block the request as a bot.

- For instance, the user-agent string may tell the server that you are using the Brave browser on Linux. The server can then tailor its response accordingly.

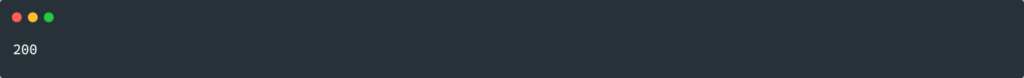

Method 2: using Session object

There are times when even a user agent won’t prevent the urllib.error.httperror: http error 403: forbidden. In that case we can use the Session object of the requests module. For instance:

import requests

url = "https://www.gamefaqs.com"

session_obj = requests.Session()

response = session_obj.get(url, headers={"User-Agent": "Mozilla/5.0"})

print(response.status_code)

The site could be using cookies as a defense mechanism against scraping, which might be against its policy: it sets cookies and expects them to be echoed back on later requests.

A Session object stores the cookies it receives and sends them back on subsequent requests.
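For readers who prefer to stay within the standard library, a comparable effect can be sketched with a cookie-aware urllib opener. This is an illustration rather than the article's method: the helper name build_cookie_opener and the default user-agent value are assumptions.

```python
import http.cookiejar
import urllib.request


def build_cookie_opener(user_agent="Mozilla/5.0"):
    """Return (opener, jar): the opener stores cookies in jar and replays them."""
    jar = http.cookiejar.CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))
    # Replace the default Python-urllib user agent for every request made
    # through this opener.
    opener.addheaders = [("User-Agent", user_agent)]
    return opener, jar
```

Requests made with opener.open(url) then share one cookie jar, much as requests made through a single Session object do.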

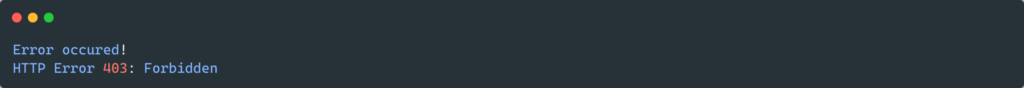

Catching urllib.error.httperror

urllib.error.HTTPError can be caught with a try-except block. A bare except clause captures any exception, including SystemExit and KeyboardInterrupt, which makes problems hard to debug, so it is better to catch HTTPError specifically. For instance:

from urllib.request import Request, urlopen

from urllib.error import HTTPError

url = "https://www.gamefaqs.com"

try:

    request_site = Request(url)

    webpage = urlopen(request_site).read()

    print(webpage[:500])

except HTTPError as e:

    print("Error occurred!")

    print(e)
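Beyond printing the exception, an HTTPError exposes the status code and reason phrase as attributes, which lets a script react differently to a 403 than to other failures. The following is a minimal sketch; the function name describe_http_error and the hint texts are hypothetical.

```python
from urllib.error import HTTPError


def describe_http_error(err):
    """Summarise an HTTPError using its status code and reason phrase."""
    if err.code == 403:
        hint = "check the User-Agent header"
    else:
        hint = "see the status code"
    return "HTTP {} {}: {}".format(err.code, err.reason, hint)
```

Inside the except block above, print(describe_http_error(e)) could replace the two print calls.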

FAQs

How to fix the 403 error in the browser?

To resolve the 403 error in the browser, try refreshing the page, rechecking the URL, clearing the browser cookies, and checking your user credentials.

Why do scraping modules often get 403 errors?

Scrapers often don’t use headers while requesting information. This results in their detection by the mod security. Hence, scraping modules often get 403 errors.

Conclusion

In this article, we covered why and when urllib.error.httperror: http error 403: forbidden can occur. We also looked at different ways to resolve the error and at how to handle it.


This example shows how to use the Python urllib, requests, and wget modules to download an image file from an image URL. The example code is simple and can also be used to download other web resources by URL.

1. Use Python urllib Module To Implement Download Image From URL Example.

- Enter the Python interactive console by running the python or python3 command in a terminal, then run the source code below in it.

>>> import urllib.request
>>>
>>> image_url = "https://www.dev2qa.com/demo/images/green_button.jpg"
>>>
>>> urllib.request.urlretrieve(image_url, 'local_image.jpg')
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 247, in urlretrieve
    with contextlib.closing(urlopen(url, data)) as fp:
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 222, in urlopen
    return opener.open(url, data, timeout)
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 531, in open
    response = meth(req, response)
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 641, in http_response
    'http', request, response, code, msg, hdrs)
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 569, in error
    return self._call_chain(*args)
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 503, in _call_chain
    result = func(*args)
  File "/Users/songzhao/opt/anaconda3/lib/python3.7/urllib/request.py", line 649, in http_error_default
    raise HTTPError(req.full_url, code, msg, hdrs, fp)
urllib.error.HTTPError: HTTP Error 403: Forbidden

- The source code above throws urllib.error.HTTPError: HTTP Error 403: Forbidden. The web server rejects the HTTPS request sent by urllib.request.urlretrieve() because it does not carry a browser-like User-Agent header (by default urllib identifies itself as Python-urllib/x.y).

- So we should change the source code above to the version below, which sends a browser-like User-Agent header to the webserver so that the HTTPS request can complete successfully.

>>> from urllib.request import urlopen, Request
>>> # Simulate a User-Agent header value.
>>> headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2228.0 Safari/537.3'}
>>>
>>> image_url = "https://www.dev2qa.com/demo/images/green_button.jpg"
>>>
>>> # Send request with simulated User-Agent header.
>>> req = Request(url=image_url, headers=headers)
>>>
>>> # Write the response body to a local file; both are closed on exit.
>>> with urlopen(req) as response, open('local_image_1.jpg', 'wb') as local_file:
...     local_file.write(response.read())
...
5486

2. Use Python requests Module To Implement Download Image From URL Example.

- Open a terminal, and run the python or python3 command to enter the Python interactive console.

- Run the example code below in that console. The example image URL is https://www.dev2qa.com/demo/images/green_button.jpg. The code downloads the image and saves it to the local file local_image.jpg.

# Import python requests, shutil module.
import requests
import shutil

# This is the image url.
image_url = "https://www.dev2qa.com/demo/images/green_button.jpg"

# Open the url image, set stream to True, this will return the stream content.
resp = requests.get(image_url, stream=True)

# Set decode_content value to True, otherwise the downloaded image file's size will be zero.
resp.raw.decode_content = True

# Open a local file in wb ( write binary ) mode and copy the response stream raw data to it.
with open('local_image.jpg', 'wb') as local_file:
    shutil.copyfileobj(resp.raw, local_file)

# Remove the image url response object.
del resp

- The source code above uses both the requests and shutil modules. You can also use the requests module alone, as in the source code below.

>>> import requests
>>>
>>> local_file = open('local_file.jpg', 'wb')
>>>
>>> image_url = "https://www.dev2qa.com/demo/images/green_button.jpg"
>>>
>>> resp = requests.get(image_url)
>>>
>>> local_file.write(resp.content)
5486
>>> local_file.close()

3. Use Python Wget Module To Implement Download Image From URL Example.

- Besides the python requests module, the python wget module can also be used to download images from a URL to a local file easily. Below are the steps for using it.

- Open a terminal and run pip show wget to check whether the python wget module has been installed or not.

- If the python wget module has not been installed, then run the command pip install wget in the terminal to install it.

$ pip install wget
Collecting wget
  Downloading https://files.pythonhosted.org/packages/47/6a/62e288da7bcda82b935ff0c6cfe542970f04e29c756b0e147251b2fb251f/wget-3.2.zip
Installing collected packages: wget
  Running setup.py install for wget ... done
Successfully installed wget-3.2

- Run python or python3 to enter the Python interactive console.

- Run the python code below in that console.

>>> # First import the python wget module.
>>> import wget
>>> image_url = 'https://www.dev2qa.com/demo/images/green_button.jpg'
>>> # Invoke the wget download method to download the specified image URL.
>>> local_image_filename = wget.download(image_url)
100% [................................................] 829882 / 829882
>>> # Print out the local image file name.
>>> local_image_filename
'green_button.jpg'


- The urllib Module in Python

- Check robots.txt to Prevent urllib HTTP Error 403 Forbidden Message

- Adding Cookie to the Request Headers to Solve urllib HTTP Error 403 Forbidden Message

- Use Session Object to Solve urllib HTTP Error 403 Forbidden Message

Today’s article explains how to deal with the exception urllib.error.HTTPError: HTTP Error 403: Forbidden, which the urllib.error module defines and the urllib.request module raises when it requests a forbidden resource.

the urllib Module in Python

The urllib Python module handles URLs over different protocols. It is popular with web scrapers who want to obtain data from a particular website.

The urllib package contains classes, methods, and functions that perform operations such as reading and parsing URLs and robots.txt files. It consists of four submodules: request, error, parse, and robotparser.

Check robots.txt to Prevent urllib HTTP Error 403 Forbidden Message

When using the urllib module to interact with servers via the request module, we might run into specific errors. One of those errors is the HTTP 403 error.

We get the urllib.error.HTTPError: HTTP Error 403: Forbidden error message from the urllib package while reading a URL. HTTP 403, the Forbidden error, is an HTTP status code indicating that the server refuses access to the requested resource.

Therefore, when we see urllib.error.HTTPError: HTTP Error 403: Forbidden, the server understood the request but decided not to process or authorize it.

To understand why the website we are accessing is not processing our request, we need to check an important file, robots.txt. Before web scraping or interacting with a website, it is often advised to review this file to know what to expect and not face any further troubles.

To check it on any website, we can follow the format below.

https://<website.com>/robots.txt

For example, check YouTube, Amazon, and Google robots.txt files.

https://www.youtube.com/robots.txt

https://www.amazon.com/robots.txt

https://www.google.com/robots.txt

Checking YouTube robots.txt gives the following result.

# robots.txt file for YouTube

# Created in the distant future (the year 2000) after

# the robotic uprising of the mid-'90s wiped out all humans.

User-agent: Mediapartners-Google*

Disallow:

User-agent: *

Disallow: /channel/*/community

Disallow: /comment

Disallow: /get_video

Disallow: /get_video_info

Disallow: /get_midroll_info

Disallow: /live_chat

Disallow: /login

Disallow: /results

Disallow: /signup

Disallow: /t/terms

Disallow: /timedtext_video

Disallow: /user/*/community

Disallow: /verify_age

Disallow: /watch_ajax

Disallow: /watch_fragments_ajax

Disallow: /watch_popup

Disallow: /watch_queue_ajax

Sitemap: https://www.youtube.com/sitemaps/sitemap.xml

Sitemap: https://www.youtube.com/product/sitemap.xml

We can notice a lot of Disallow directives there. Each Disallow directive names an area of the website that crawlers are asked not to access. robots.txt is advisory rather than enforced by the protocol, but servers may separately block clients that ignore it, for example with a 403 response.

In other robots.txt files, we might also see Allow directives. In the YouTube file above, for example, http://youtube.com/comment is disallowed for every user agent, including clients built on the urllib module.

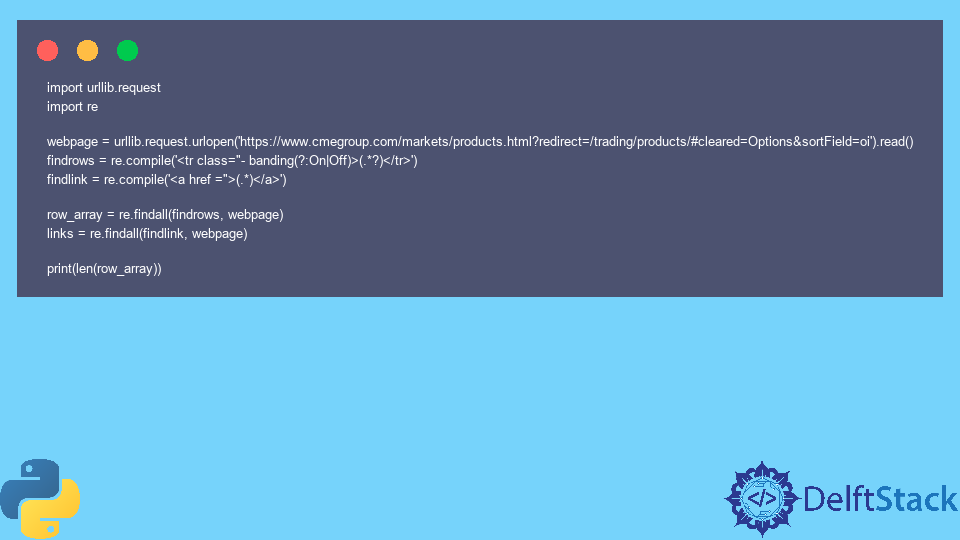

Let’s write code to scrape data from a website that returns an HTTP 403 error when accessed.

Example Code:

import urllib.request

import re

webpage = urllib.request.urlopen('https://www.cmegroup.com/markets/products.html?redirect=/trading/products/#cleared=Options&sortField=oi').read()

findrows = re.compile('<tr class="- banding(?:On|Off)>(.*?)</tr>')

findlink = re.compile('<a href =">(.*)</a>')

row_array = re.findall(findrows, webpage)

links = re.findall(findlink, webpage)

print(len(row_array))

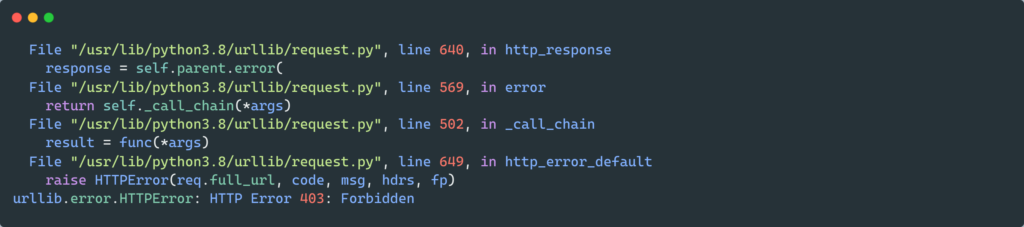

Output:

Traceback (most recent call last):
  File "c:\Users\akinl\Documents\Python\index.py", line 7, in <module>
    webpage = urllib.request.urlopen('https://www.cmegroup.com/markets/products.html?redirect=/trading/products/#cleared=Options&sortField=oi').read()
  File "C:\Python310\lib\urllib\request.py", line 216, in urlopen
    return opener.open(url, data, timeout)
  File "C:\Python310\lib\urllib\request.py", line 525, in open
    response = meth(req, response)
  File "C:\Python310\lib\urllib\request.py", line 634, in http_response
    response = self.parent.error(
  File "C:\Python310\lib\urllib\request.py", line 563, in error
    return self._call_chain(*args)
  File "C:\Python310\lib\urllib\request.py", line 496, in _call_chain
    result = func(*args)
  File "C:\Python310\lib\urllib\request.py", line 643, in http_error_default
    raise HTTPError(req.full_url, code, msg, hdrs, fp)
urllib.error.HTTPError: HTTP Error 403: Forbidden

The reason is that we are forbidden from accessing the website. If we check the robots.txt file, https://www.cmegroup.com/markets/ is not listed under a Disallow directive. Further down the robots.txt file for the website we wanted to scrape, however, we find the following.

User-agent: Python-urllib/1.17

Disallow: /

The above text means that the user agent named Python-urllib is not allowed to crawl any URL within the site. That means using the Python urllib module is not allowed to crawl the site.

Therefore, check or parse robots.txt to learn which resources we may access. We can parse a robots.txt file using the RobotFileParser class in the urllib.robotparser module. Respecting these rules helps our code avoid the urllib.error.HTTPError: HTTP Error 403: Forbidden error message.
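Parsing a robots.txt file with the standard library can be sketched as follows. The rules in SAMPLE_ROBOTS_TXT, the crawler name MyCrawler, and the helper build_parser are made up for illustration and are not taken from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; a real site's robots.txt will differ.
SAMPLE_ROBOTS_TXT = """\
User-agent: Python-urllib
Disallow: /

User-agent: *
Disallow: /private/
"""


def build_parser(robots_text):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)
# Ask whether a given user agent may fetch a given URL under these rules.
print(parser.can_fetch("Python-urllib/3.10", "https://example.com/markets/"))
print(parser.can_fetch("MyCrawler/1.0", "https://example.com/markets/"))
print(parser.can_fetch("MyCrawler/1.0", "https://example.com/private/data"))
```

To check a live site instead, RobotFileParser also offers set_url() and read(), which download the file before parsing it.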

Adding Cookie to the Request Headers to Solve urllib HTTP Error 403 Forbidden Message

Passing a valid user agent as a header parameter will often fix the problem. The website may also use cookies as an anti-scraping measure: it may set cookies and expect them to be echoed back, since scraping may be against its policy.

from urllib.request import Request, urlopen

def get_page_content(url, head):

    req = Request(url, headers=head)

    return urlopen(req)

url = 'https://example.com'

head = {

'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.84 Safari/537.36',

'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9',

'Accept-Charset': 'ISO-8859-1,utf-8;q=0.7,*;q=0.3',

'Accept-Encoding': 'none',

'Accept-Language': 'en-US,en;q=0.8',

'Connection': 'keep-alive',

'Referer': 'https://example.com',

'cookie': """your cookie value ( you can get that from your web page) """

}

data = get_page_content(url, head).read()

print(data)

Output:

<!doctype html>\n<html>\n<head>\n <title>Example Domain</title>\n\n <meta

.

.

.

<p><a href="https://www.iana.org/domains/example">More information...</a></p>\n</div>\n</body>\n</html>\n'


Use Session Object to Solve urllib HTTP Error 403 Forbidden Message

Sometimes, even using a user agent won’t stop this error from occurring. The Session object of the requests module can then be used.


import requests

url = "https://stackoverflow.com/search?q=html+error+403"

session_obj = requests.Session()

response = session_obj.get(url, headers={"User-Agent": "Mozilla/5.0"})

print(response.status_code)

Output:

This article explained the cause of urllib.error.HTTPError: HTTP Error 403: Forbidden and ways to handle it. The error is typically caused by mod_security or a similar mechanism, since websites use various security measures to distinguish human visitors from automated programs (bots).

The urllib.error.httperror: http error 403: forbidden occurs when you try to scrape a webpage using the urllib.request module and mod_security blocks the request. There are several reasons why you get this error. Let’s take a look at each of the use cases in detail.

Websites are usually protected by an application gateway, WAF rules, and similar measures, which monitor whether requests come from actual users or from an automated bot system. mod_security or the WAF rule blocks requests that it treats as spider/bot requests. These security features are among the most common ways to mitigate DDoS attacks on the server.

Coming back to the error: when you make a request to a site using urllib.request without setting any headers, urllib sends a default user agent like Python-urllib/3.x, which is easily detected by mod_security.

mod_security is usually configured so that any request without a valid browser user-agent header is blocked, and the result is urllib.error.httperror: http error 403: forbidden.

Example of 403 forbidden error

from urllib.request import Request, urlopen

req = Request('http://www.cmegroup.com/')

webpage = urlopen(req).read()

Output

File "C:\Users\user\AppData\Local\Programs\Python\Python39\lib\urllib\request.py", line 494, in _call_chain

result = func(*args)

urllib.error.HTTPError: HTTP Error 403: Forbidden

The easy way to resolve the error is by passing a valid user-agent as a header parameter, as shown below.

from urllib.request import Request, urlopen

req = Request('https://www.yahoo.com', headers={'User-Agent': 'Mozilla/5.0'})

webpage = urlopen(req).read()

You can also set a timeout in case the website does not respond. This does not change a 403 response, but Python will raise an exception (a timeout error, possibly wrapped in URLError) if the website doesn’t respond within the given period.

from urllib.request import Request, urlopen

req = Request('http://www.cmegroup.com/', headers={'User-Agent': 'Mozilla/5.0'})

webpage = urlopen(req,timeout=10).read()

In some cases, such as polling a real-time bitcoin or stock market price, you may send requests every second. Servers can block a client when too many requests come from the same IP address, and they may respond with a 403 error.

If you get this error because of too many requests, consider adding a delay between requests.
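Spacing requests out can be sketched with a small helper that pauses between consecutive calls. The function name fetch_politely, the one-second default, and the injectable fetch and sleep parameters are assumptions chosen to keep the example self-contained.

```python
import time


def fetch_politely(urls, fetch, delay_seconds=1.0, sleep=time.sleep):
    """Call fetch(url) for each URL, pausing between consecutive requests."""
    results = []
    for index, url in enumerate(urls):
        if index > 0:
            # Wait before every request except the first one.
            sleep(delay_seconds)
        results.append(fetch(url))
    return results
```

In practice fetch would be a function that wraps urlopen with a User-Agent header; a suitable delay depends on the site and is not something this sketch can determine.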

Srinivas Ramakrishna is a Solution Architect and has 14+ Years of Experience in the Software Industry. He has published many articles on Medium, Hackernoon, dev.to and solved many problems in StackOverflow. He has core expertise in various technologies such as Microsoft .NET Core, Python, Node.JS, JavaScript, Cloud (Azure), RDBMS (MSSQL), React, Powershell, etc.


Python is an easy-to-learn language that is powerful enough for a variety of applications, ranging from AI and machine learning all the way to something as simple as web scraping bots.

That said, random bugs and glitches are still the order of the day in a Python programmer’s life. In this article, we’re talking about the “urllib.error.httperror: HTTP error 403: Forbidden” error when trying to scrape sites with Python and what you can do to fix the problem.


Why does this happen?

While the error can be triggered by anything from a simple runtime error in the script to server issues on the website, the most likely reason is the presence of some sort of server security feature to prevent bots or spiders from crawling the site. In this case, the security feature might be blocking urllib, a library used to send requests to websites.

How to fix this?

Here are two fixes you can try out.

Disable mod_security or equivalent security features

As mentioned before, server-side security features can cause problems with web scrapers. You cannot disable them from the client side, but you can try setting a browser-like user agent as follows to see if that avoids the issue.

from urllib.request import Request, urlopen

req = Request(

url='enter request URL here',

headers={'User-Agent': 'Mozilla/5.0'}

)

webpage = urlopen(req).read()

A correctly defined user agent is often enough to get past this kind of check, though sites may apply other protections as well.

Set a timeout

If you aren’t getting a response, try setting a timeout so that your script does not wait indefinitely for the server.

from urllib.request import Request, urlopen

req = Request('enter request URL here', headers={'User-Agent': 'Mozilla/5.0'})

webpage = urlopen(req, timeout=10).read()

The example above waits at most 10 seconds for the server to respond. The timeout does not space requests out; to avoid overloading the server, add a delay between requests.


Contents

- HOWTO Fetch Internet Resources Using The urllib Package

- Introduction

- Fetching URLs

- Data

- Headers

- Handling Exceptions

- URLError

- HTTPError

- Error Codes

- Wrapping it Up

- Number 1

- Number 2

- info and geturl

- Openers and Handlers

- Basic Authentication

- Proxies

- Sockets and Layers

- Footnotes

HOWTO Fetch Internet Resources Using The urllib Package

There is a French translation of an earlier revision of this HOWTO, available at urllib2 — Le Manuel manquant.

Introduction

You may also find useful the following article on fetching web resources with Python:

A tutorial on Basic Authentication, with examples in Python.

urllib.request is a Python module for fetching URLs (Uniform Resource Locators). It offers a very simple interface, in the form of the urlopen function. This is capable of fetching URLs using a variety of different protocols. It also offers a slightly more complex interface for handling common situations — like basic authentication, cookies, proxies and so on. These are provided by objects called handlers and openers.

urllib.request supports fetching URLs for many “URL schemes” (identified by the string before the «:» in URL — for example «ftp» is the URL scheme of «ftp://python.org/» ) using their associated network protocols (e.g. FTP, HTTP). This tutorial focuses on the most common case, HTTP.

For straightforward situations urlopen is very easy to use. But as soon as you encounter errors or non-trivial cases when opening HTTP URLs, you will need some understanding of the HyperText Transfer Protocol. The most comprehensive and authoritative reference to HTTP is RFC 2616. This is a technical document and not intended to be easy to read. This HOWTO aims to illustrate using urllib, with enough detail about HTTP to help you through. It is not intended to replace the urllib.request docs, but is supplementary to them.

Fetching URLs

The simplest way to use urllib.request is as follows:
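A minimal sketch of that simplest form, wrapped in a hypothetical helper named fetch. A data: URL is used here only so the snippet runs without network access; an http: URL is passed the same way.

```python
import urllib.request


def fetch(url):
    """Open the URL and return the raw response body as bytes."""
    with urllib.request.urlopen(url) as response:
        return response.read()


# A data: URL keeps the demonstration offline.
body = fetch("data:text/plain,hello")
print(body)
```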

If you wish to retrieve a resource via URL and store it in a temporary location, you can do so via the shutil.copyfileobj() and tempfile.NamedTemporaryFile() functions:
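One way to combine those two functions is sketched below. The helper name fetch_to_tempfile is an assumption, and delete=False is chosen so the file survives after it is closed and can be reopened by path.

```python
import shutil
import tempfile
import urllib.request


def fetch_to_tempfile(url):
    """Copy the response body into a named temporary file and return its path."""
    with urllib.request.urlopen(url) as response:
        with tempfile.NamedTemporaryFile(delete=False) as tmp_file:
            shutil.copyfileobj(response, tmp_file)
    return tmp_file.name
```

Because the file is not deleted automatically, the caller is responsible for removing it when it is no longer needed.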

Many uses of urllib will be that simple (note that instead of an 'http:' URL we could have used a URL starting with 'ftp:', 'file:', etc.). However, it’s the purpose of this tutorial to explain the more complicated cases, concentrating on HTTP.

HTTP is based on requests and responses — the client makes requests and servers send responses. urllib.request mirrors this with a Request object which represents the HTTP request you are making. In its simplest form you create a Request object that specifies the URL you want to fetch. Calling urlopen with this Request object returns a response object for the URL requested. This response is a file-like object, which means you can for example call .read() on the response:

Note that urllib.request makes use of the same Request interface to handle all URL schemes. For example, you can make an FTP request like so:

In the case of HTTP, there are two extra things that Request objects allow you to do: First, you can pass data to be sent to the server. Second, you can pass extra information (“metadata”) about the data or about the request itself, to the server — this information is sent as HTTP “headers”. Let’s look at each of these in turn.

Data

Sometimes you want to send data to a URL (often the URL will refer to a CGI (Common Gateway Interface) script or other web application). With HTTP, this is often done using what’s known as a POST request. This is often what your browser does when you submit a HTML form that you filled in on the web. Not all POSTs have to come from forms: you can use a POST to transmit arbitrary data to your own application. In the common case of HTML forms, the data needs to be encoded in a standard way, and then passed to the Request object as the data argument. The encoding is done using a function from the urllib.parse library.
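Encoding form data and attaching it to a Request might look like the sketch below. The URL and field values are placeholders, and build_post_request is a hypothetical helper; the request is only constructed here, not sent.

```python
import urllib.parse
import urllib.request


def build_post_request(url, fields):
    """Encode form fields and return a Request that will be sent as a POST."""
    data = urllib.parse.urlencode(fields).encode("ascii")  # data must be bytes
    return urllib.request.Request(url, data=data)


req = build_post_request(
    "http://www.example.com/cgi-bin/register.cgi",  # placeholder URL
    {"name": "Michael Foord", "language": "Python"},
)
print(req.get_method(), req.data)
```

Passing req to urllib.request.urlopen would then submit the form.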

Note that other encodings are sometimes required (e.g. for file upload from HTML forms — see HTML Specification, Form Submission for more details).

If you do not pass the data argument, urllib uses a GET request. One way in which GET and POST requests differ is that POST requests often have “side-effects”: they change the state of the system in some way (for example by placing an order with the website for a hundredweight of tinned spam to be delivered to your door). Though the HTTP standard makes it clear that POSTs are intended to always cause side-effects, and GET requests never to cause side-effects, nothing prevents a GET request from having side-effects, nor a POST requests from having no side-effects. Data can also be passed in an HTTP GET request by encoding it in the URL itself.

This is done as follows:
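A minimal sketch, again with a hypothetical URL and made-up values:

```python
import urllib.parse
import urllib.request

base_url = "http://www.example.com/example.cgi"  # hypothetical endpoint
values = {"name": "Ada", "language": "Python"}   # made-up query values

# Encode the values and append them to the URL as a query string.
full_url = base_url + "?" + urllib.parse.urlencode(values)
req = urllib.request.Request(full_url)

print(full_url)
# No data argument was given, so this is still a GET request.
print(req.get_method())
```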

Notice that the full URL is created by adding a ? to the URL, followed by the encoded values.

We’ll discuss here one particular HTTP header, to illustrate how to add headers to your HTTP request.

Some websites [1] dislike being browsed by programs, or send different versions to different browsers [2]. By default urllib identifies itself as Python-urllib/x.y (where x and y are the major and minor version numbers of the Python release, e.g. Python-urllib/2.5), which may confuse the site, or just plain not work. The way a browser identifies itself is through the User-Agent header [3]. When you create a Request object you can pass a dictionary of headers in. The following example makes the same request as above, but identifies itself as a version of Internet Explorer [4].
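This is the part that matters for the 403 in the question. Since urlretrieve takes no headers argument, one possible workaround is to build a Request with a browser-like User-Agent and copy the response to a file yourself. The sketch below uses the 'Mozilla/5.0' string from the answer above rather than an Internet Explorer string; `build_request` and `download` are hypothetical helper names, and whether a given site accepts this User-Agent is an assumption.

```python
import shutil
import urllib.request


def build_request(url, user_agent="Mozilla/5.0"):
    """Build a Request that sends a browser-like User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def download(url, filename, user_agent="Mozilla/5.0"):
    """Save the resource at `url` to `filename`, sending the custom header."""
    req = build_request(url, user_agent)
    with urllib.request.urlopen(req) as response, open(filename, "wb") as out:
        # Stream the body to disk instead of loading it all into memory.
        shutil.copyfileobj(response, out)


# Example call (needs network access):
# download("http://mangadoom.co/wp-content/manga/5170/886/005.png", "my_img.png")
```

Request normalises header names with str.capitalize(), so the header is stored as "User-agent"; the server still receives a valid User-Agent header because HTTP header names are case-insensitive.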

The response also has two useful methods. See the section on info and geturl which comes after we have a look at what happens when things go wrong.

Handling Exceptions¶

urlopen raises URLError when it cannot handle a response (though as usual with Python APIs, built-in exceptions such as ValueError, TypeError etc. may also be raised).

HTTPError is the subclass of URLError raised in the specific case of HTTP URLs.

The exception classes are exported from the urllib.error module.

URLError¶

Often, URLError is raised because there is no network connection (no route to the specified server), or the specified server doesn’t exist. In this case, the exception raised will have a ‘reason’ attribute, which in Python 3 is a message string or another exception instance describing the failure.
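A small sketch of catching URLError and inspecting its reason. The `try_fetch` helper is a hypothetical name, and returning a tuple is just one way to surface the failure.

```python
import urllib.error
import urllib.request


def try_fetch(url):
    """Return (body, None) on success or (None, reason) if URLError is raised."""
    try:
        with urllib.request.urlopen(url) as response:
            return response.read(), None
    except urllib.error.URLError as e:
        # e.reason explains why the request could not be completed.
        return None, e.reason


# Example call (needs network access); a non-existent host would
# typically come back as (None, <some OSError instance>):
# body, reason = try_fetch("http://www.pretend-server.invalid/")
```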

HTTPError¶

Every HTTP response from the server contains a numeric “status code”. Sometimes the status code indicates that the server is unable to fulfil the request. The default handlers will handle some of these responses for you (for example, if the response is a “redirection” that requests the client fetch the document from a different URL, urllib will handle that for you). For those it can’t handle, urlopen will raise an HTTPError. Typical errors include ‘404’ (page not found), ‘403’ (request forbidden), and ‘401’ (authentication required).

See section 10 of RFC 2616 for a reference on all the HTTP error codes.

The HTTPError instance raised will have an integer ‘code’ attribute, which corresponds to the error sent by the server.

Error Codes¶

Because the default handlers handle redirects (codes in the 300 range), and codes in the 100–299 range indicate success, you will usually only see error codes in the 400–599 range.

http.server.BaseHTTPRequestHandler.responses is a useful dictionary that shows all the response codes used by RFC 2616. The dictionary is reproduced here for convenience.
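Rather than reading the table, you can also query the dictionary directly. In this sketch each value is treated as a (short phrase, longer description) pair; `explain` is a hypothetical helper name.

```python
from http.server import BaseHTTPRequestHandler

# Maps each status code to a (short phrase, longer description) pair.
responses = BaseHTTPRequestHandler.responses


def explain(code):
    """Return a one-line description of an HTTP status code."""
    if code in responses:
        phrase, description = responses[code]
        return "{} {}: {}".format(code, phrase, description)
    return "{} (unknown status code)".format(code)


for code in (401, 403, 404):
    print(explain(code))
```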

When an error occurs, the server responds by returning an HTTP error code and an error page. You can use the HTTPError instance as a response object for the page returned. This means that as well as the code attribute, it also has read, geturl, and info methods, as returned by the urllib.response module.
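To illustrate, the sketch below reads the status code, the reason and the error page from an HTTPError. So that it runs without a network, it builds an HTTPError by hand; in real code the instance would come from an `except urllib.error.HTTPError as e:` clause around urlopen. `describe_http_error` is a hypothetical helper name.

```python
import io
import urllib.error


def describe_http_error(e):
    """Return (status code, reason, start of the error page) for an HTTPError."""
    body = e.read()  # the error page sent by the server, as bytes
    return e.code, e.reason, body[:60]


# Offline demonstration: a hand-built error standing in for a server response.
fake = urllib.error.HTTPError(
    "http://www.example.com/missing",  # hypothetical URL
    404,
    "Not Found",
    None,                              # no headers in this stand-in
    io.BytesIO(b"<html>gone</html>"),
)
print(describe_http_error(fake))
```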

Wrapping it Up¶

So if you want to be prepared for HTTPError or URLError there are two basic approaches. I prefer the second approach.

Number 1¶

The except HTTPError must come first, otherwise except URLError will also catch an HTTPError.
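A sketch of this first approach, with separate except clauses in the order described. `fetch_v1` is a hypothetical name, and printing the error is only one way to react to it.

```python
from urllib.error import HTTPError, URLError
from urllib.request import Request, urlopen


def fetch_v1(url):
    """Return the body as bytes, or None if the request failed."""
    req = Request(url)
    try:
        response = urlopen(req)
    except HTTPError as e:
        # The server was reached but answered with an error status.
        print("The server couldn't fulfil the request. Error code:", e.code)
    except URLError as e:
        # The server could not be reached at all.
        print("We failed to reach a server. Reason:", e.reason)
    else:
        with response:
            return response.read()
    return None
```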

Number 2¶
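One way to write the second approach is a single except URLError clause that inspects the exception. In Python 3 an HTTPError also carries a reason attribute, so this sketch tests for code first to tell the two cases apart. `fetch_v2` is a hypothetical name.

```python
from urllib.error import URLError
from urllib.request import Request, urlopen


def fetch_v2(url):
    """Return the body as bytes, or None if the request failed."""
    try:
        response = urlopen(Request(url))
    except URLError as e:
        if hasattr(e, "code"):
            # Only HTTPError (a subclass of URLError) has a status code.
            print("The server couldn't fulfil the request. Error code:", e.code)
        else:
            print("We failed to reach a server. Reason:", e.reason)
        return None
    with response:
        return response.read()
```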

info and geturl¶

The response returned by urlopen (or the HTTPError instance) has two useful methods, info() and geturl(), and is defined in the module urllib.response.

geturl — this returns the real URL of the page fetched. This is useful because urlopen (or the opener object used) may have followed a redirect. The URL of the page fetched may not be the same as the URL requested.

info — this returns a dictionary-like object that describes the page fetched, particularly the headers sent by the server. It is currently an http.client.HTTPMessage instance.

Typical headers include ‘Content-length’, ‘Content-type’, and so on. See the Quick Reference to HTTP Headers for a useful listing of HTTP headers with brief explanations of their meaning and use.
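A brief sketch of both methods. `describe` is a hypothetical helper, and Content-Type is just one header picked for illustration.

```python
import urllib.request


def describe(url):
    """Return (final URL, Content-Type header) for the resource at `url`."""
    with urllib.request.urlopen(url) as response:
        final_url = response.geturl()  # may differ from `url` after a redirect
        headers = response.info()      # dictionary-like header object
        return final_url, headers.get("Content-Type")


# Example call (needs network access):
# print(describe("http://www.example.com/"))
```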

Openers and Handlers¶

When you fetch a URL you use an opener (an instance of the perhaps confusingly named urllib.request.OpenerDirector). Normally we have been using the default opener, via urlopen, but you can create custom openers. Openers use handlers. All the “heavy lifting” is done by the handlers. Each handler knows how to open URLs for a particular URL scheme (http, ftp, etc.), or how to handle an aspect of URL opening, for example HTTP redirections or HTTP cookies.

You will want to create openers if you want to fetch URLs with specific handlers installed, for example to get an opener that handles cookies, or to get an opener that does not handle redirections.

To create an opener, instantiate an OpenerDirector, and then call .add_handler(some_handler_instance) repeatedly.

Alternatively, you can use build_opener, which is a convenience function for creating opener objects with a single function call. build_opener adds several handlers by default, but provides a quick way to add more and/or override the default handlers.

Other sorts of handlers you might want can handle proxies, authentication, and other common but slightly specialised situations.

install_opener can be used to make an opener object the (global) default opener. This means that calls to urlopen will use the opener you have installed.

Opener objects have an open method, which can be called directly to fetch URLs in the same way as the urlopen function: there’s no need to call install_opener, except as a convenience.
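For the download problem in the question, an opener gives another possible fix: set a default User-Agent on the opener and install it, after which urlopen and urlretrieve both go through it. This is a sketch; `make_opener` is a hypothetical helper, and 'Mozilla/5.0' is the string suggested in the answer above, not a value any particular site is known to require.

```python
import urllib.request


def make_opener(user_agent="Mozilla/5.0"):
    """Build an opener that sends `user_agent` with every HTTP request."""
    opener = urllib.request.build_opener()
    # addheaders replaces the default "Python-urllib/x.y" User-Agent.
    opener.addheaders = [("User-Agent", user_agent)]
    return opener


opener = make_opener()

# Option 1: use the opener directly (needs network access):
# with opener.open("http://www.example.com/") as response:
#     data = response.read()

# Option 2: install it globally so urlopen and urlretrieve use it too.
urllib.request.install_opener(opener)
# urllib.request.urlretrieve(img_url, img_filename)  # names from the question
```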

Basic Authentication¶

To illustrate creating and installing a handler we will use the HTTPBasicAuthHandler. For a more detailed discussion of this subject, including an explanation of how Basic Authentication works, see the Basic Authentication Tutorial.

When authentication is required, the server sends a header (as well as the 401 error code) requesting authentication. This specifies the authentication scheme and a ‘realm’. The header looks like: WWW-Authenticate: SCHEME realm="REALM".

The client should then retry the request with the appropriate name and password for the realm included as a header in the request. This is ‘basic authentication’. In order to simplify this process we can create an instance of HTTPBasicAuthHandler and an opener to use this handler.

The HTTPBasicAuthHandler uses an object called a password manager to handle the mapping of URLs and realms to passwords and usernames. If you know what the realm is (from the authentication header sent by the server), then you can use a HTTPPasswordMgr. Frequently one doesn’t care what the realm is. In that case, it is convenient to use HTTPPasswordMgrWithDefaultRealm. This allows you to specify a default username and password for a URL. This will be supplied in the absence of you providing an alternative combination for a specific realm. We indicate this by providing None as the realm argument to the add_password method.

The top-level URL is the first URL that requires authentication. URLs “deeper” than the URL you pass to .add_password() will also match.
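The steps above can be sketched as follows. The URL and the credentials are placeholders.

```python
import urllib.request

# Password manager with a default realm (realm=None matches any realm).
password_mgr = urllib.request.HTTPPasswordMgrWithDefaultRealm()

top_level_url = "http://example.com/foo/"  # placeholder URL
password_mgr.add_password(None, top_level_url, "username", "password")

handler = urllib.request.HTTPBasicAuthHandler(password_mgr)

# Create an opener that uses the authentication handler.
opener = urllib.request.build_opener(handler)

# Use the opener directly (needs network access):
# opener.open("http://example.com/foo/bar.html")

# Or install it so that plain urlopen calls use it as well:
# urllib.request.install_opener(opener)
```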

In the above example we only supplied our HTTPBasicAuthHandler to build_opener. By default openers have the handlers for normal situations: ProxyHandler (if a proxy setting such as an http_proxy environment variable is set), UnknownHandler, HTTPHandler, HTTPDefaultErrorHandler, HTTPRedirectHandler, FTPHandler, FileHandler, DataHandler, HTTPErrorProcessor.

top_level_url is in fact either a full URL (including the ‘http:’ scheme component and the hostname and optionally the port number), e.g. "http://example.com/", or an “authority” (i.e. the hostname, optionally including the port number), e.g. "example.com" or "example.com:8080" (the latter example includes a port number). The authority, if present, must NOT contain the “userinfo” component; for example, "joe:password@example.com" is not correct.

Proxies¶

urllib will auto-detect your proxy settings and use those. This is through the ProxyHandler, which is part of the normal handler chain when a proxy setting is detected. Normally that’s a good thing, but there are occasions when it may not be helpful [5]. One way to disable it is to set up our own ProxyHandler with no proxies defined. This is done using similar steps to setting up a Basic Authentication handler.
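A minimal sketch of that setup. Passing an empty dictionary tells ProxyHandler to use no proxies at all instead of the auto-detected ones.

```python
import urllib.request

# An empty mapping means "no proxies", overriding any detected settings.
proxy_support = urllib.request.ProxyHandler({})
opener = urllib.request.build_opener(proxy_support)

# Optional: make this the default for urlopen as well.
urllib.request.install_opener(opener)
```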

Currently urllib.request does not support fetching of https locations through a proxy. However, this can be enabled by extending urllib.request as shown in the recipe [6].

HTTP_PROXY will be ignored if a variable REQUEST_METHOD is set; see the documentation on getproxies().

Sockets and Layers¶

The Python support for fetching resources from the web is layered. urllib uses the http.client library, which in turn uses the socket library.

As of Python 2.3 you can specify how long a socket should wait for a response before timing out. This can be useful in applications which have to fetch web pages. By default the socket module has no timeout and can hang. urlopen accepts an optional timeout argument for individual calls; you can also set the default timeout globally for all sockets using socket.setdefaulttimeout().
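A short sketch of the global setting. The 10-second value and the URL are arbitrary choices for illustration.

```python
import socket
import urllib.request

# Timeout in seconds, applied to every socket created from now on.
timeout = 10
socket.setdefaulttimeout(timeout)

# This request would now use the default timeout set above.
req = urllib.request.Request("http://www.example.com/")
# response = urllib.request.urlopen(req)  # needs network access

# A per-call alternative that leaves the global default untouched:
# response = urllib.request.urlopen(req, timeout=10)
```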

This document was reviewed and revised by John Lee.

[1] Google, for example.

[2] Browser sniffing is a very bad practice for website design; building sites using web standards is much more sensible. Unfortunately a lot of sites still send different versions to different browsers.

[3] The user agent for MSIE 6 is ‘Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; .NET CLR 1.1.4322)’

[4] For details of more HTTP request headers, see Quick Reference to HTTP Headers.

[5] In my case I have to use a proxy to access the internet at work. If you attempt to fetch localhost URLs through this proxy it blocks them. IE is set to use the proxy, which urllib picks up on. In order to test scripts with a localhost server, I have to prevent urllib from using the proxy.

[6] urllib opener for SSL proxy (CONNECT method): ASPN Cookbook Recipe.
